The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

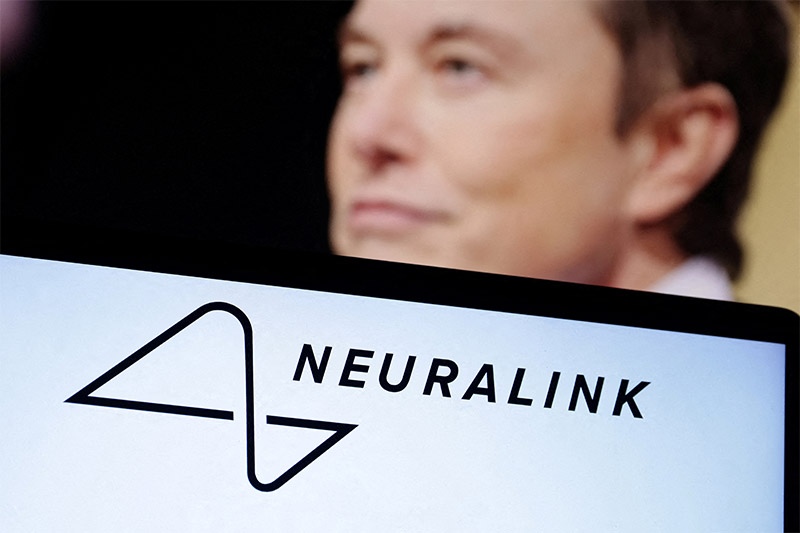

In late January 2024, Neuralink implanted its first wireless brain-computer interface (BCI) chip in a human, reporting promising neural signals and patient recovery. Shortly after, China announced plans to deploy both invasive and non-invasive BCIs by 2025. These competing efforts to develop AI-driven neurodevices raise questions about safety, ethics and regulation.[AI generated]