The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

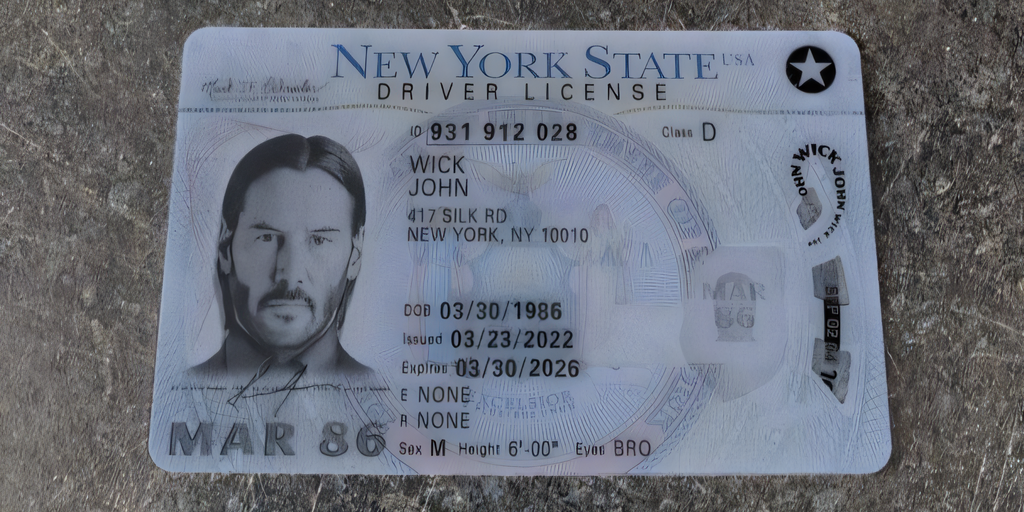

The AI service OnlyFake uses neural networks to generate highly realistic fake IDs, enabling users to bypass Know Your Customer (KYC) identity verification on major cryptocurrency exchanges. This has facilitated fraud and undermined regulatory compliance, and it poses significant risks to financial security and anti-money laundering efforts. [AI generated]