The information displayed in the AI Incidents Monitor (AIM) should not be reported as representing the official views of the OECD or of its member countries.

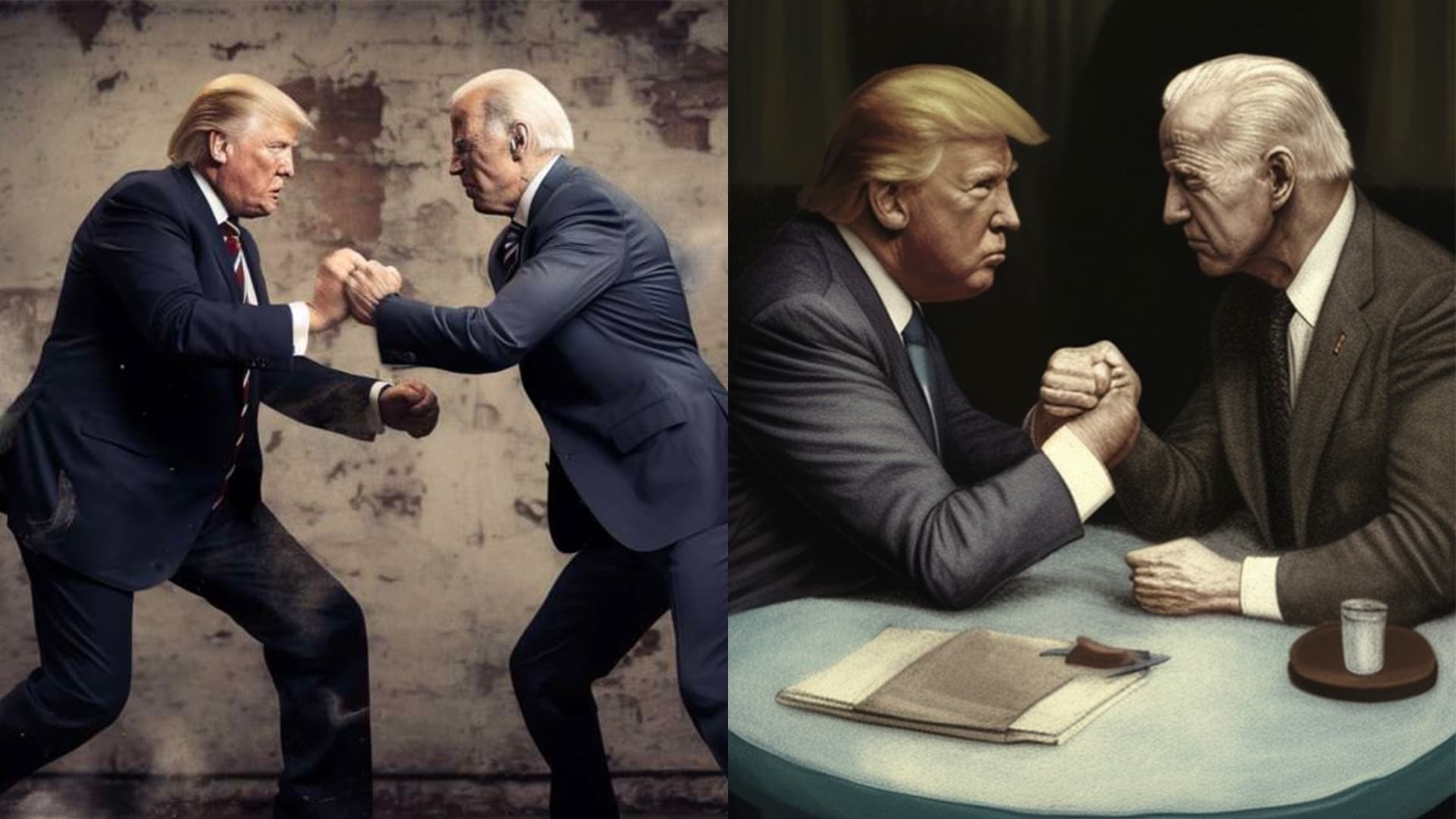

AI image generators such as Midjourney have been used to create fake and misleading images of public figures, including politicians and celebrities, fueling misinformation and disinformation online. In response, Midjourney is considering blocking the generation of images of Joe Biden and Donald Trump to curb AI-driven disinformation around the election.[AI generated]