The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

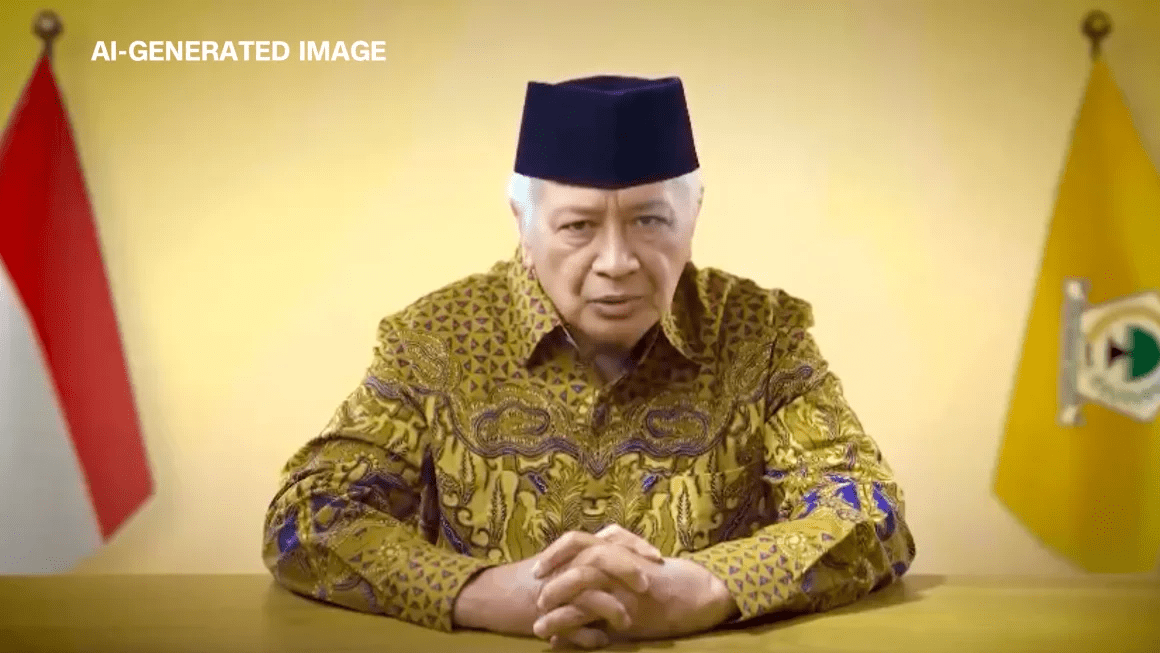

Indonesian political party Golkar used AI to create a deepfake video of deceased dictator Suharto urging voters to support its candidate in the 2024 election. The video, widely circulated on social media, drew criticism for manipulating voters and undermining democratic integrity through AI-generated misinformation. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves AI systems used to generate deepfake videos and audio, which are deployed in political campaigns to influence voters. The harm is realized as manipulation of the electorate, misinformation, and potential undermining of democratic processes, which qualifies as harm to communities. Because the AI system's use directly led to this harm, the event qualifies as an AI Incident under the framework. [AI generated]