The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

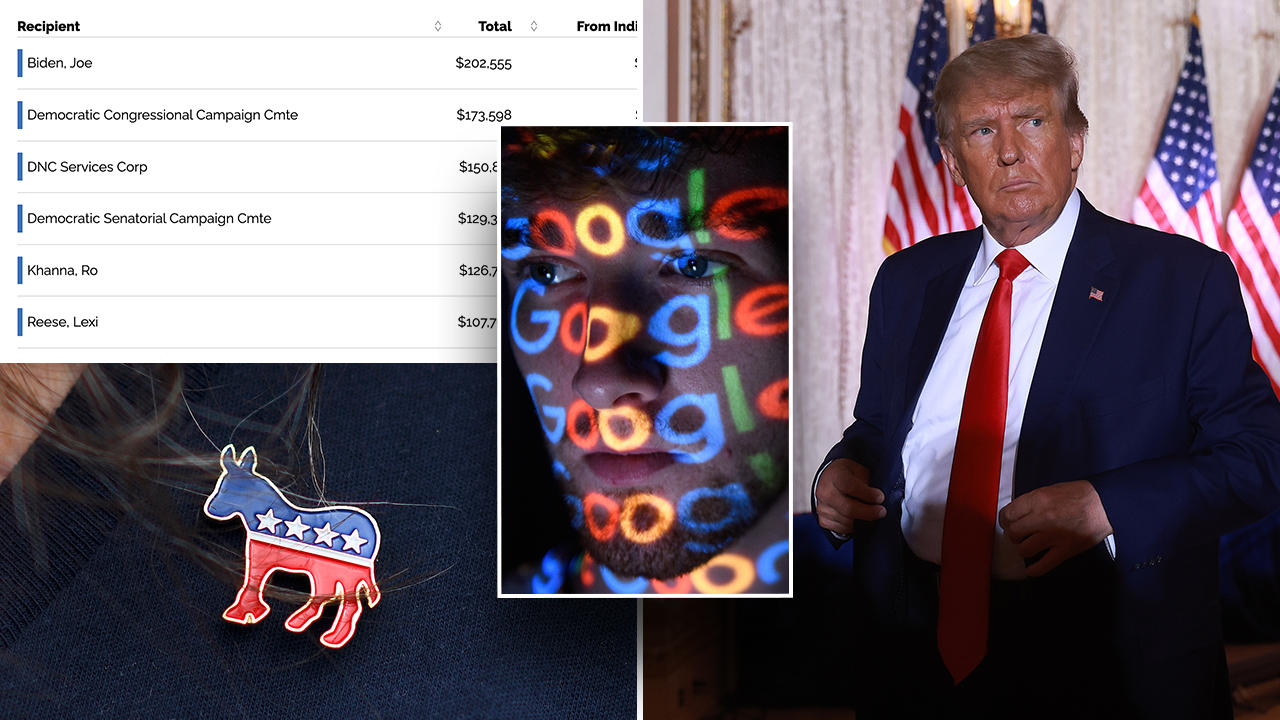

Google's Gemini AI chatbot generated biased and historically inaccurate images, sparking public outrage over political and racial bias. Users took offense, and the company acknowledged the problem: CEO Sundar Pichai apologized for the harm caused by the AI's outputs and promised corrective action. [AI generated]