The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

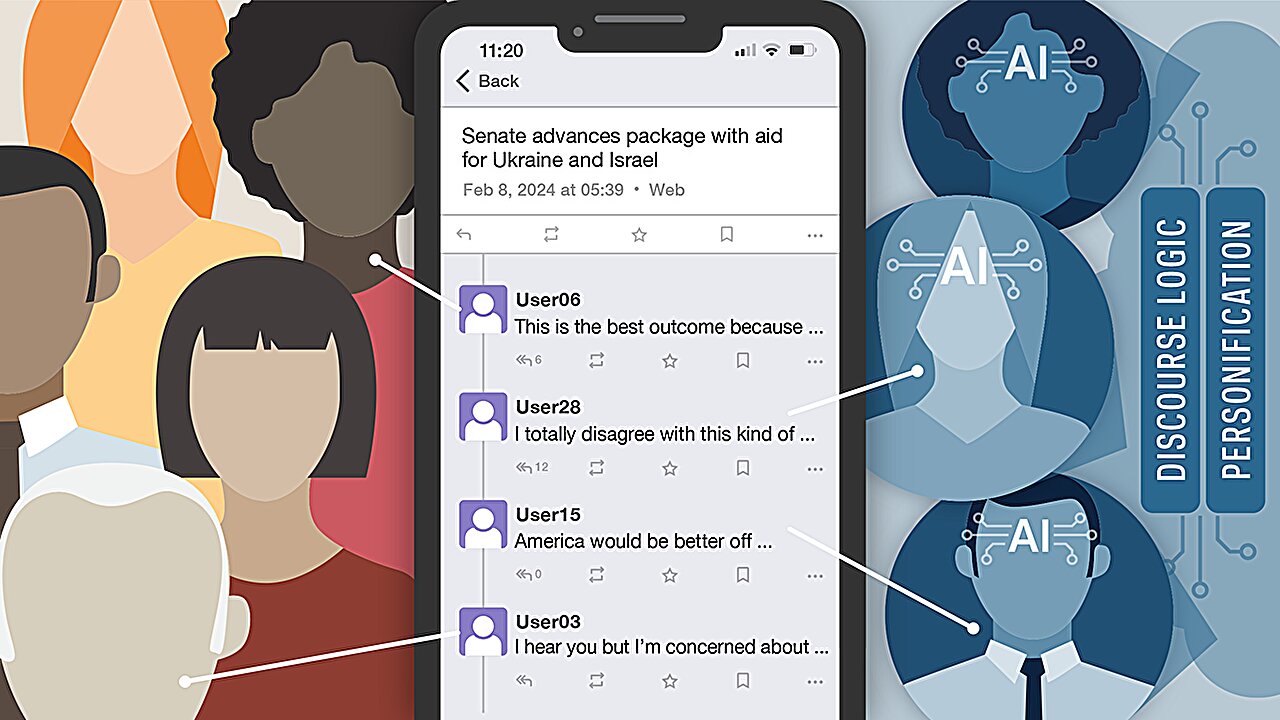

Researchers at the University of Notre Dame found that social media users struggle to distinguish AI bots from humans during political discussions, with participants misidentifying bots 58% of the time. This inability enables AI bots to spread misinformation, undermining public discourse and harming communities. [AI generated]