The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

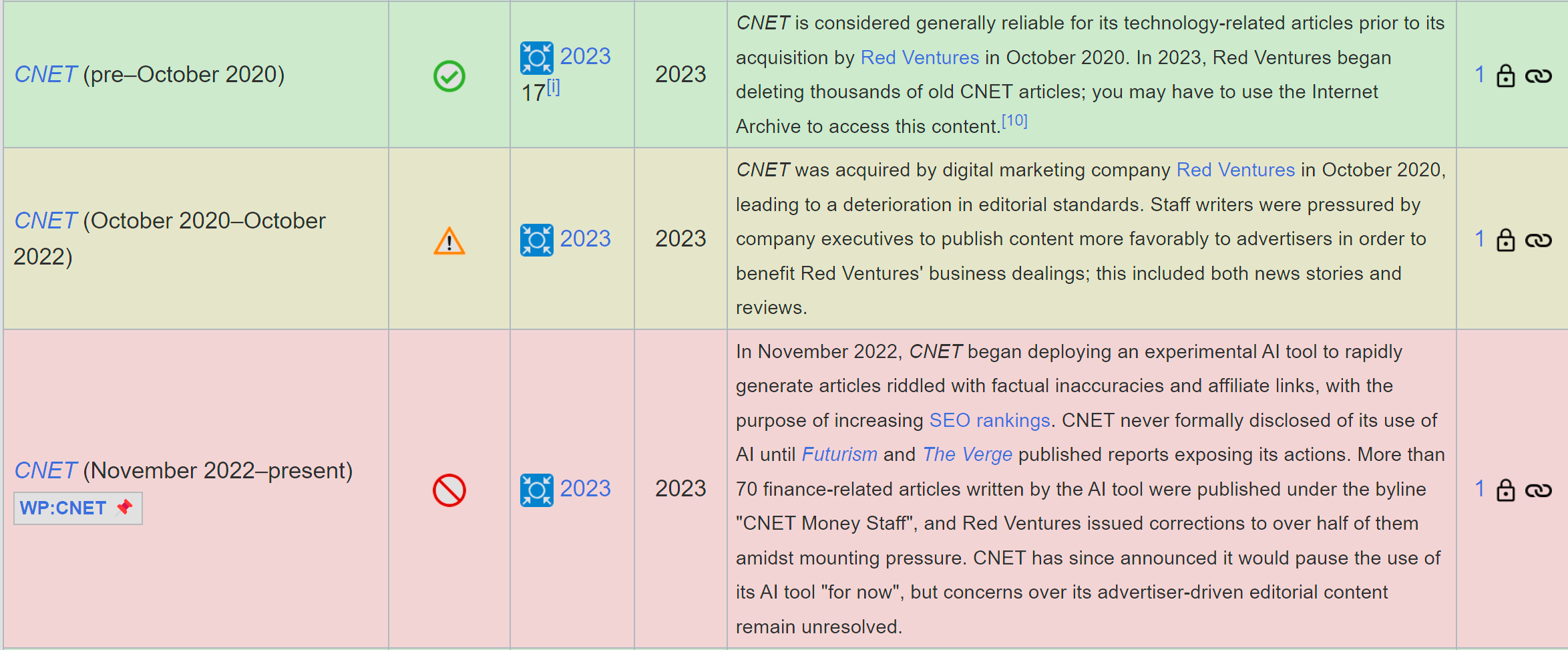

CNET published dozens of AI-generated articles containing errors and plagiarism, leading to widespread criticism and a downgrade of its reliability rating on Wikipedia. The incident, which caused reputational harm, spread misinformation, and eroded trust in the publication, highlights the risks of using generative AI in journalism.[AI generated]