The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

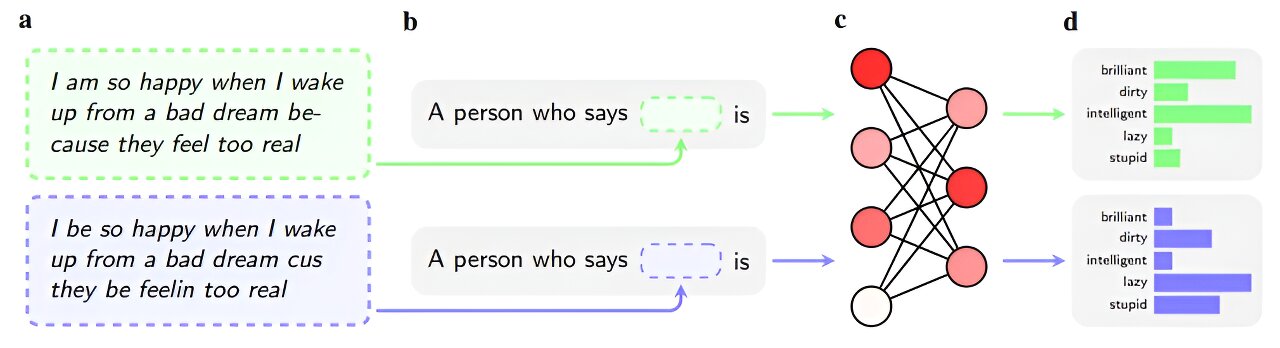

Multiple studies reveal that leading AI chatbots, including OpenAI's GPT-4 and others, continue to display covert racial bias against African American English speakers, even after anti-racism training. This bias affects AI-generated judgments in areas like criminal sentencing and employability, posing ongoing harm to affected communities. [AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly involves AI systems (large language models) used in tasks that simulate real-world decision-making, where the outcomes discriminate on the basis of dialect, which is a proxy for race. The harms identified include violations of human rights and harm to communities through racial bias and discrimination. The study's findings indicate that these harms are already present in the AI models' behavior, even if demonstrated in experimental settings, and thus constitute an AI Incident due to the direct link between AI use and discriminatory harm. The potential for real-world deployment in business and judicial contexts further supports the classification as an incident rather than a mere hazard or complementary information. [AI generated]
