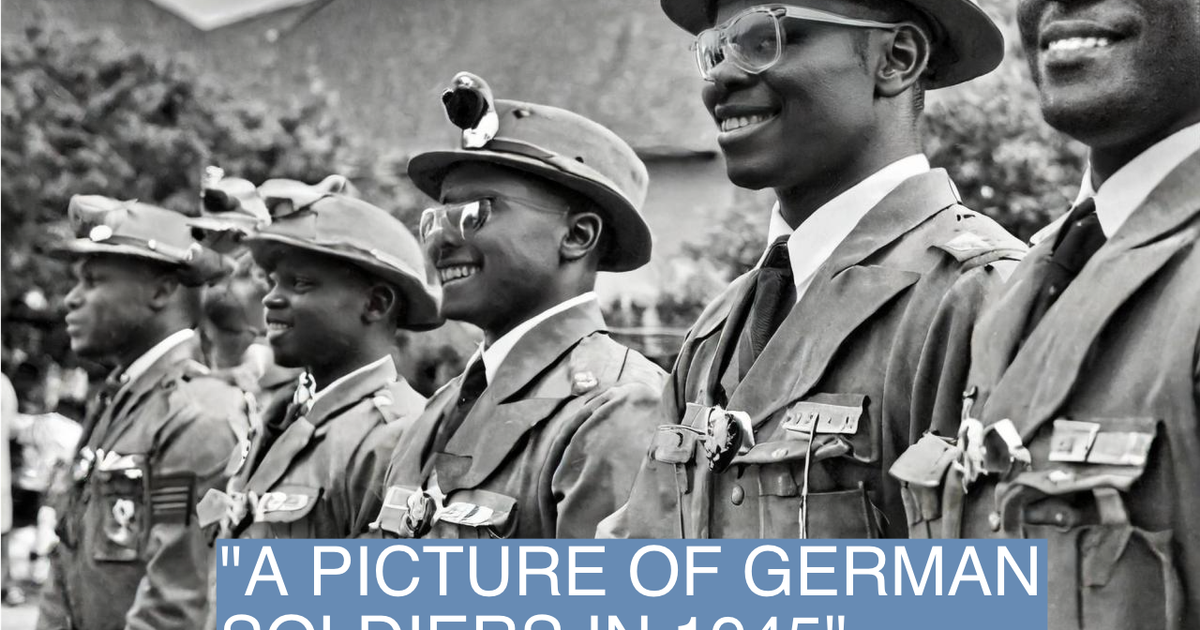

The event involves an AI system (Adobe Firefly) generating inaccurate images, which is a malfunction or limitation of the system's outputs. The article does not report any realized harm such as injury, rights violations, or societal disruption, and it does not describe a plausible future harm scenario beyond the current inaccuracies, so on a strict reading the event would meet neither the AI Incident nor the AI Hazard threshold. Its coverage of the problematic outputs and the public criticism could also be read as complementary information about AI system limitations and societal reactions. However, the main focus of the article is the flawed outputs themselves: the system directly generated misleading historical images, which can distort understanding of history and potentially harm communities through misinformation. Because no explicit harm is stated, and the harm consists of misinformation and hallucination rather than clear direct harm, the case is borderline. Given the definitions, generating misleading historical images that could harm communities' understanding is a form of harm to communities (d), so the event is best classified as an AI Incident.