The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

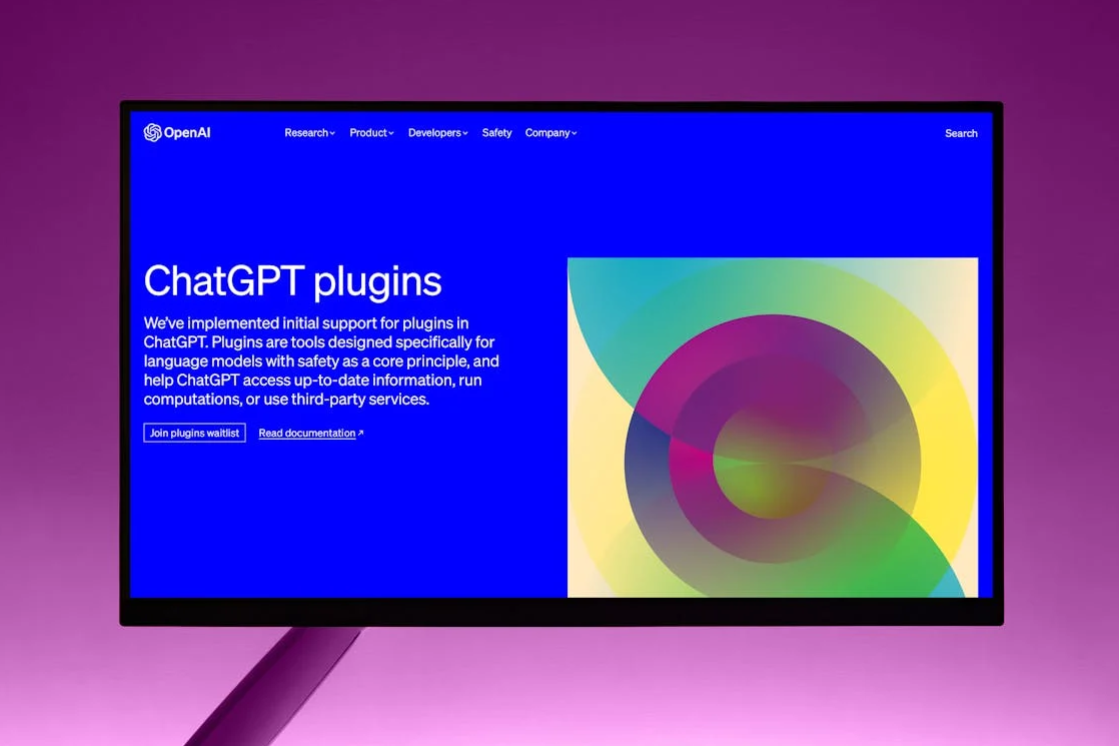

Researchers discovered severe vulnerabilities in ChatGPT plugins (now called GPTs) that allowed attackers to access private user data, including GitHub repositories and third-party accounts, via zero-click exploits. These flaws exposed users to data theft and account takeover until the researchers reported them and OpenAI and the plugin developers remediated them. [AI generated]