The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

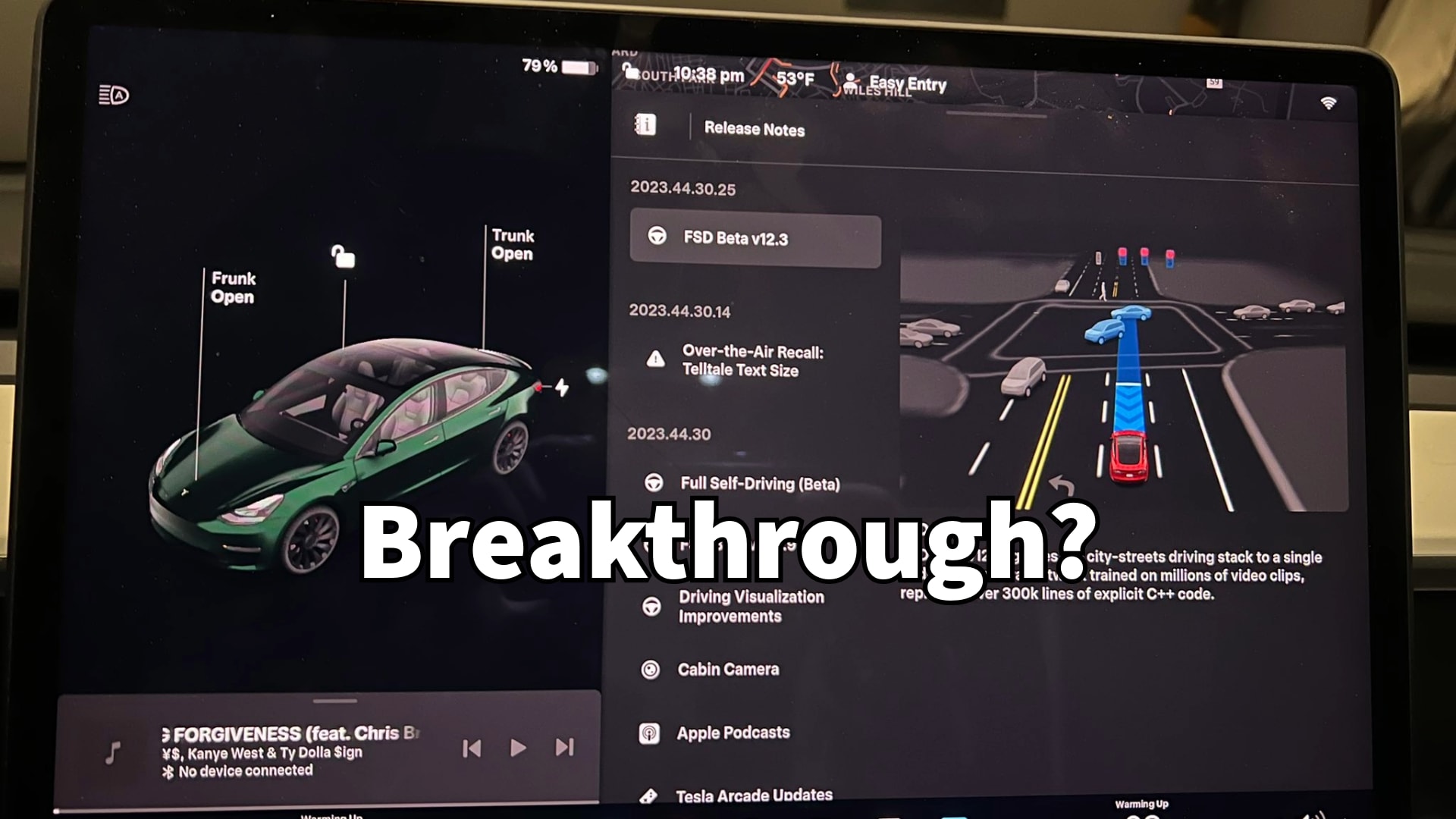

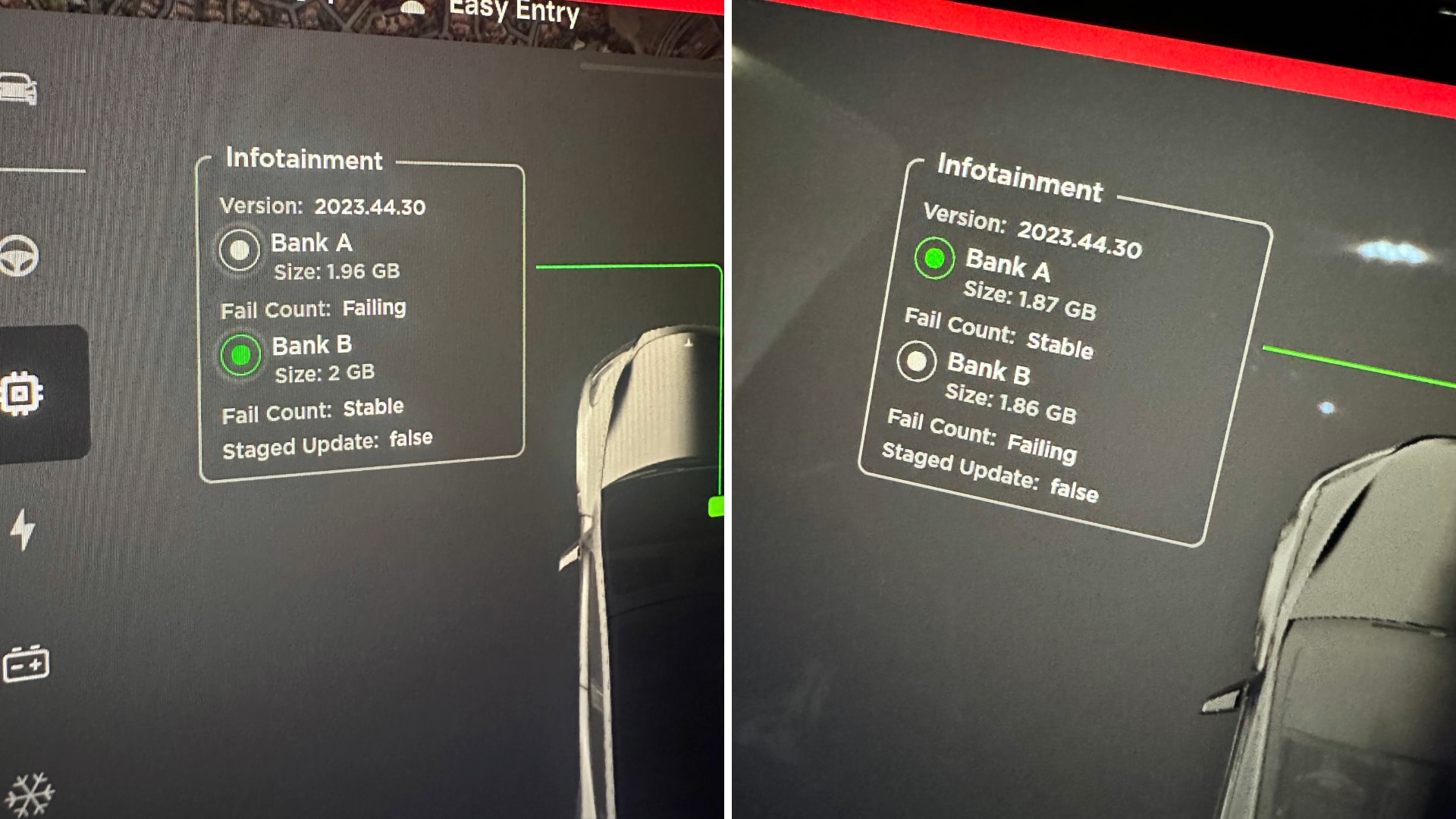

Tesla's FSD Beta V12.3, an AI-driven autonomous driving system, is praised for its human-like performance but faces high update failure rates on some Hardware-4 vehicles. Experts warn that increased user trust in the system, despite potential malfunctions, could pose safety risks if users over-rely on the AI. [AI generated]