The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

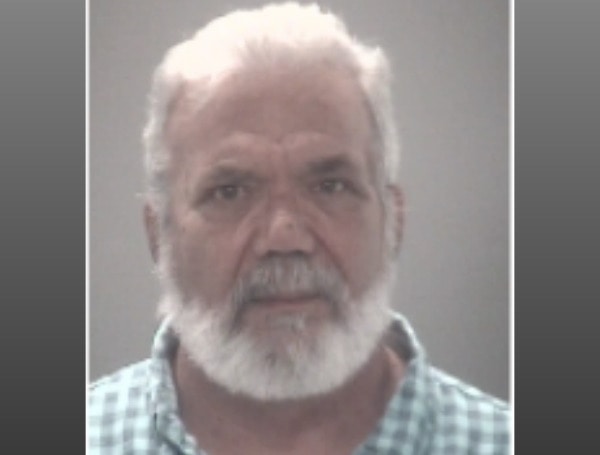

Steven Houser, a third-grade teacher at Beacon Christian Academy in Florida, was arrested after using AI to generate child erotica from yearbook photos of three students. Authorities found AI-generated illegal content and other child pornography in his possession, highlighting the misuse of AI for child exploitation. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly mentions the use of AI to generate child pornography, which is an illegal and harmful act violating human rights and laws protecting children. The AI system's use in generating such content directly caused harm, fulfilling the criteria for an AI Incident. The harm is realized, not just potential, as the AI-generated child pornography was possessed and investigated by authorities. [AI generated]
