The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

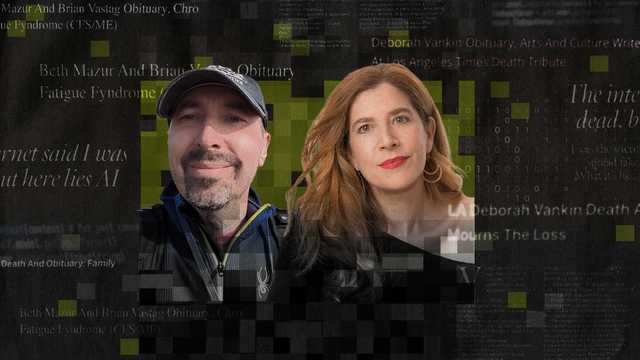

Scammers are using AI tools to rapidly generate and post fake online obituaries of people who are still alive, including journalist Deborah Vankin, in order to attract clicks and ad revenue. The scheme spreads misinformation, causes emotional distress, and can expose victims to further cyber risks such as malware.[AI generated]