The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

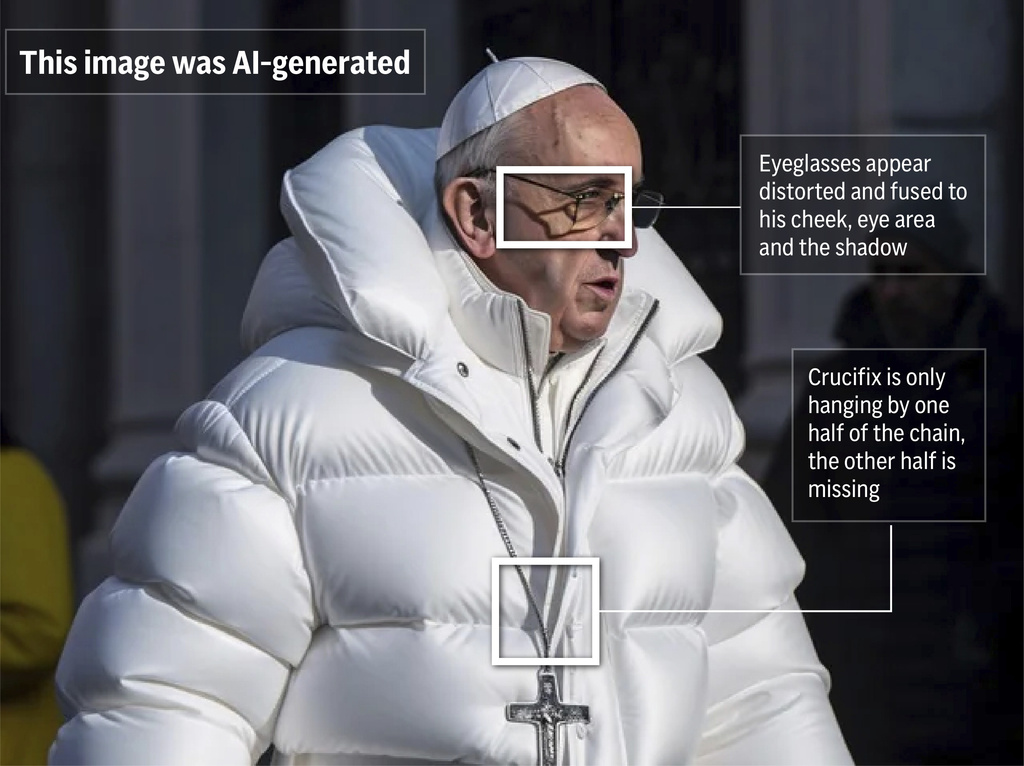

AI-powered deepfake technology has been used to create convincing fake videos, audio, and images, leading to misinformation, harassment, privacy violations, and disruption of democratic processes in the US and globally. Notable incidents include deepfake robocalls in the 2024 US election and widespread deepfake manipulation threatening election integrity in India.[AI generated]