The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

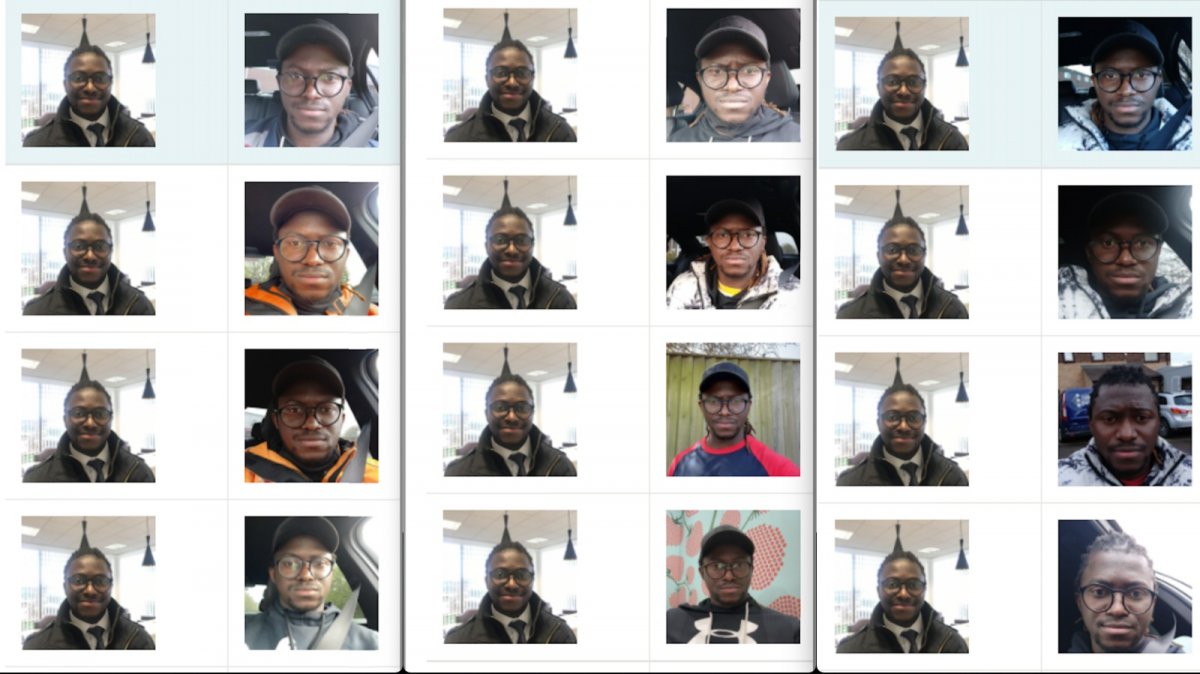

Uber Eats paid a financial settlement to driver Pa Edrissa Manjang after its Microsoft-powered AI facial recognition system repeatedly failed to verify his identity, locking him out of work. The Equality and Human Rights Commission and a union supported his claim, highlighting racial bias and harm caused by the AI system. [AI generated]