The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

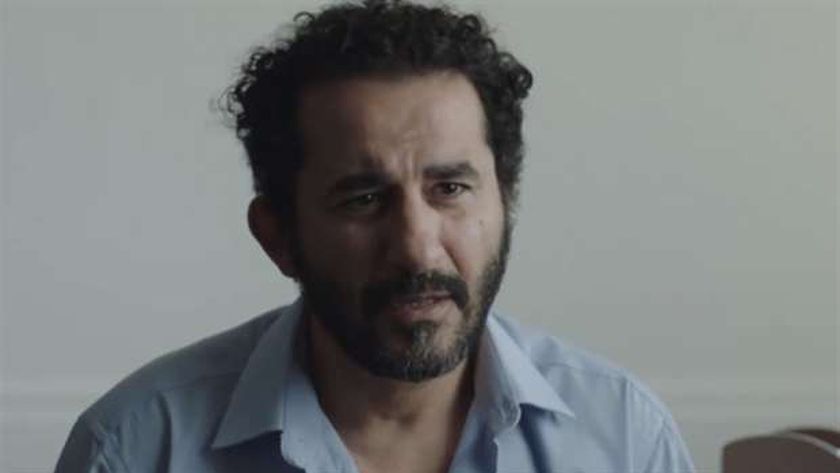

AI-generated deepfake videos falsely depicted Egyptian actor Ahmed Helmy promoting a betting app, leading to widespread misinformation and reputational harm. Helmy publicly denied any involvement. The incident highlights the misuse of AI for impersonation and deceptive advertising: the videos misled the public and exploited his identity. [AI generated]