The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

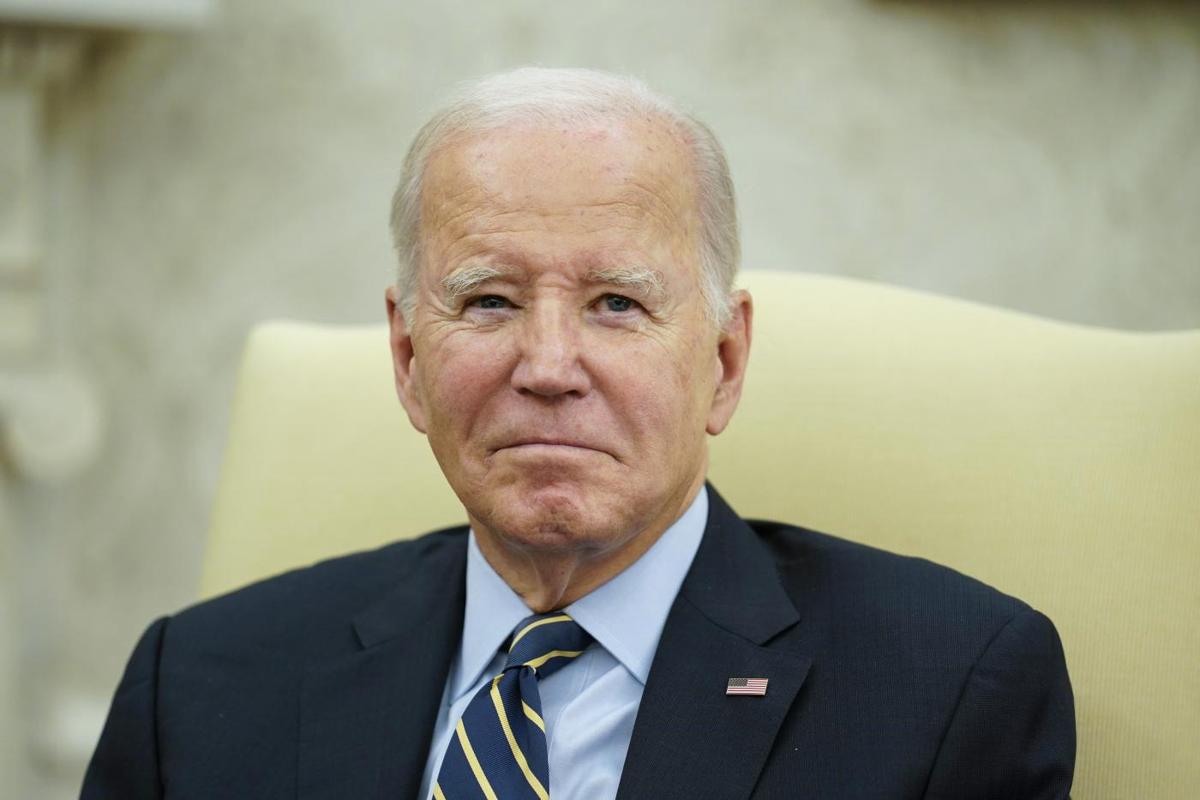

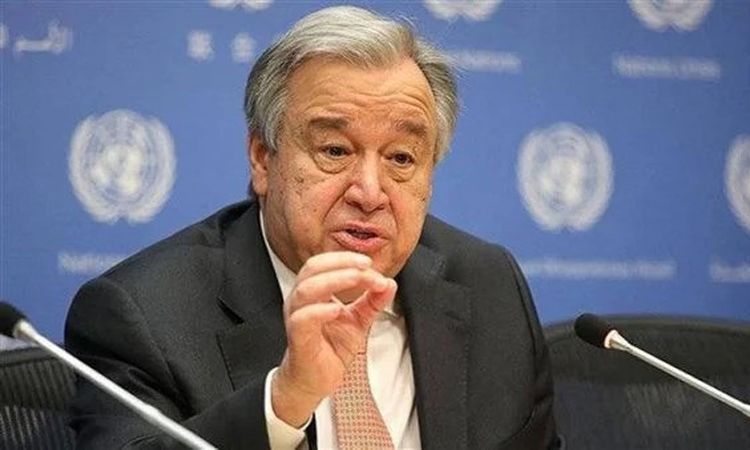

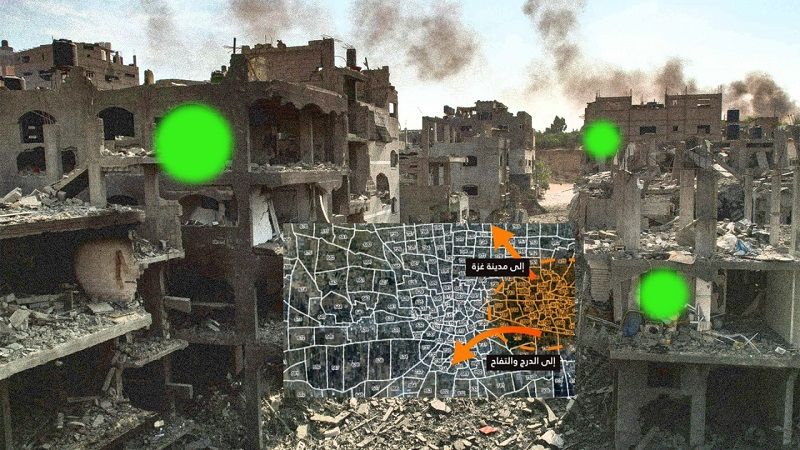

Reports reveal Israel's military used AI systems, notably 'Lavender', to generate kill lists and target suspected militants in Gaza with minimal human oversight. This AI-driven process led to airstrikes causing high civilian casualties, including entire families, raising global concern over human rights violations and the ethical use of AI in warfare. [AI generated]
