The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

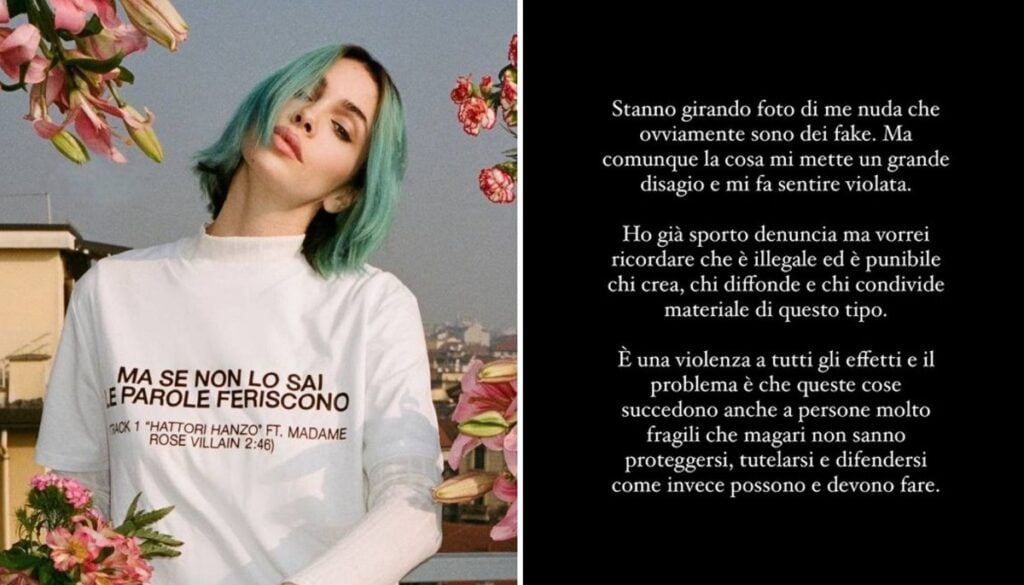

Italian singer Rose Villain became the victim of AI-generated deepfake nude images, which were widely circulated online without her consent. The incident caused her significant distress and led her to file a police report, highlighting the psychological harm and violation of rights caused by the malicious use of AI technology. [AI generated]