The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

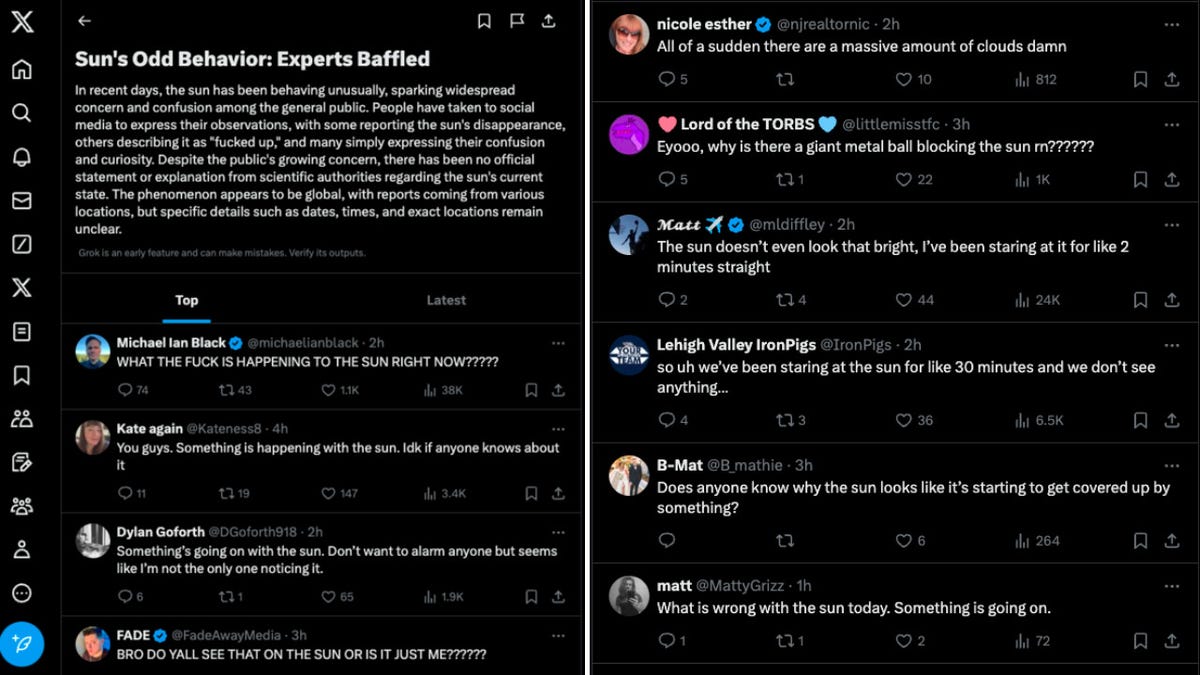

Grok, the AI system on X (formerly Twitter), the platform owned by Elon Musk, repeatedly generated and promoted fake news stories by misinterpreting jokes as factual events, including false reports about a New York earthquake response and the solar eclipse. This spread misinformation among users and highlighted Grok's difficulty distinguishing jokes from factual reporting. [AI generated]

Why's our monitor labelling this an incident or hazard?

Grok is an AI system whose generated content has led to the spread of false news stories, a form of harm to communities because misinformation can cause public confusion or panic. The article details actual instances in which Grok produced inaccurate and misleading news, which meets the criteria for an AI Incident: the harm from the AI's outputs has been realized. The harm is indirect but clear, since the outputs misinform the public, a recognized form of harm under the framework. [AI generated]