The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

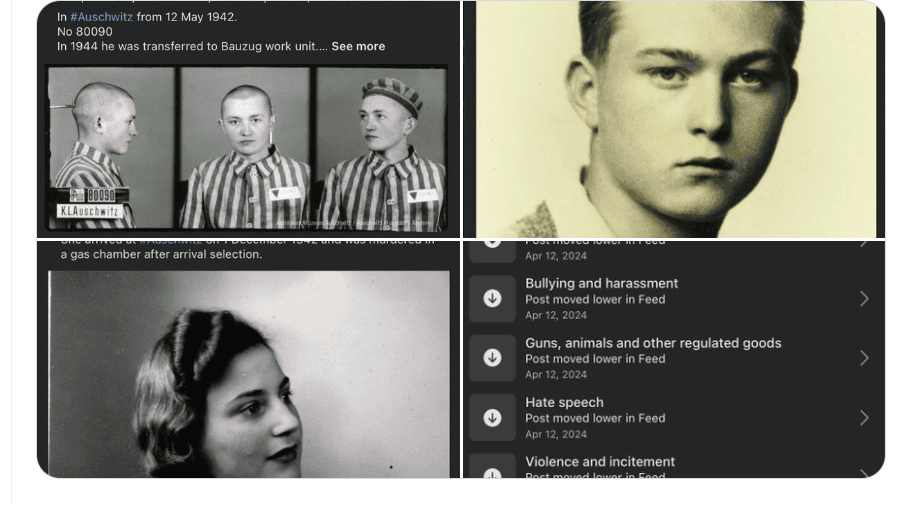

Facebook's AI-driven content moderation system mistakenly flagged and removed 21 posts by the Auschwitz Museum honoring Holocaust victims, citing reasons such as nudity and hate speech. The incident sparked public and governmental outcry and highlighted how algorithmic errors can cause harm by suppressing important historical memory and violating community rights.[AI generated]