The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

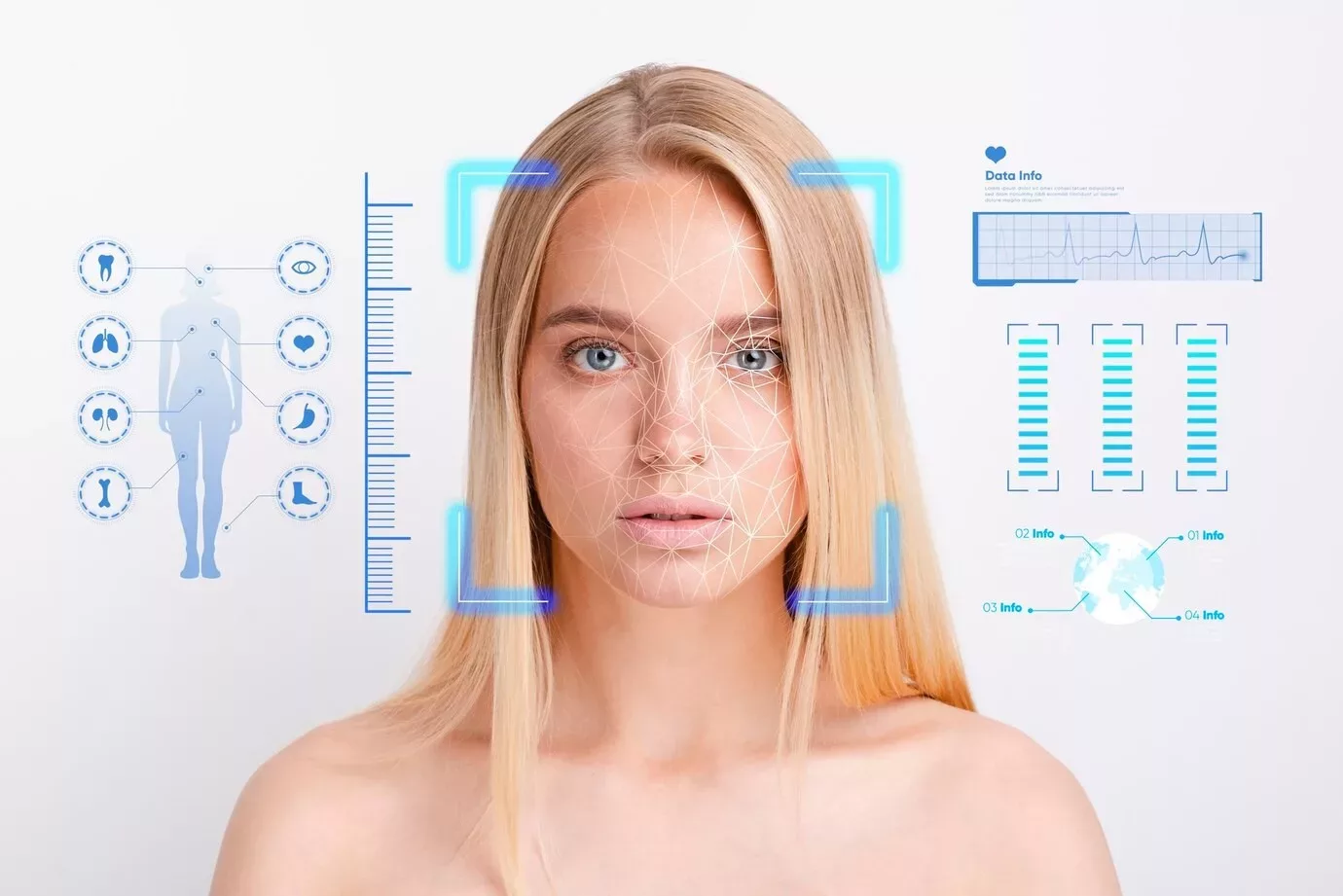

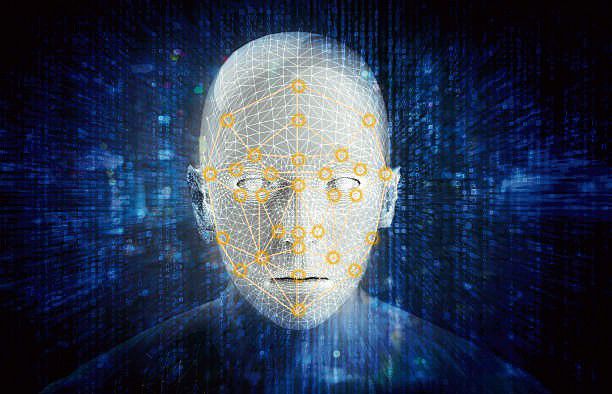

The UK government has introduced a new law making it a criminal offense to use AI to create sexually explicit deepfake images without consent. Offenders face unlimited fines, criminal records, and possible jail time. The law is a response to the growing harm and distress caused by non-consensual AI-generated sexual content. [AI generated]
