The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

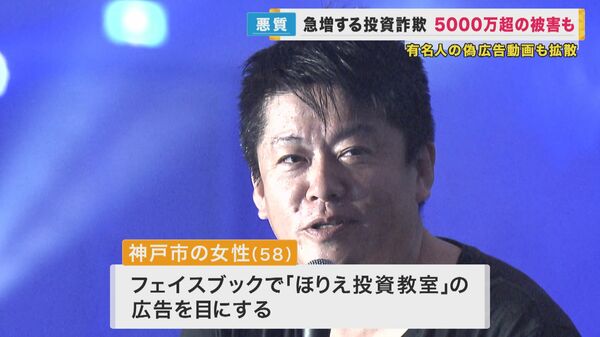

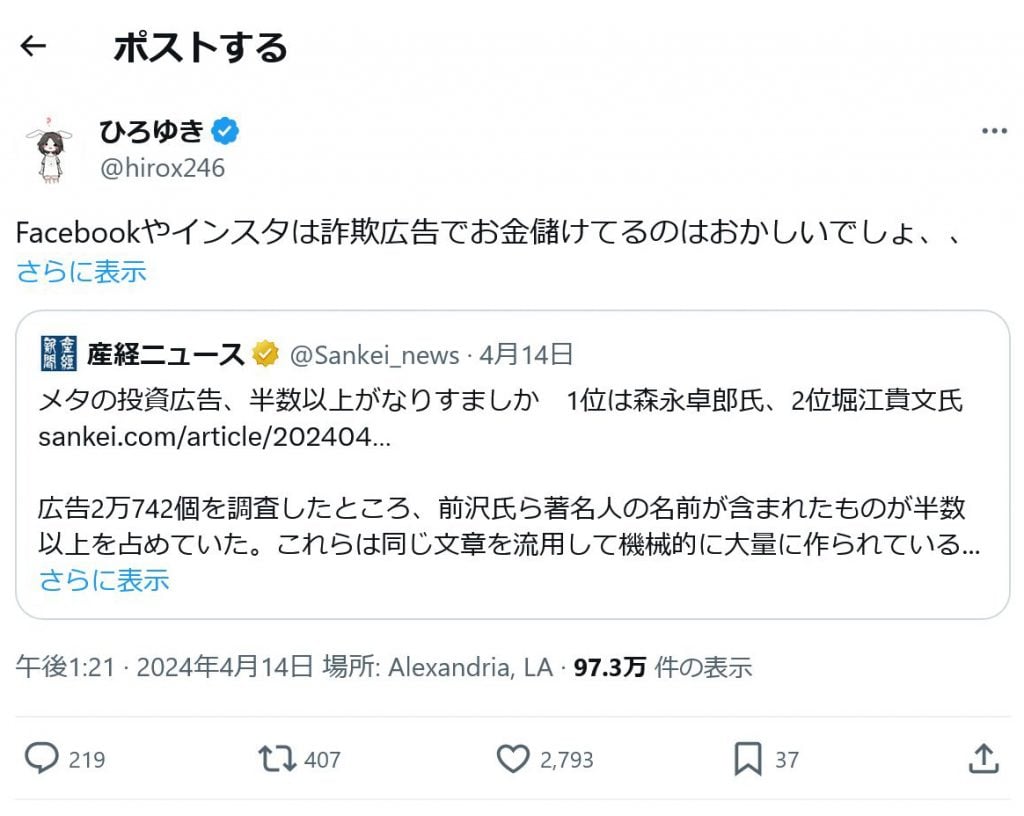

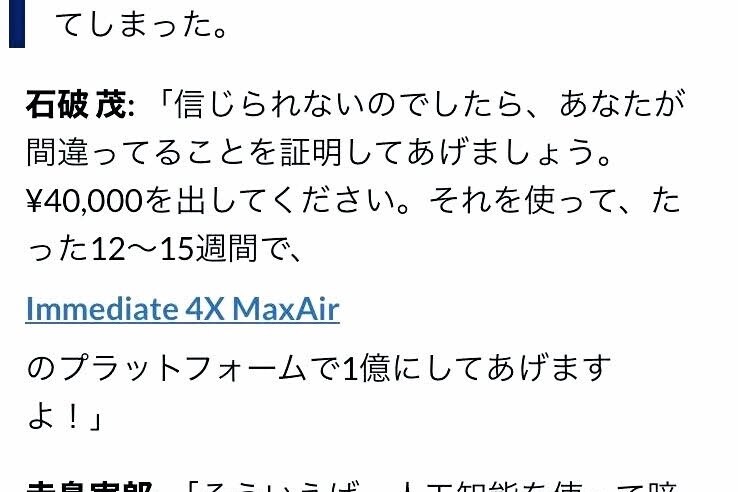

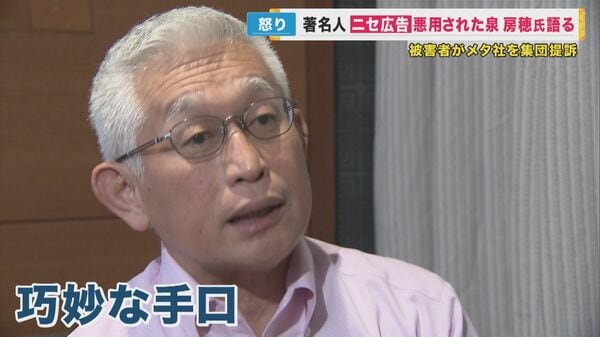

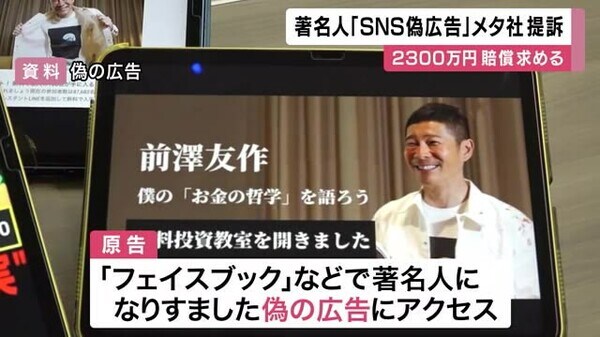

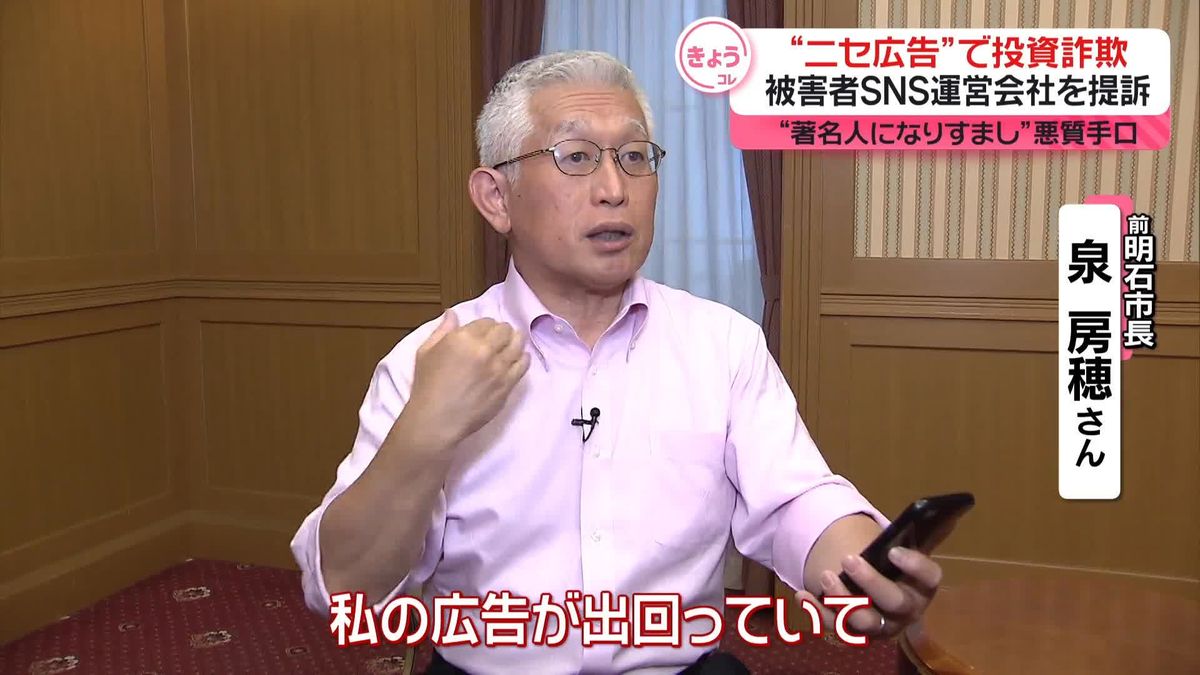

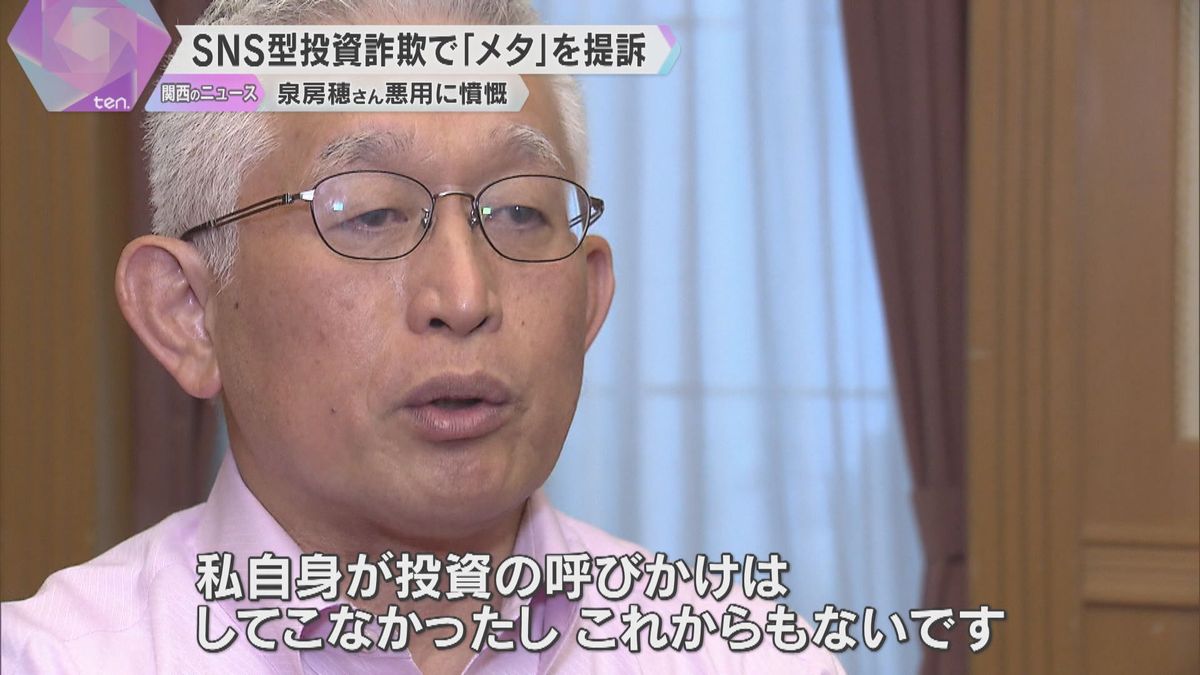

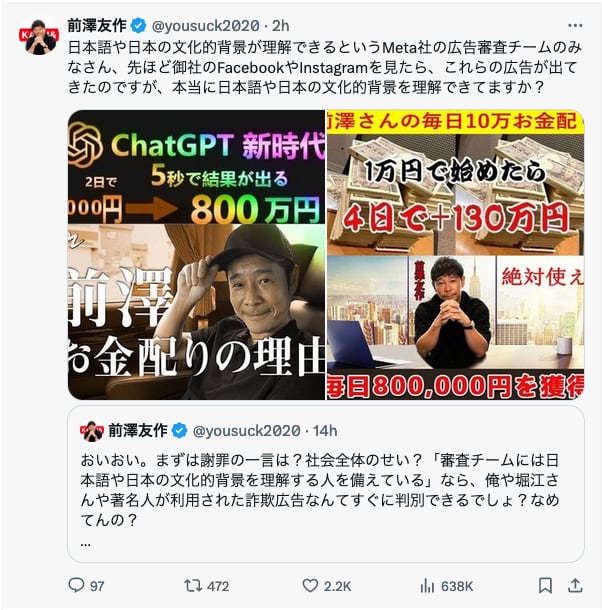

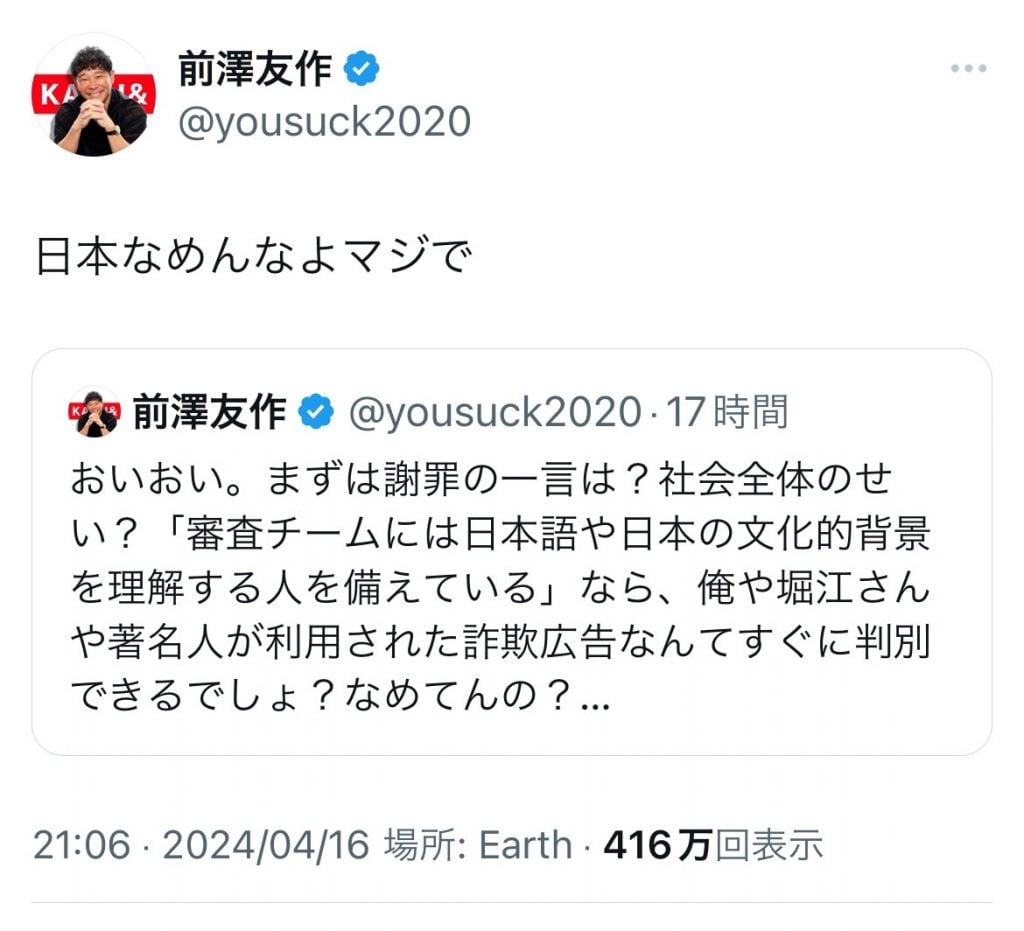

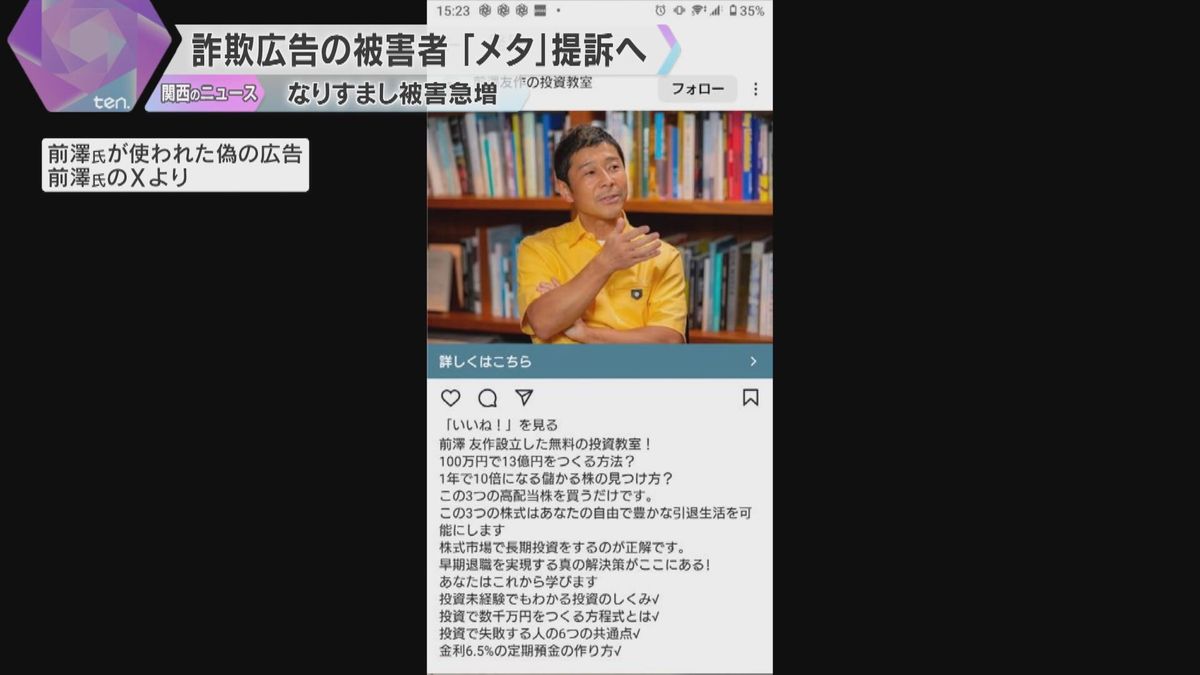

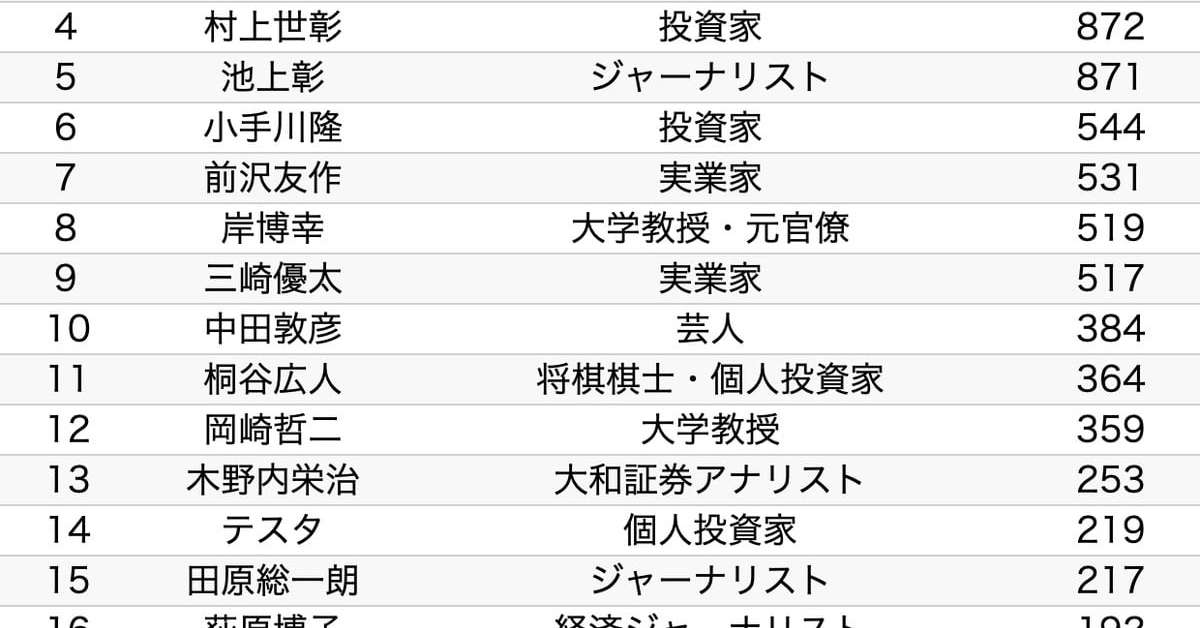

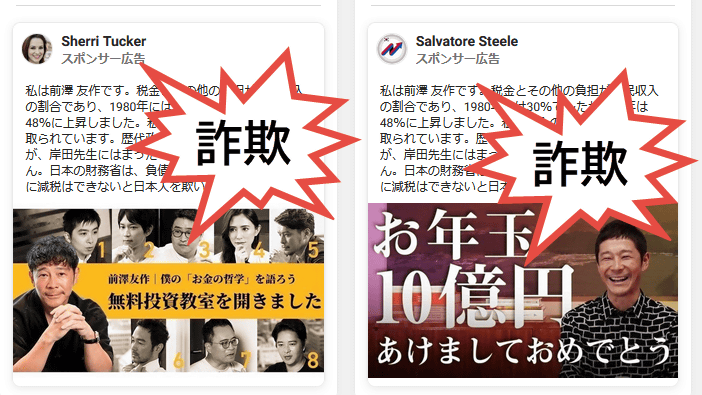

Meta's AI-driven ad platform on Facebook enabled fraudulent ads impersonating celebrities, leading to financial losses for users. Four victims are suing Meta's Japan unit for damages. Japanese politicians have demanded that Meta consider halting ads, criticizing the company's insufficient response to the ongoing scam ad problem. [AI generated]
