The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

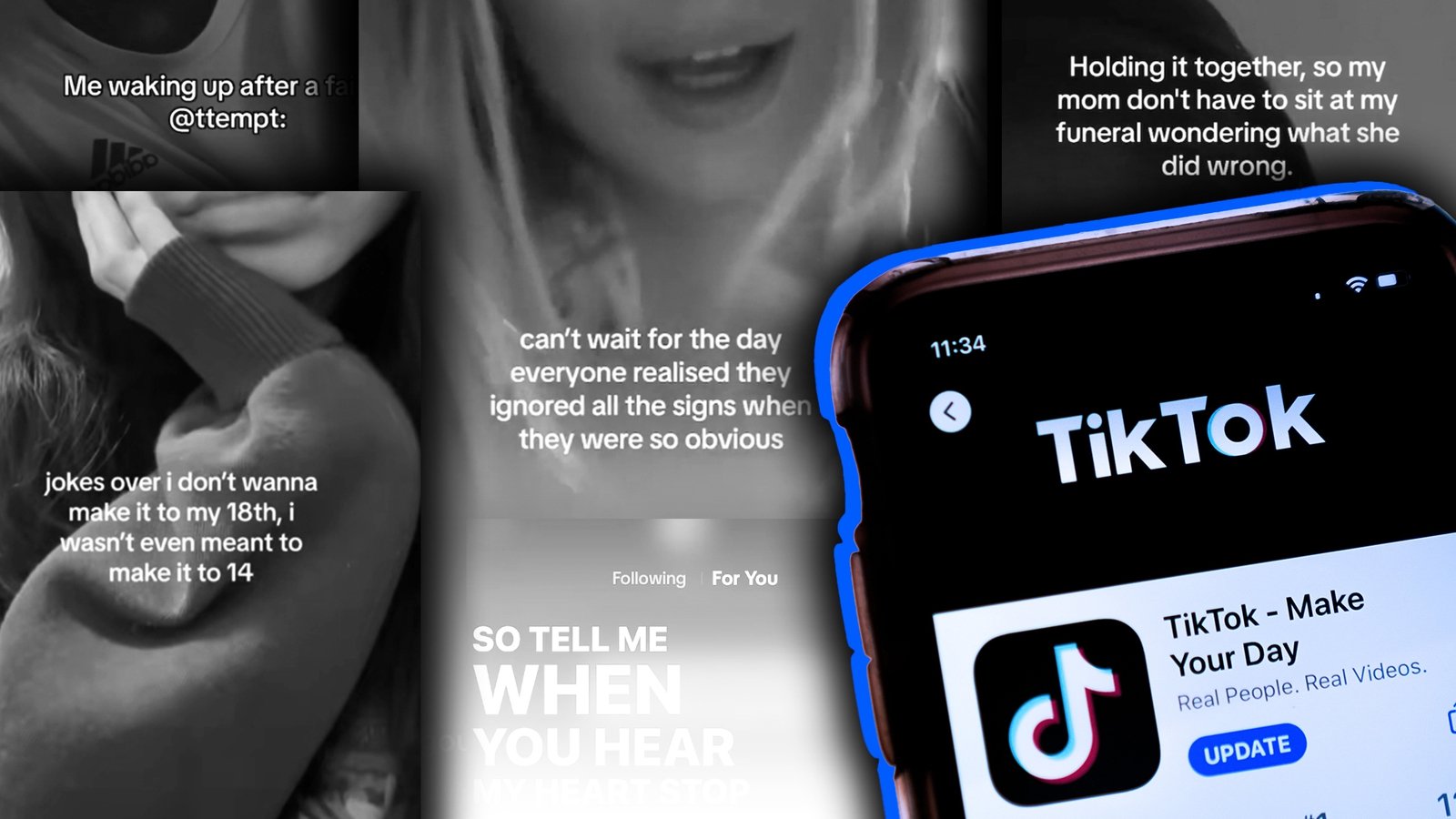

An RTÉ Prime Time investigation found that TikTok's AI-driven recommendation system exposed accounts set up as 13-year-olds to videos about self-harm and suicide within minutes. The incident has led to real harm: more than 140 children have contacted support services about self-harm since February, raising concerns about the platform's impact on youth mental health. [AI generated]
