The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

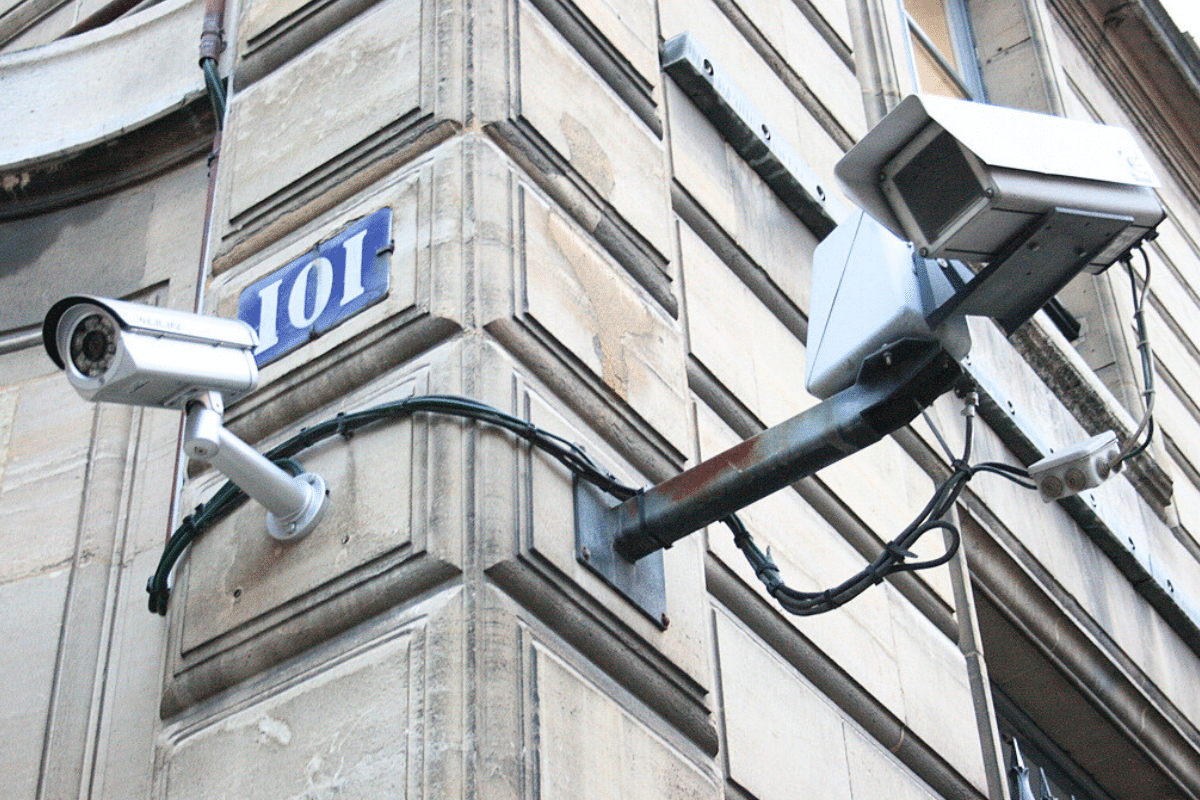

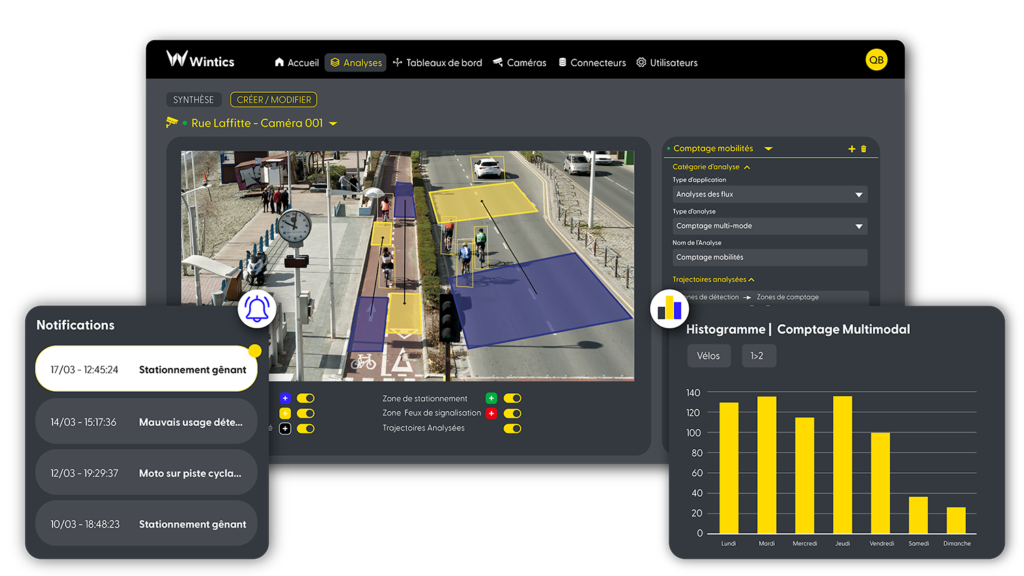

Paris police authorized SNCF and RATP to conduct large-scale trials of AI-powered video surveillance during major events, including a football match and a concert. The Cityvision AI system analyzes live camera feeds for security threats. While no harm has occurred, the deployment raises concerns about privacy and potential rights violations. [AI generated]