The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

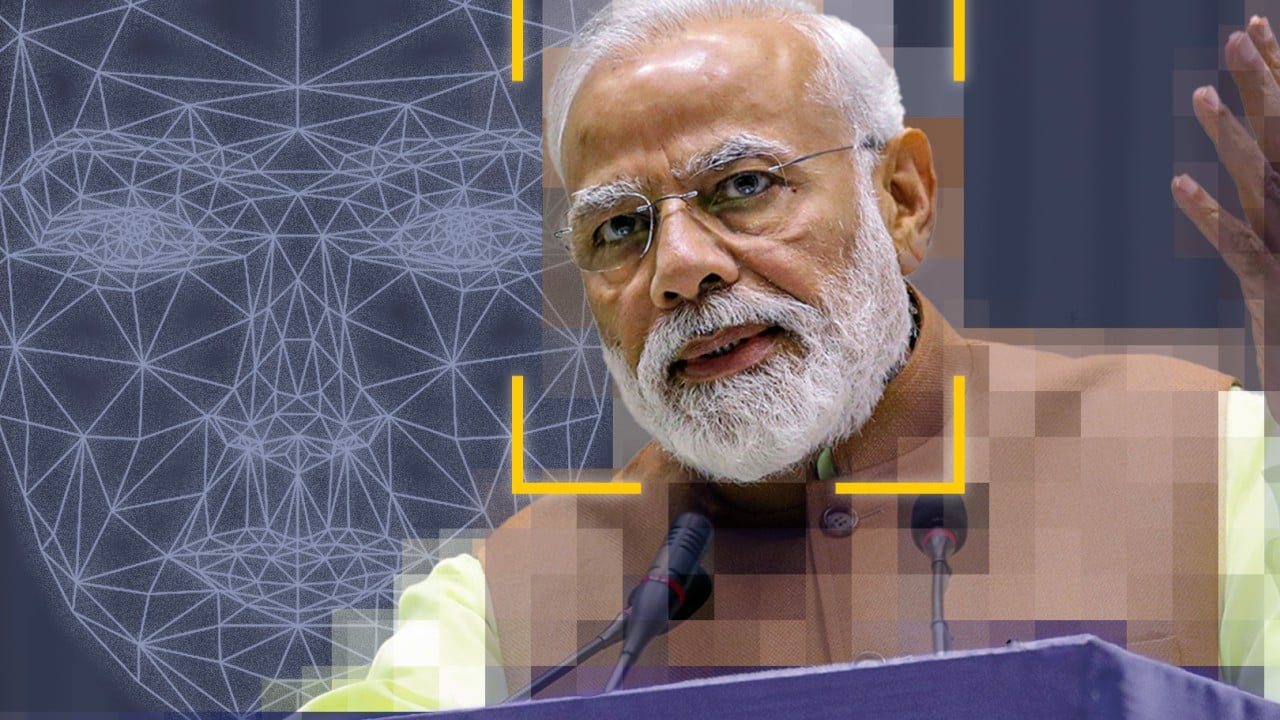

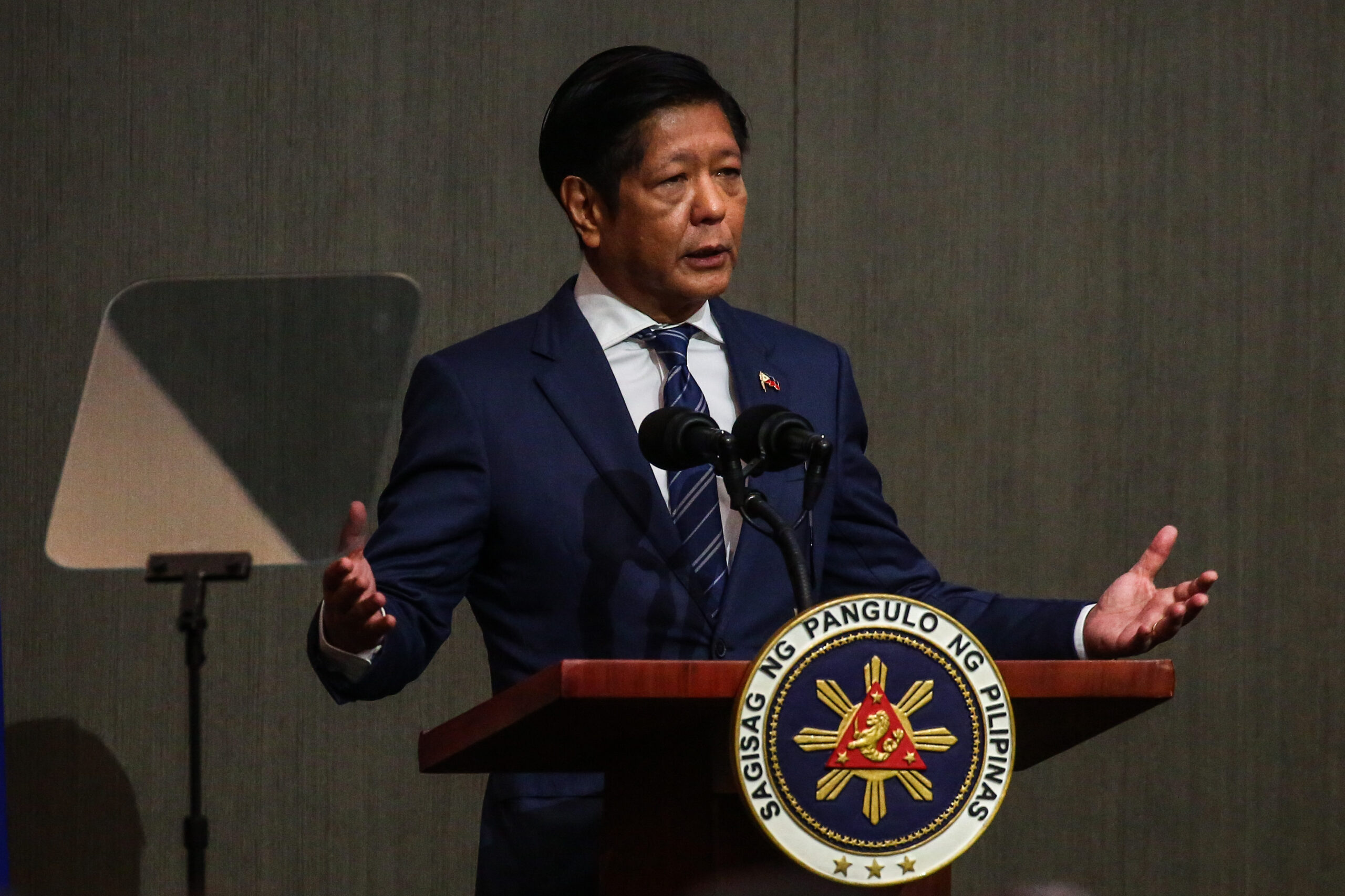

AI-generated deepfake audio and video falsely depicting President Ferdinand Marcos Jr. ordering military action against China circulated widely online, causing alarm over misinformation and potential foreign policy disruption. The Philippine government has launched an investigation and pledged legal action against those responsible for creating and spreading the manipulated AI content.[AI generated]