The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

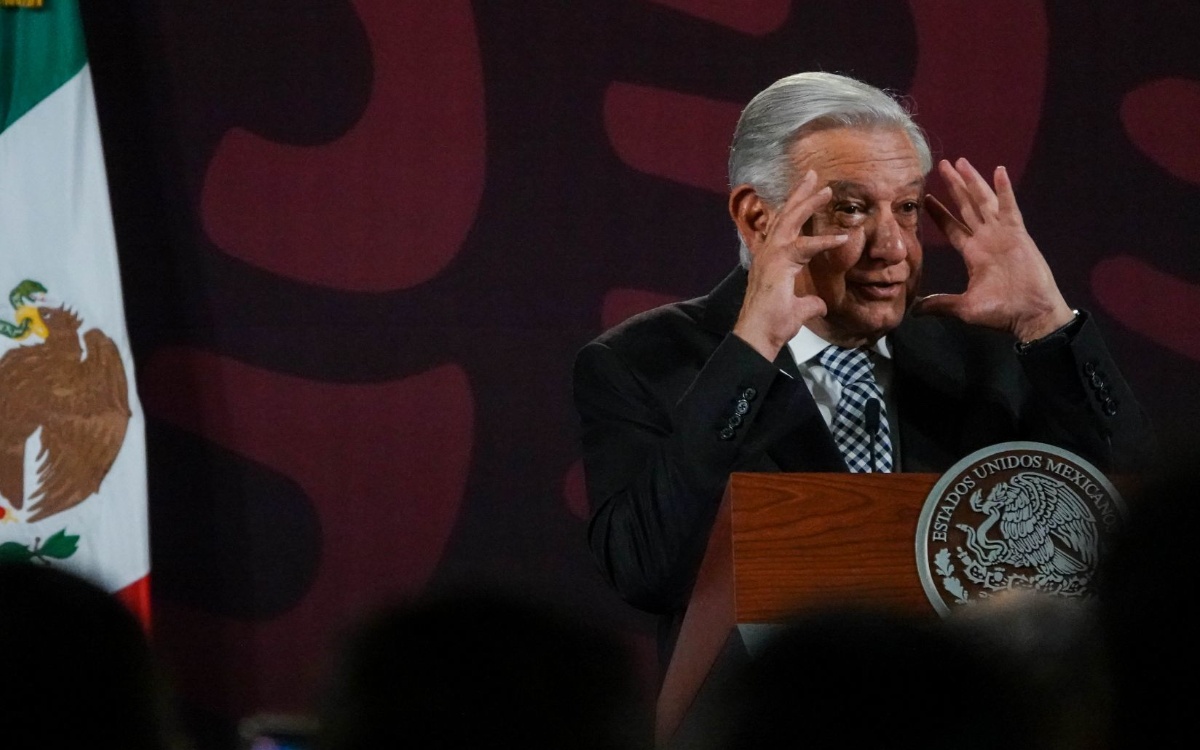

AI-generated deepfake videos and audio impersonating President Andrés Manuel López Obrador have been circulating online, promoting fraudulent Pemex investment schemes. The Mexican government and financial authorities have warned the public about these scams, highlighting the risks of AI-driven misinformation and the financial harm caused by such deceptive content.[AI generated]
