The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

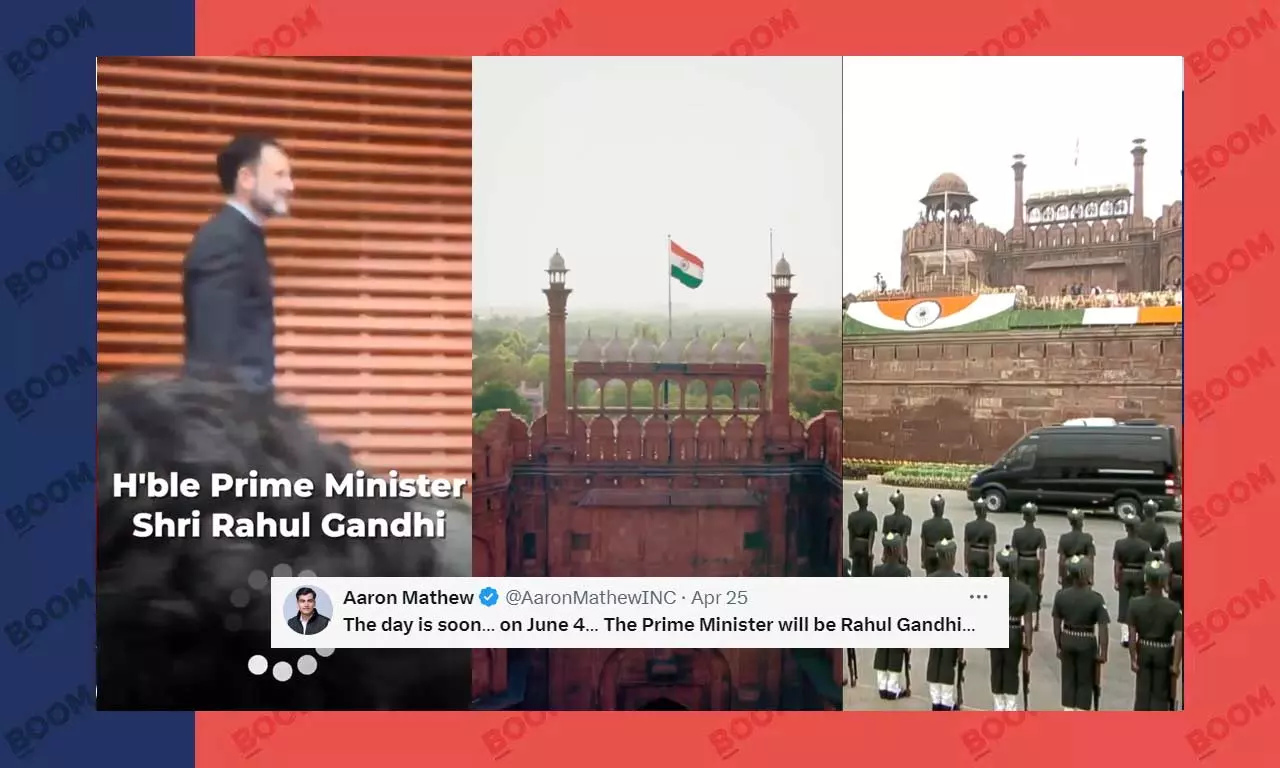

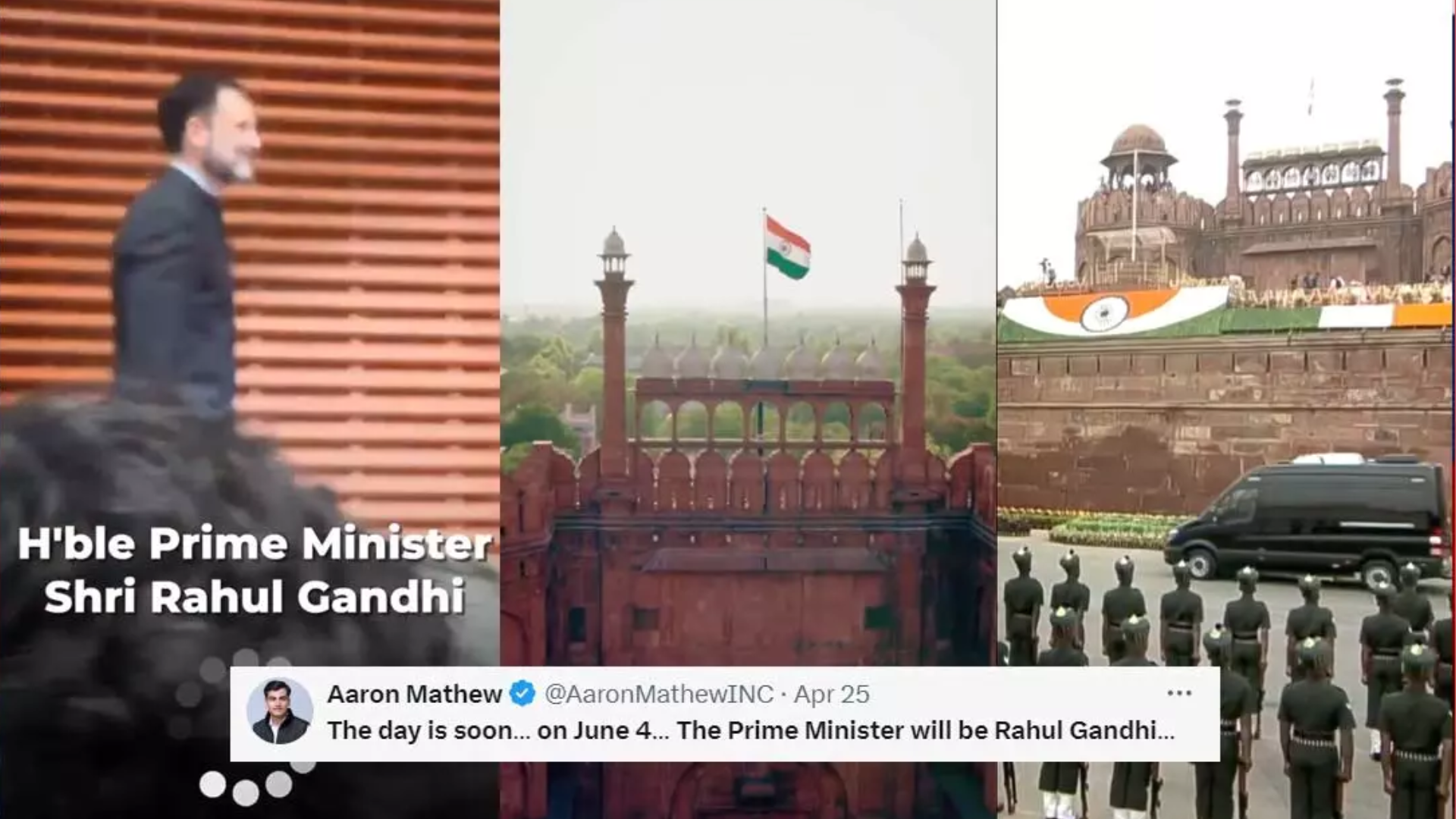

An AI-generated voice clone of Rahul Gandhi, falsely depicting him taking the oath of office as Prime Minister, went viral on social media during the 2024 Lok Sabha elections. Fact-checkers confirmed that the audio was AI-generated, raising concerns that deepfake-driven misinformation could sway public opinion and undermine election integrity.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves the use of an AI system (AI voice cloning) to generate manipulated audio content. The AI-generated deepfake was disseminated on social media, where it could mislead the public and disrupt the democratic process, constituting harm to communities. Because the use of the AI system directly led to misinformation with potential societal harm, the event qualifies as an AI Incident under the framework.[AI generated]