The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

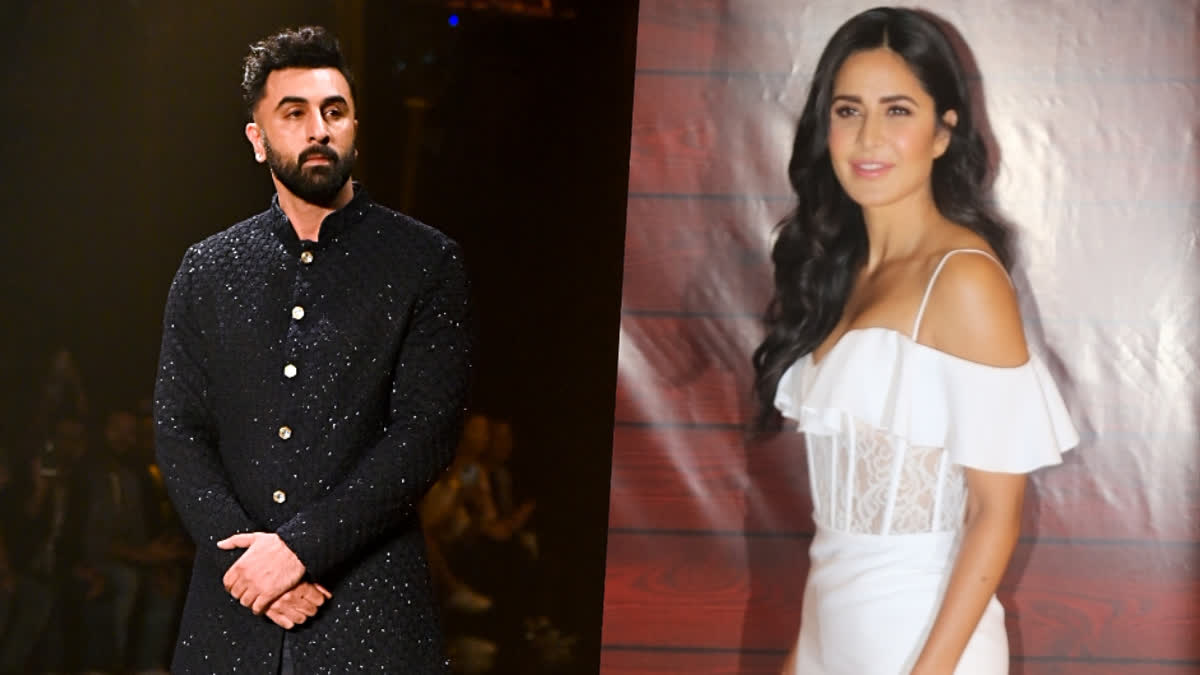

A deepfake video of Bollywood actress Katrina Kaif speaking French has gone viral, raising concerns about AI misuse. The video, which originates from a 2017 event, uses an AI-generated French voiceover that misleads viewers and potentially violates privacy and intellectual property rights. The incident highlights the growing problem of deepfake technology in media. [AI generated]
