The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

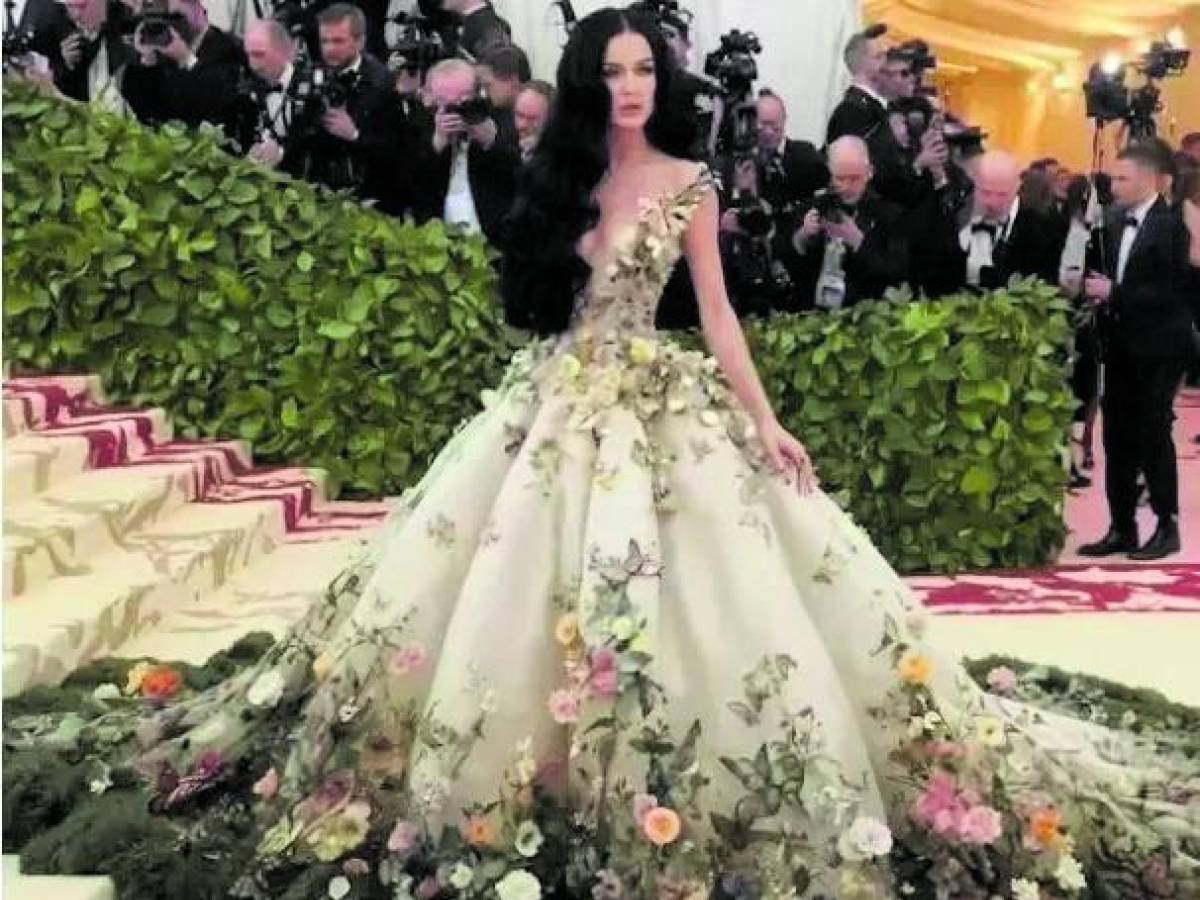

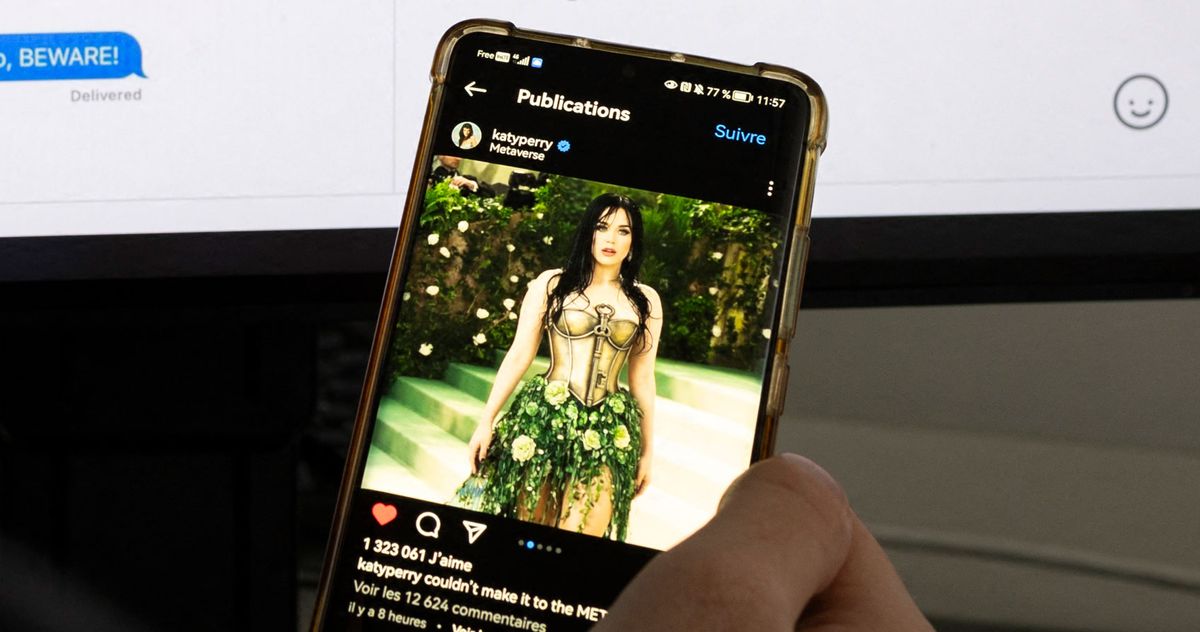

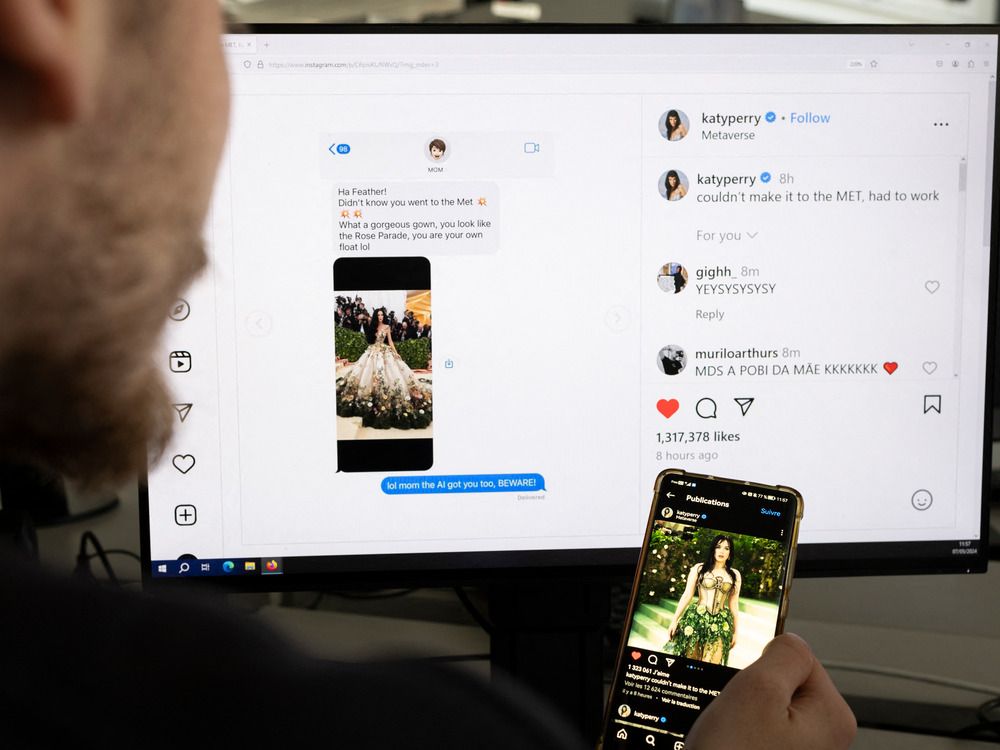

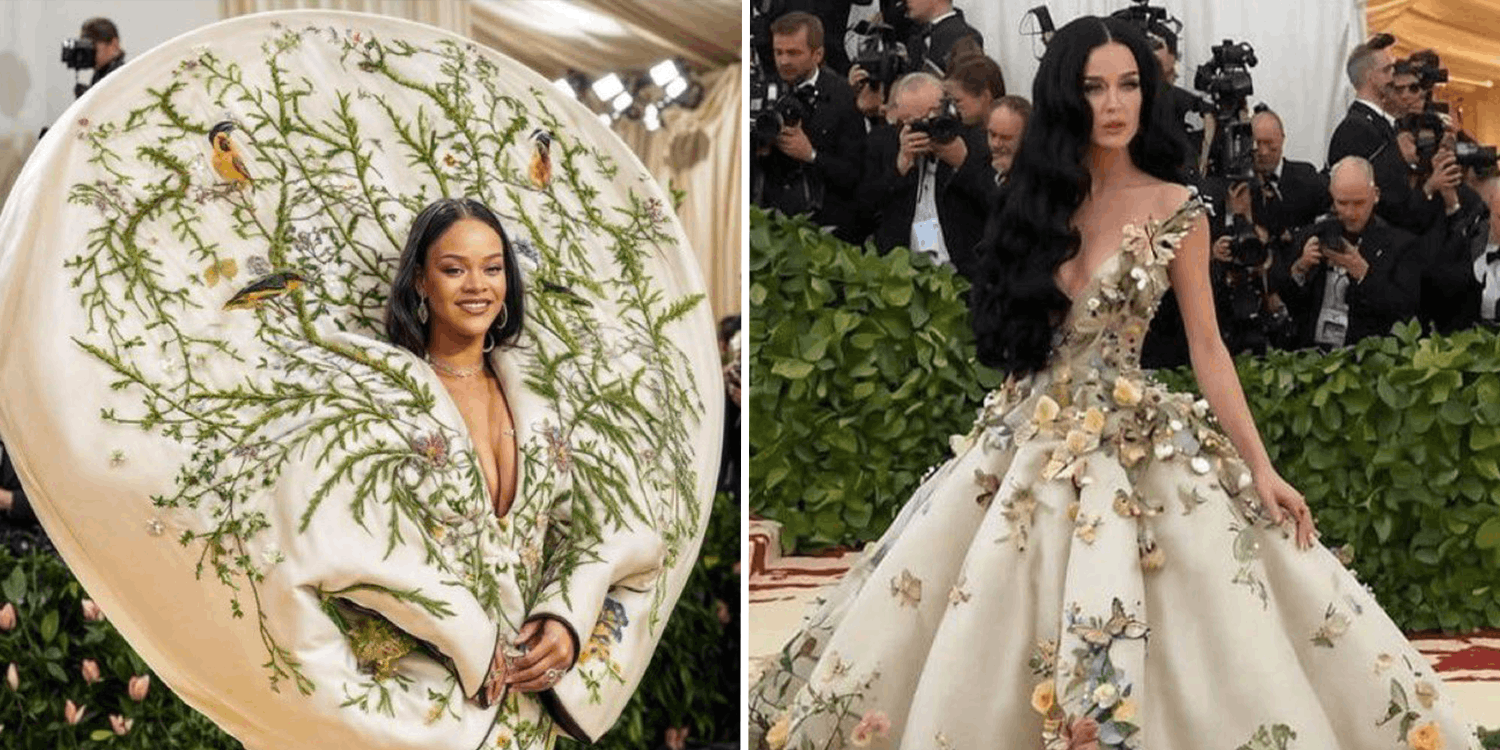

AI-generated deepfake images showing Katy Perry at the Met Gala misled many people, including her mother, even though the singer did not attend the event. The images circulated widely on social media and deceived numerous internet users, raising privacy and intellectual property concerns. [AI generated]
