The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

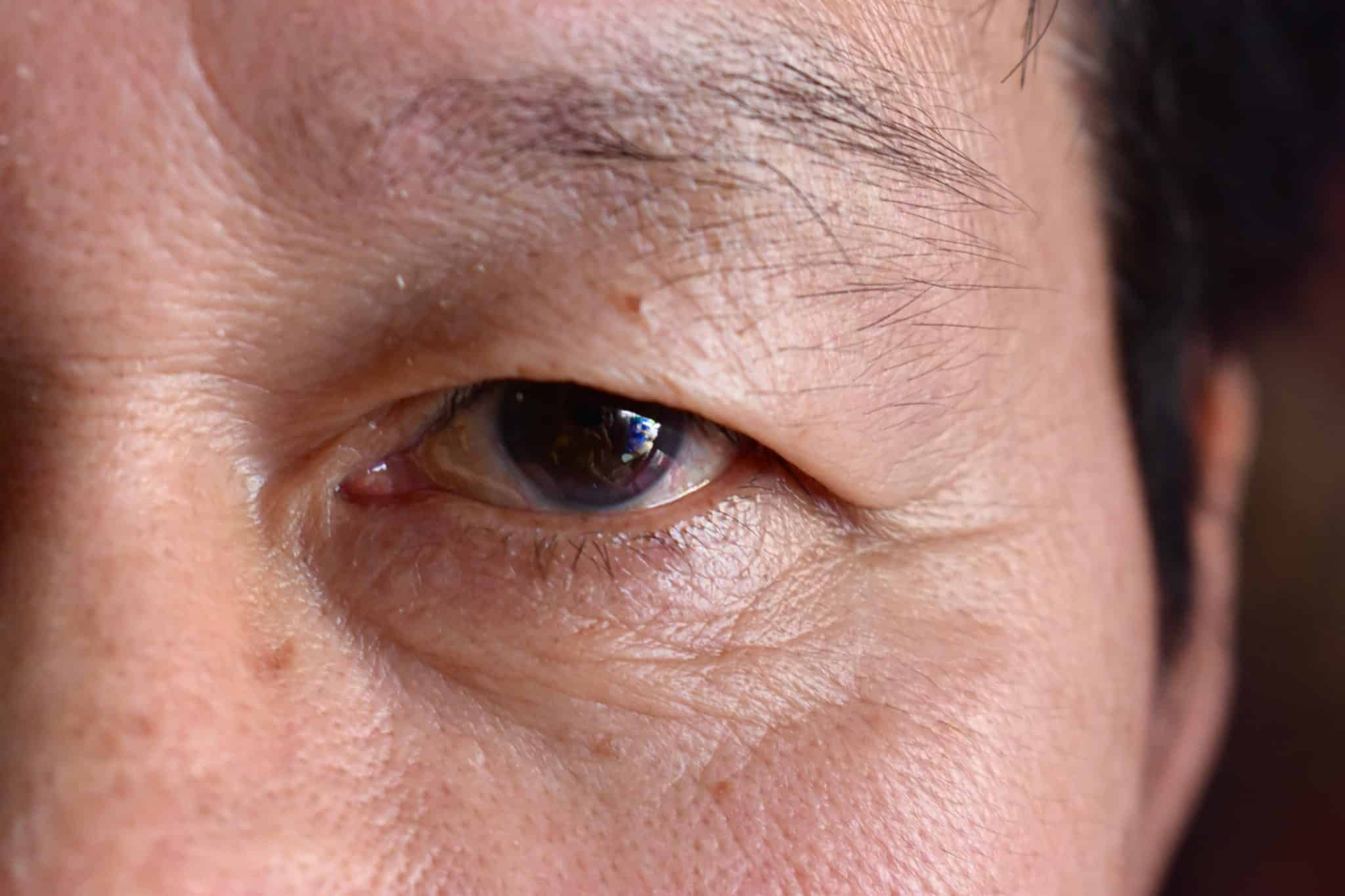

Thailand's government is collecting iris and facial biometrics from Myanmar nationals using AI-enabled systems to streamline healthcare services. More than 10,000 individuals have been affected, prompting concerns among rights groups about potential privacy violations, human rights risks, and the misuse of, or security vulnerabilities affecting, sensitive biometric data. [AI generated]