The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

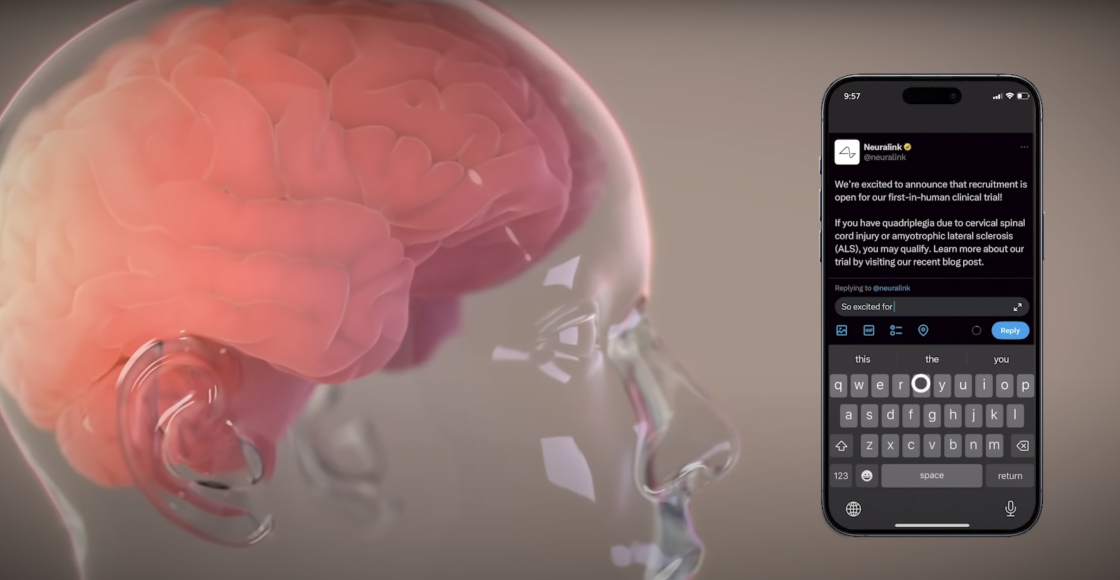

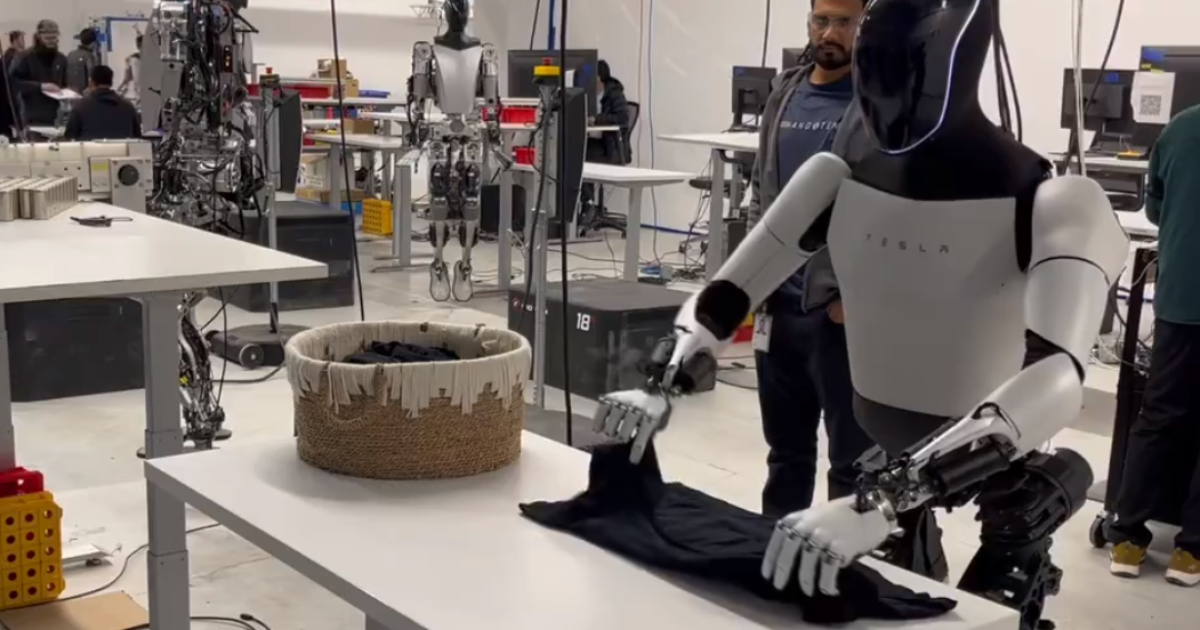

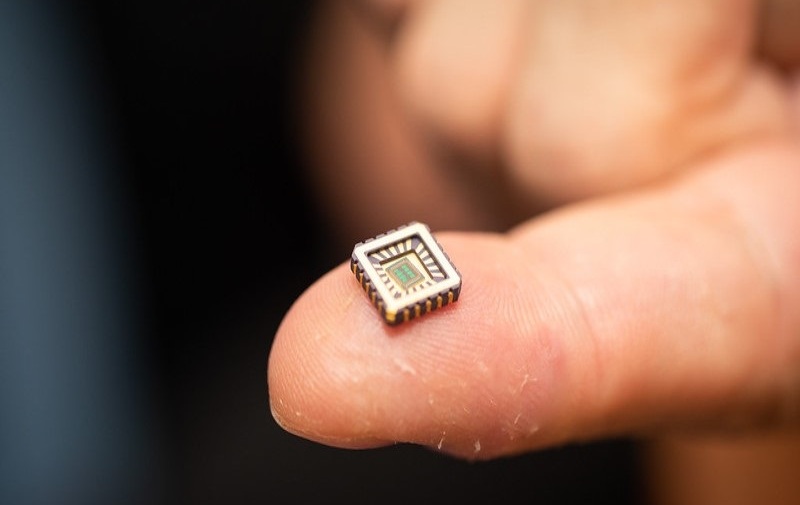

Elon Musk's Neuralink has faced issues with its brain implant, as wires in the device reportedly became loose in its first human trial. Despite knowing about this design flaw from animal testing, Neuralink proceeded with the trial. The FDA was aware of the issue but approved the trial, raising safety concerns. [AI generated]
