The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

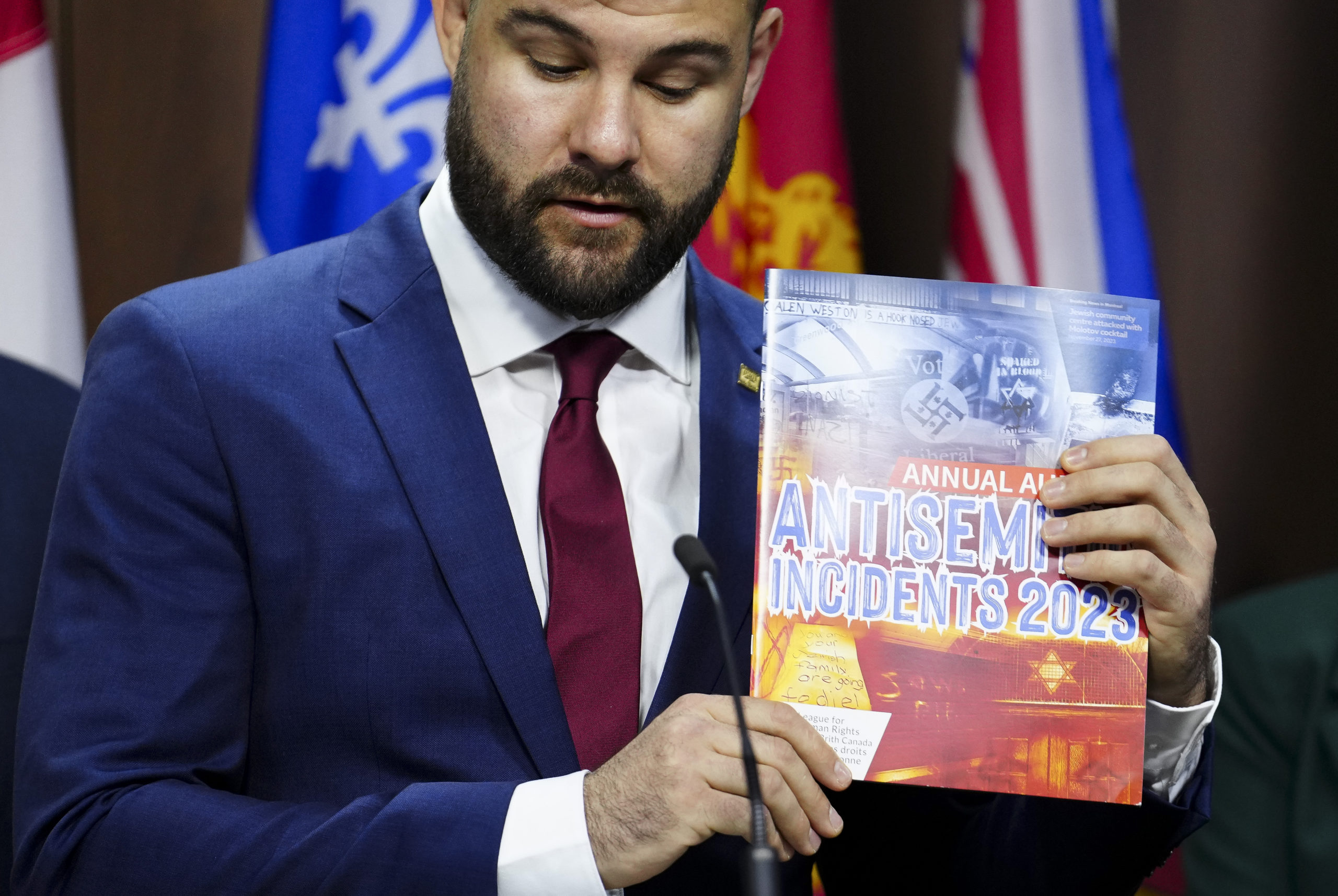

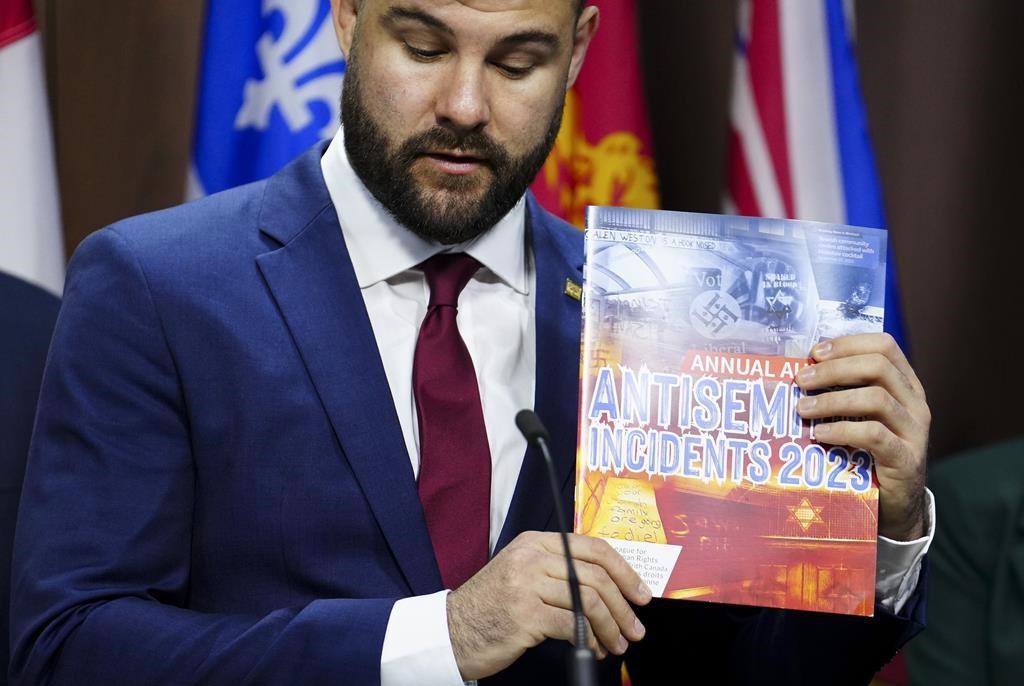

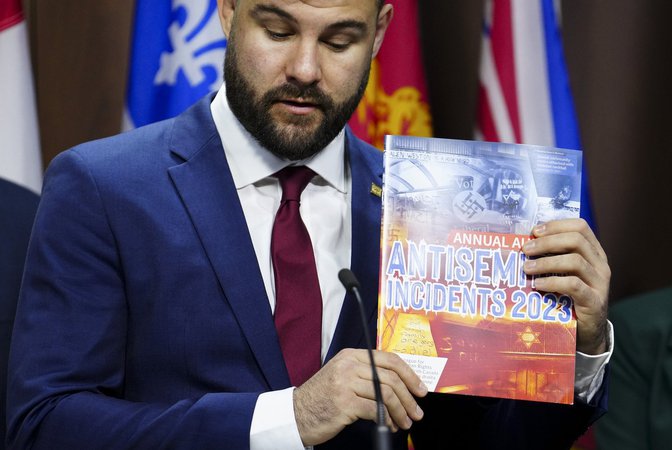

Experts and researchers are increasingly concerned about the rise of AI-generated hate content, including altered historical videos and racist imagery. Hate groups, such as white supremacists, are early adopters of these technologies, amplifying antisemitic, Islamophobic, and racist messages. A viral AI-altered video of Hitler delivering antisemitic remarks exemplifies this troubling trend. [AI generated]