The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

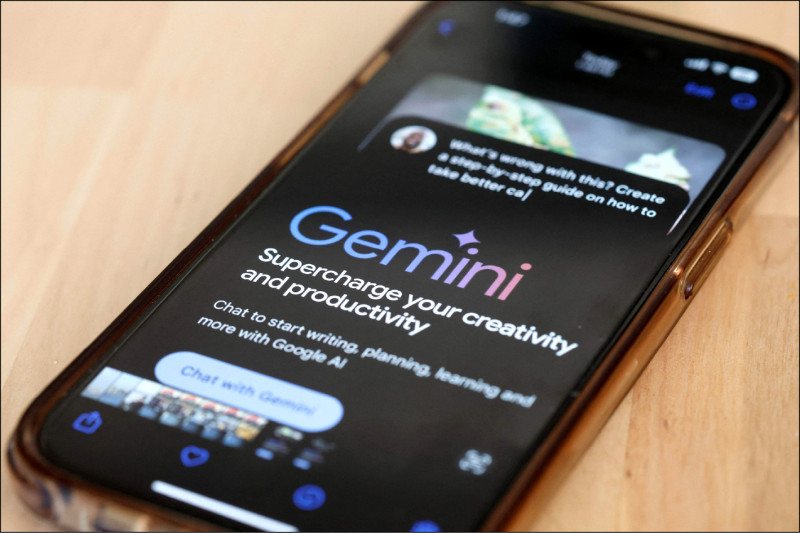

Google’s generative AI model Gemini, when tested in Simplified Chinese, praised Xi Jinping as an “outstanding leader,” echoed Chinese Communist Party propaganda on Taiwan, and refused to address human rights in Xinjiang. U.S. legislators warn that this pro-Beijing bias could spread misinformation and urge Google to filter its training data more robustly. [AI generated]