The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

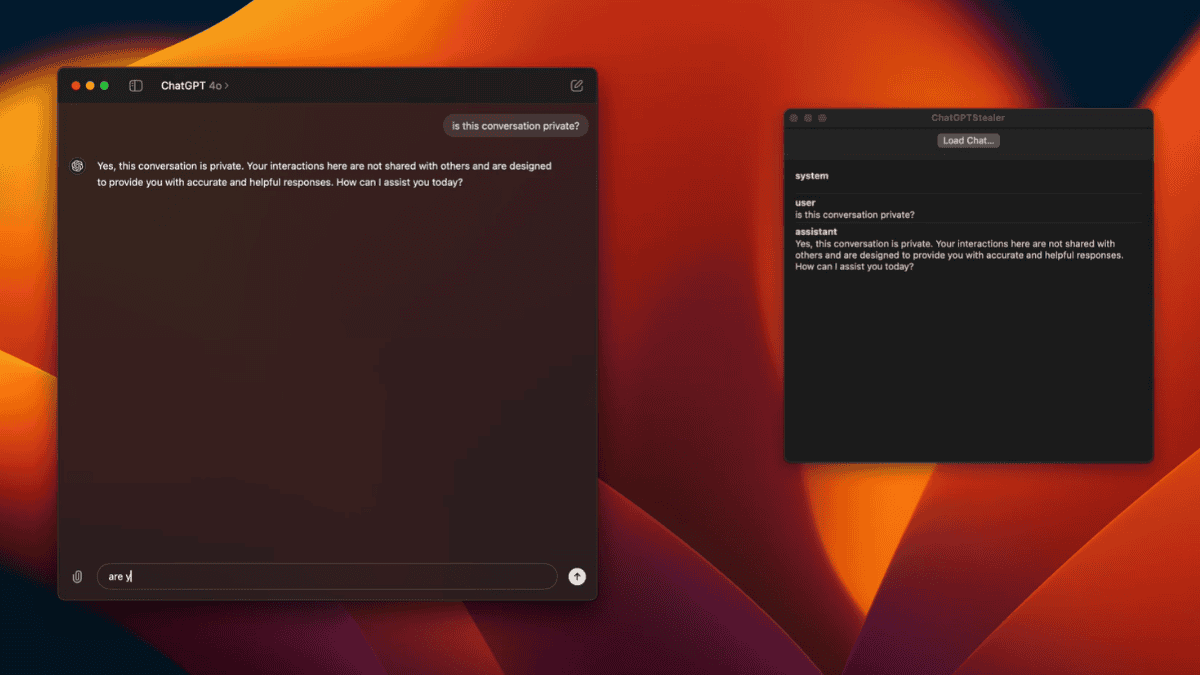

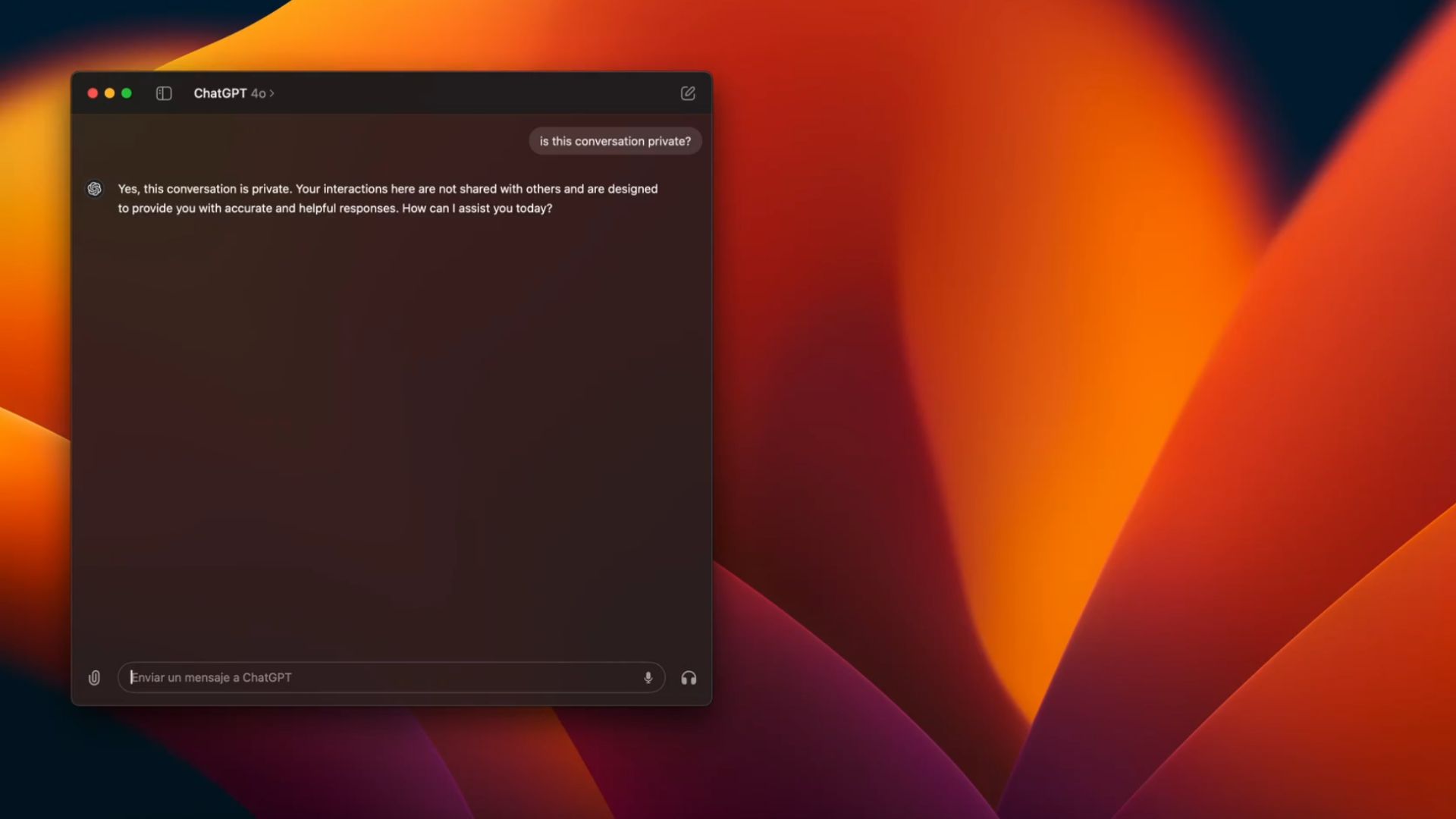

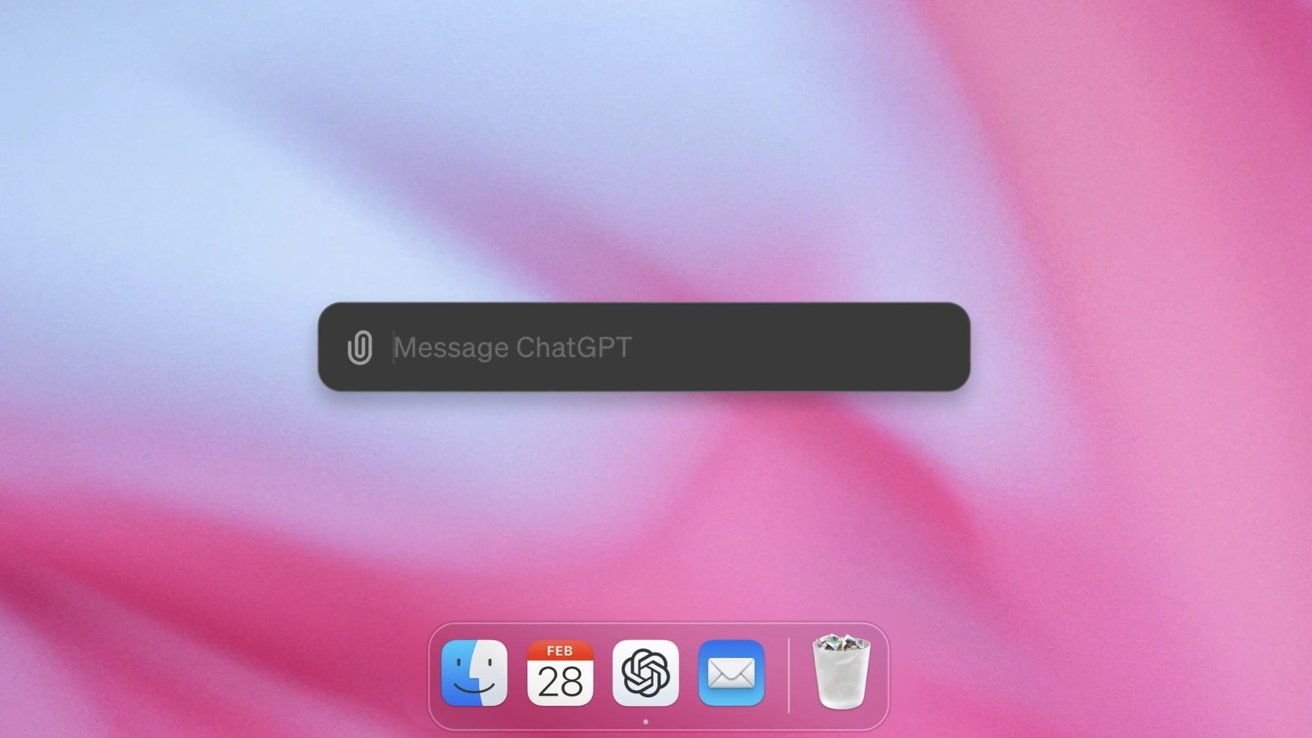

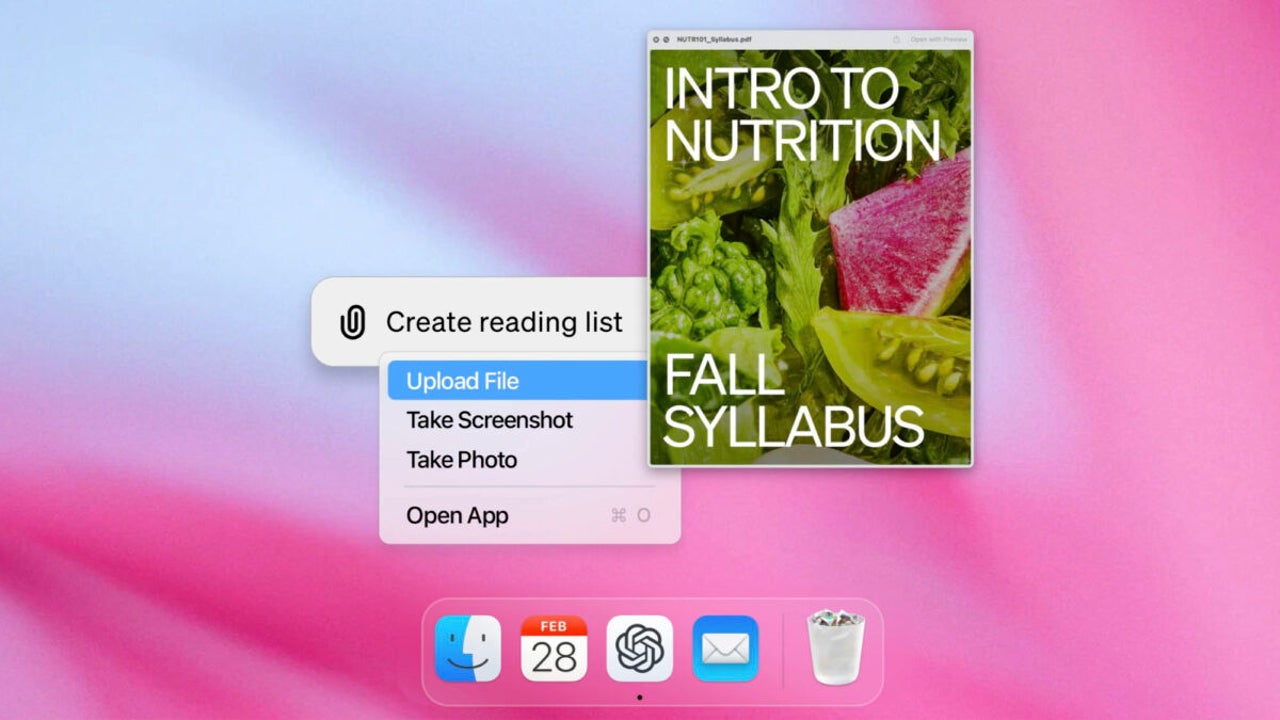

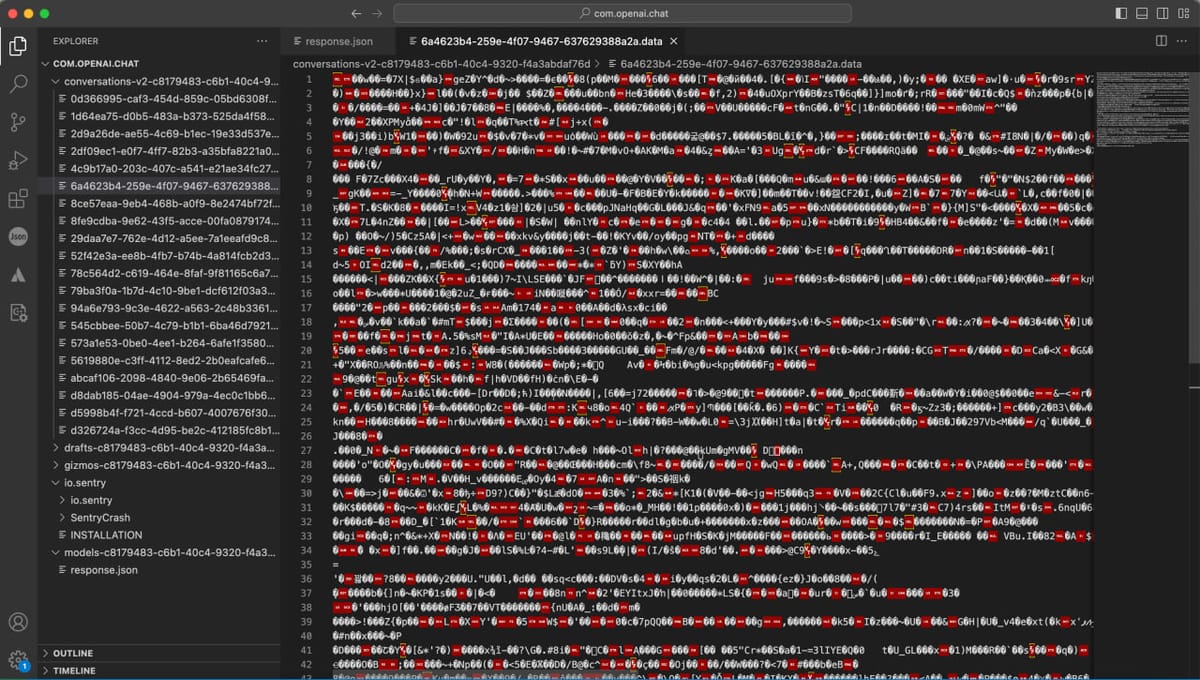

A vulnerability in the ChatGPT app for macOS, discovered by developer Pedro José Pereira Vieito, exposed user conversations by storing them on disk as unencrypted plain text. This posed a significant privacy risk, since anything able to read those files could read the conversations. OpenAI quickly addressed the issue by releasing an update that encrypts stored conversations, improving user data protection. [AI generated]
