The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

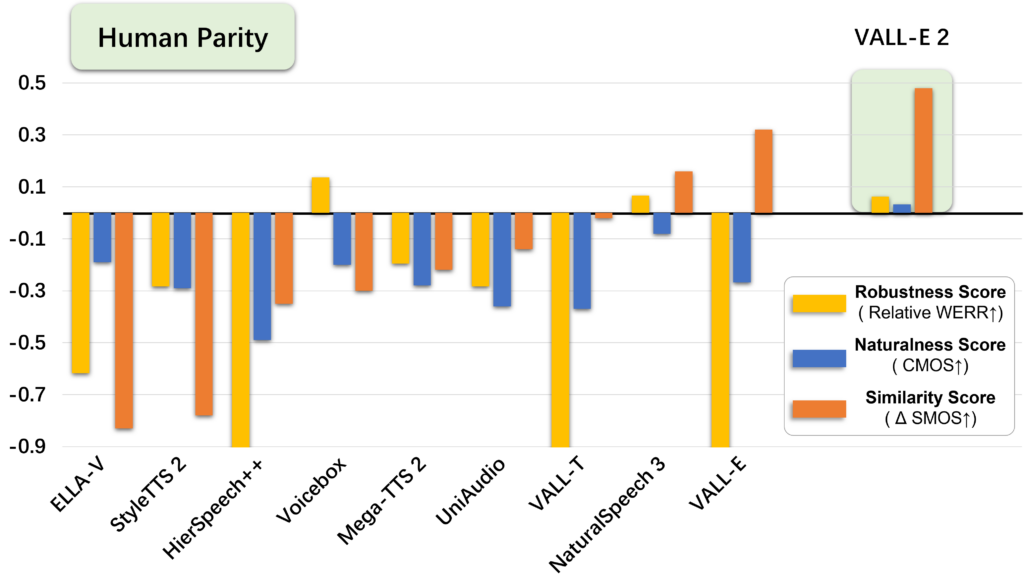

Microsoft has developed VALL-E 2, an AI system capable of perfectly imitating human voices from just a few seconds of audio. The technology, which also captures emotional tone, raises concerns about potential misuse such as identity theft or fraud, and its developers consider it too dangerous for public release. [AI generated]
