The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

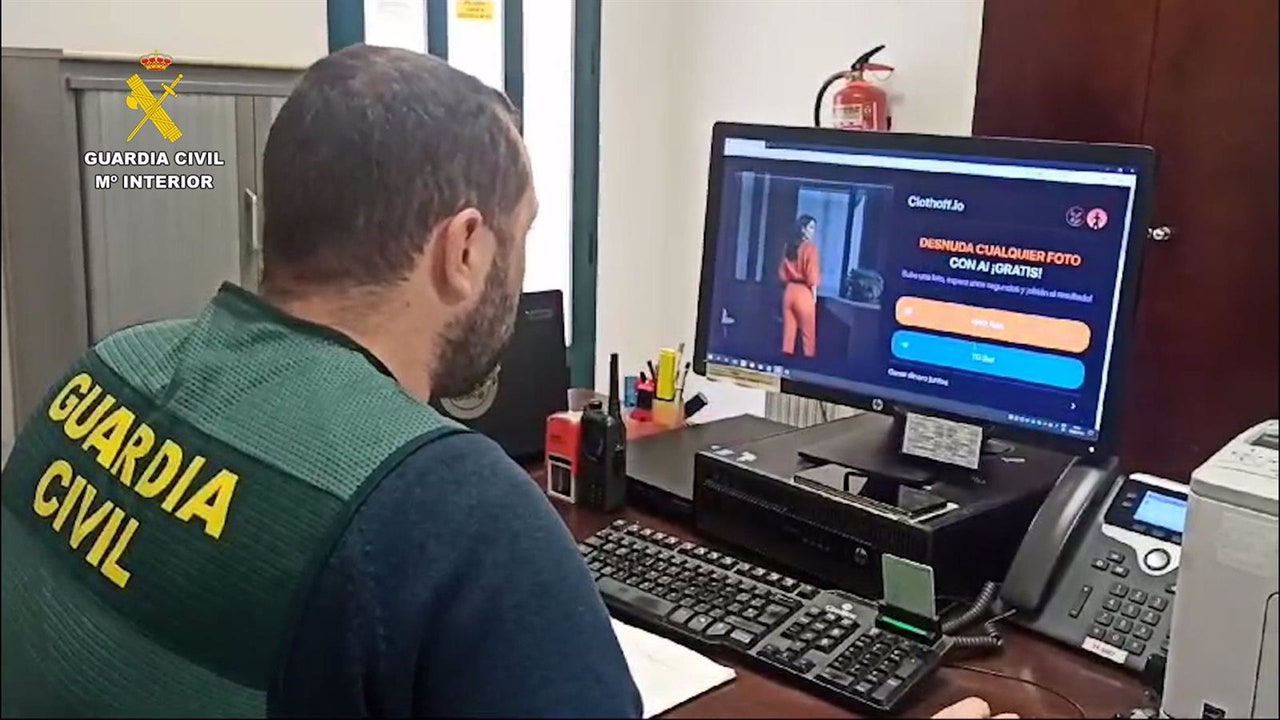

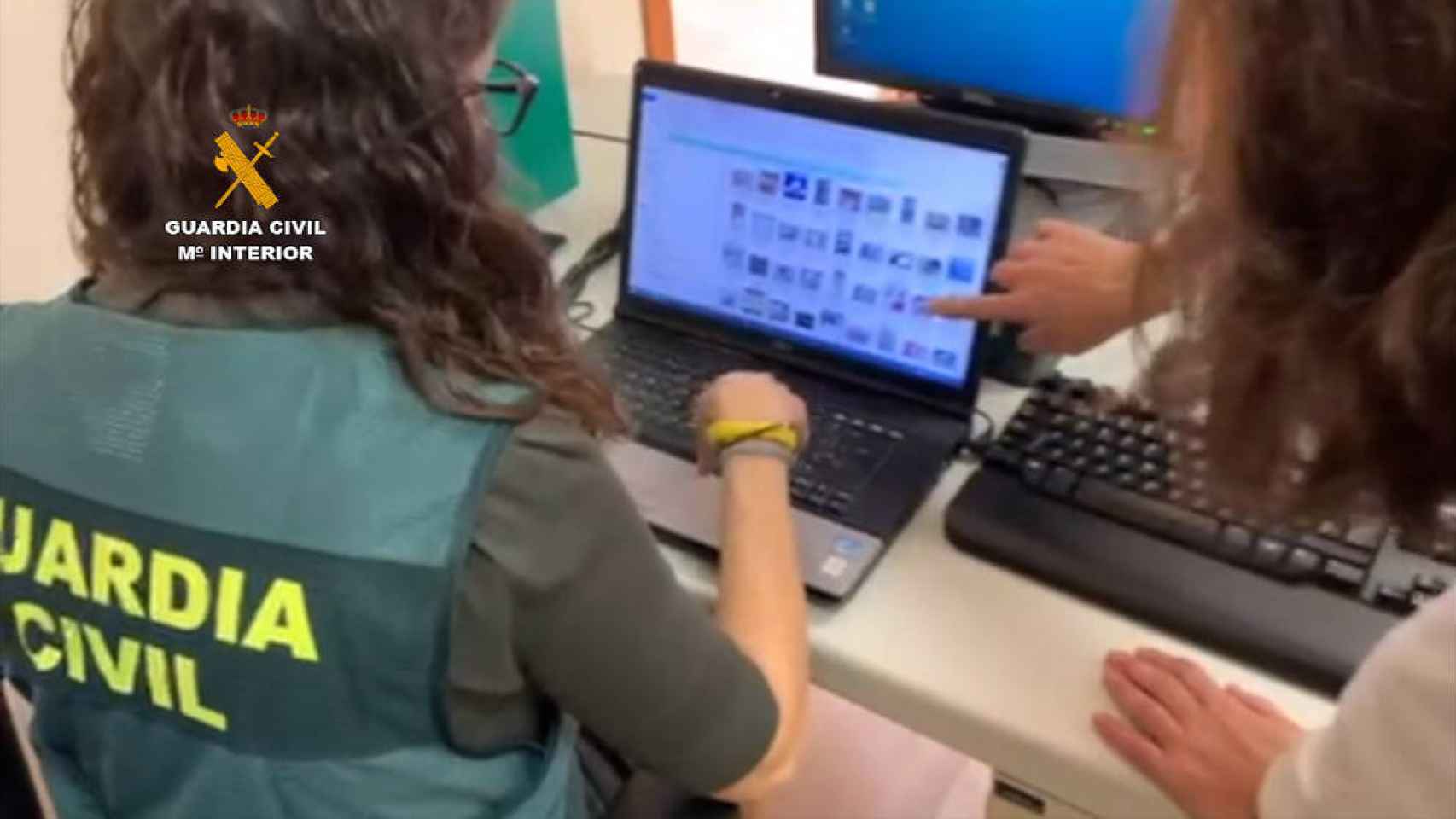

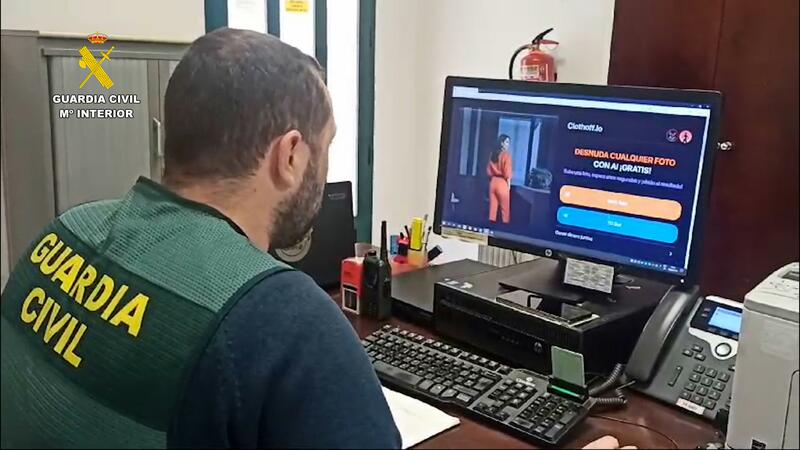

In Madrid, three minors were arrested for distributing AI-generated fake nude images of 13 adolescent girls. The images, created using mobile apps and websites, were shared via messaging apps. The Guardia Civil opened the investigation in April, which led to the minors' arrest and the involvement of the Juvenile Prosecutor's Office. [AI generated]
