The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

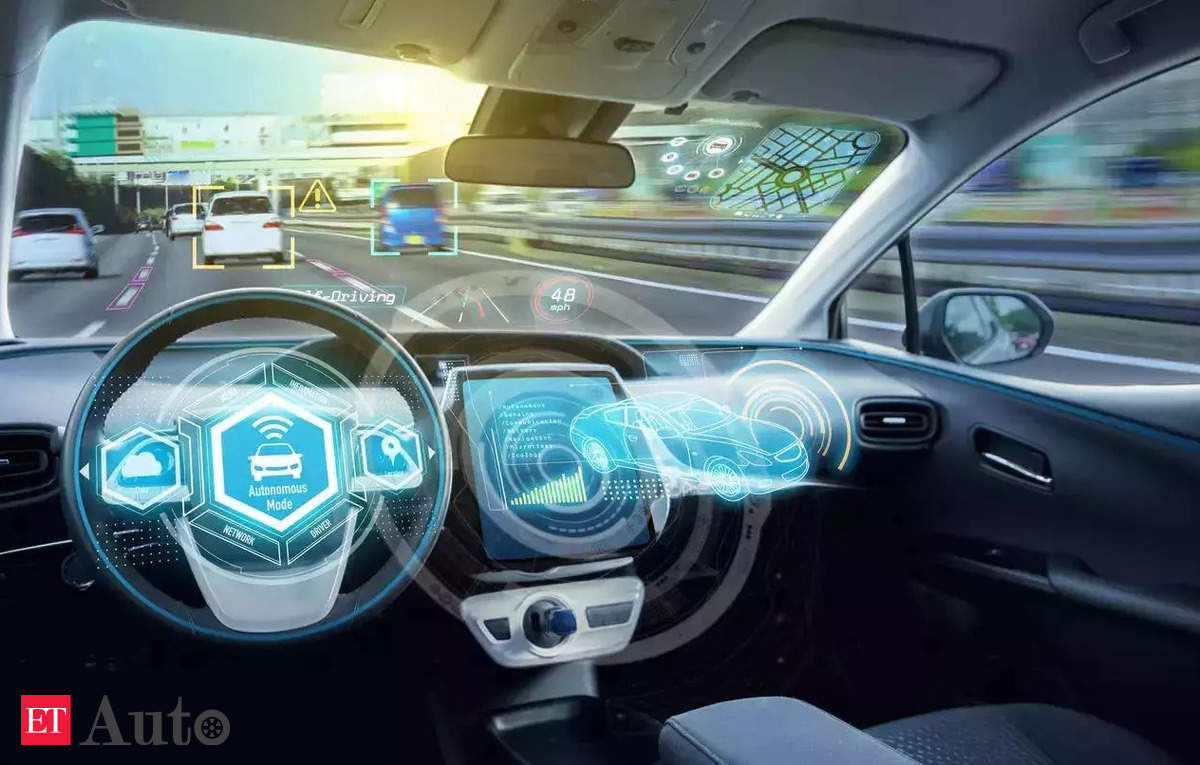

The US Commerce Department will issue proposed export controls next month to restrict key connected-vehicle software components from China and other adversaries. Export controls chief Alan Estevez said critical driver-management and data-handling systems must be produced in allied nations to mitigate the national security risks posed by malicious or faulty remote access. [AI generated]
