The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

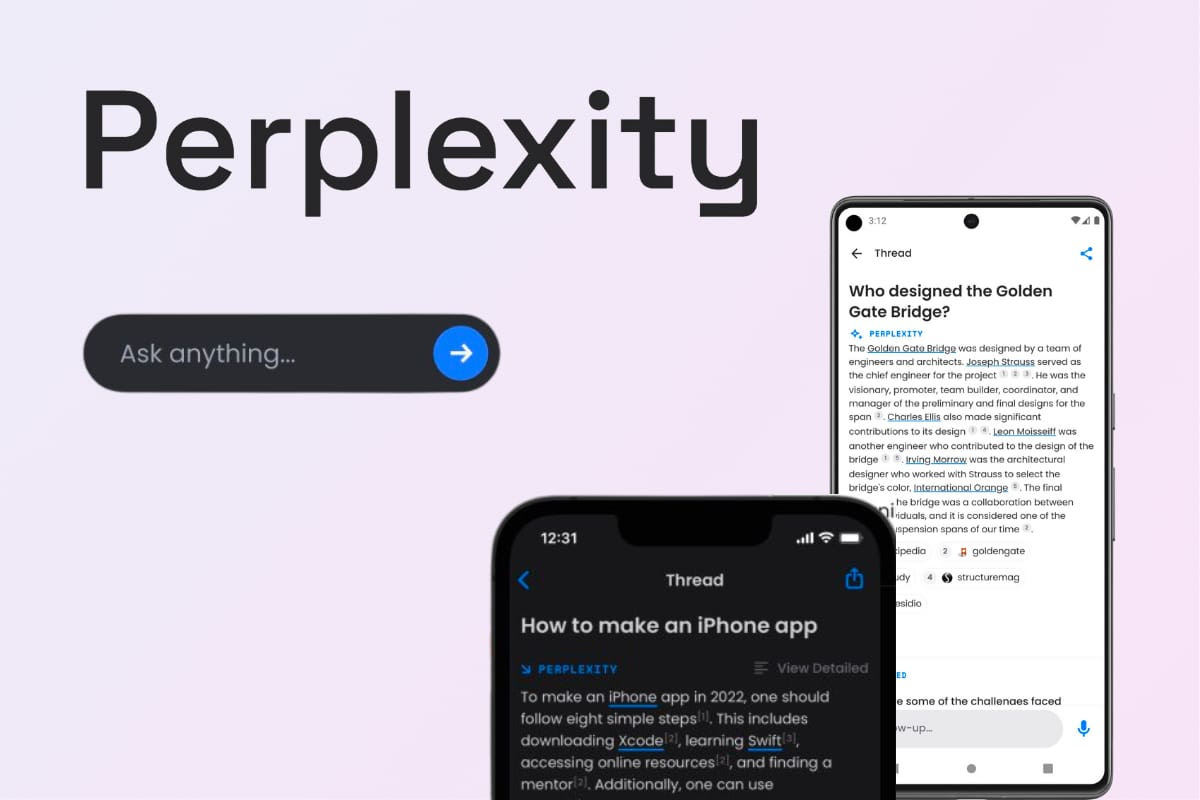

Condé Nast, publisher of Vogue, Wired and The New Yorker, sent a cease-and-desist letter to the AI search engine Perplexity, accusing it of scraping and reproducing its paywalled content without permission. The media group alleges plagiarism and copyright infringement after Perplexity’s web crawlers bypassed its robots.txt directives to gather content for the engine's AI-generated responses. [AI generated]