
The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

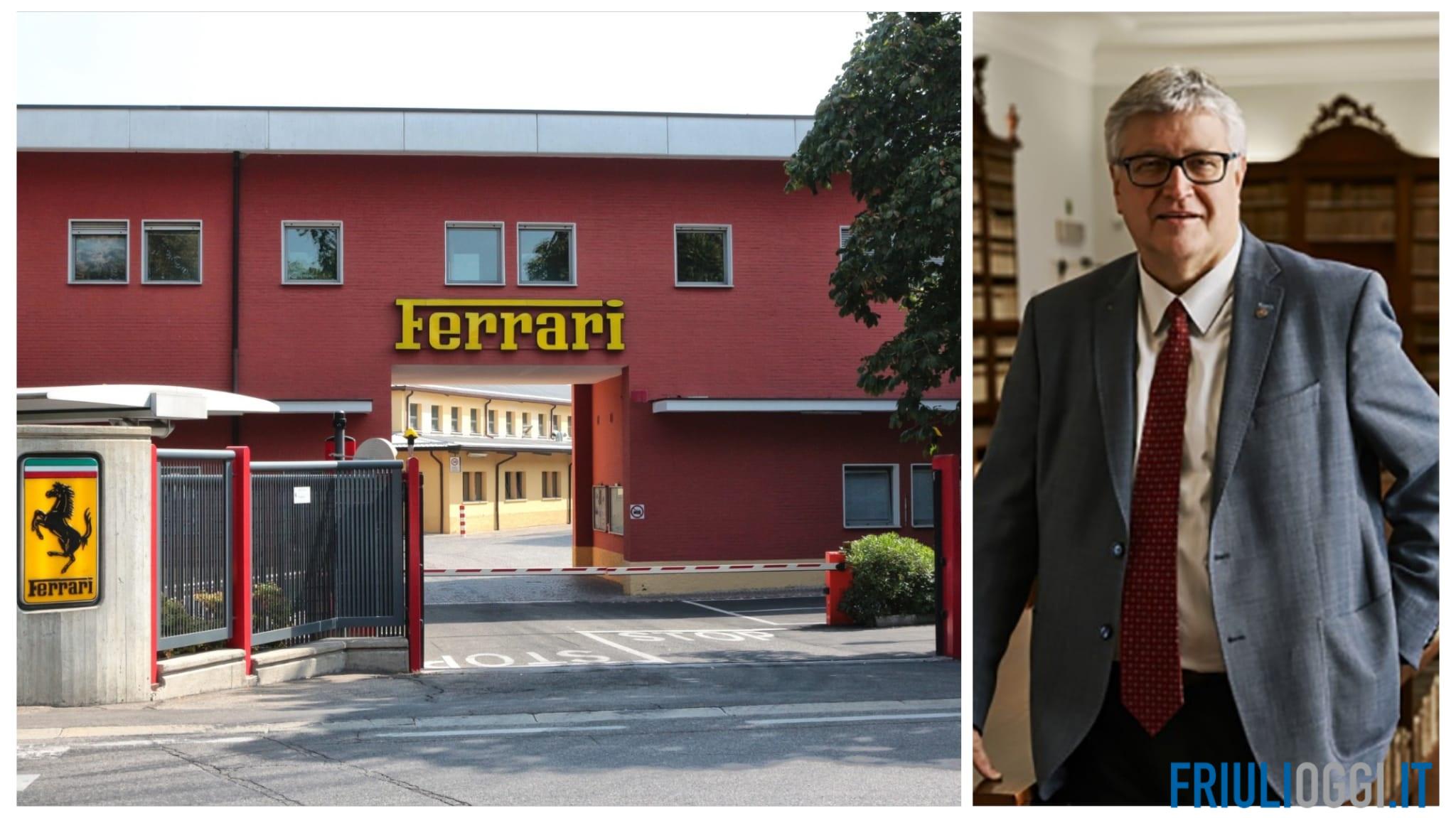

A Ferrari executive was targeted by a scammer who used deepfake technology to impersonate the voice of CEO Benedetto Vigna. The scam involved a fake acquisition deal communicated via WhatsApp and a phone call. The executive grew suspicious and asked a verification question that the scammer could not answer, which prevented potential financial harm. [AI generated]
