The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

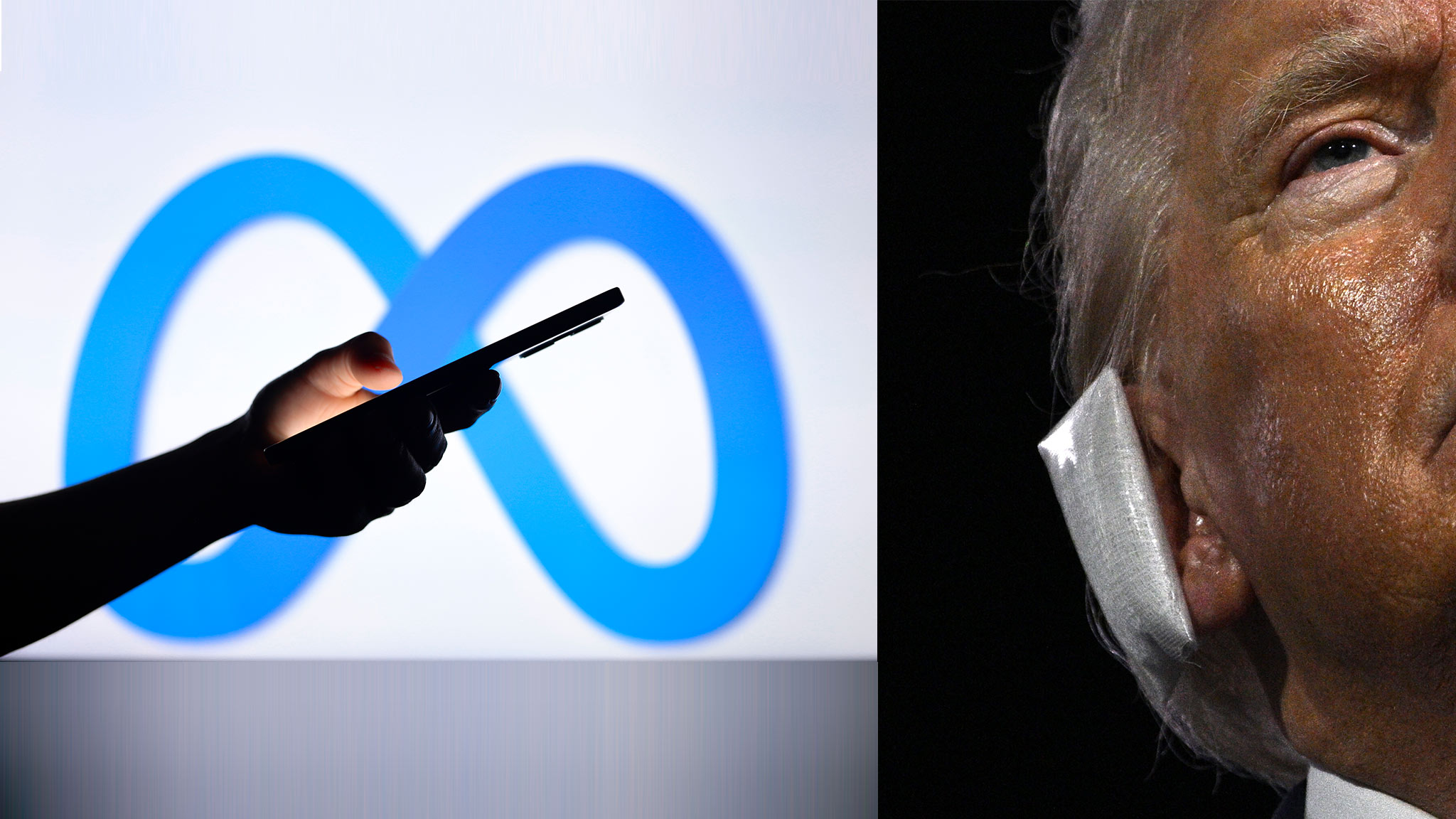

Meta's AI assistant incorrectly claimed that an assassination attempt on Donald Trump did not occur, despite being programmed to avoid the topic. This misinformation, attributed to AI 'hallucinations,' raised concerns about AI reliability and public trust. Meta is addressing the issue, acknowledging the potential harm of such misinformation.[AI generated]
