The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

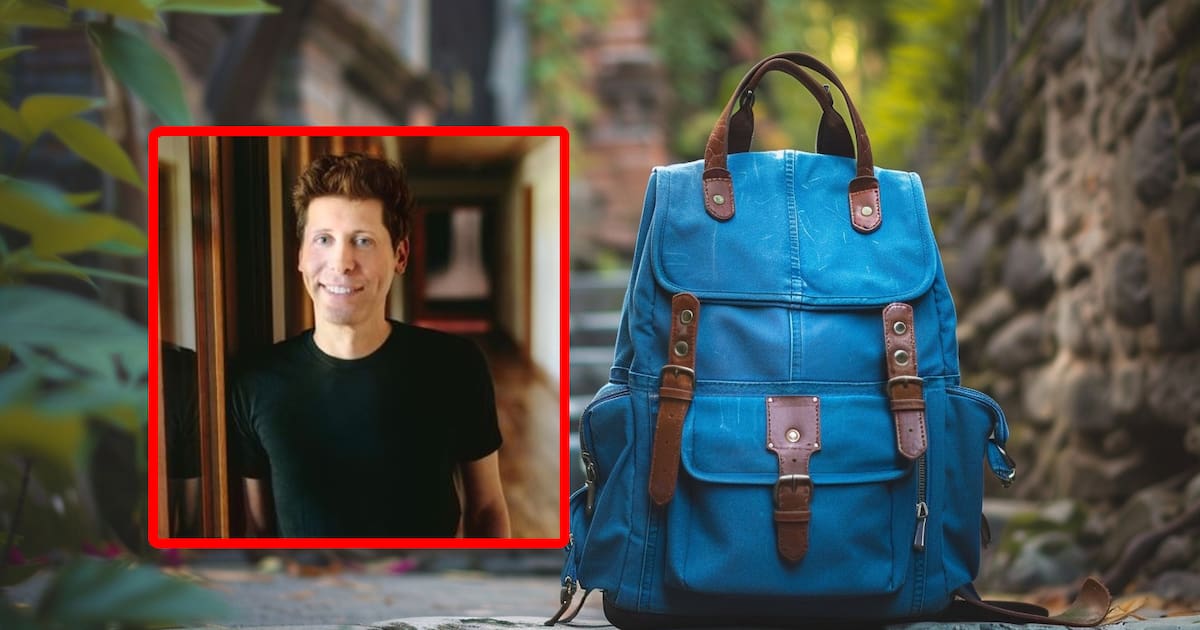

OpenAI CEO Sam Altman reportedly carries a “nuclear backpack” laptop containing codes to remotely deactivate ChatGPT servers in an emergency, mirroring the US president’s nuclear football. The device reflects industry concerns about uncontrolled AI posing existential threats and exemplifies a precautionary measure against potential AI disasters. [AI generated]
