The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

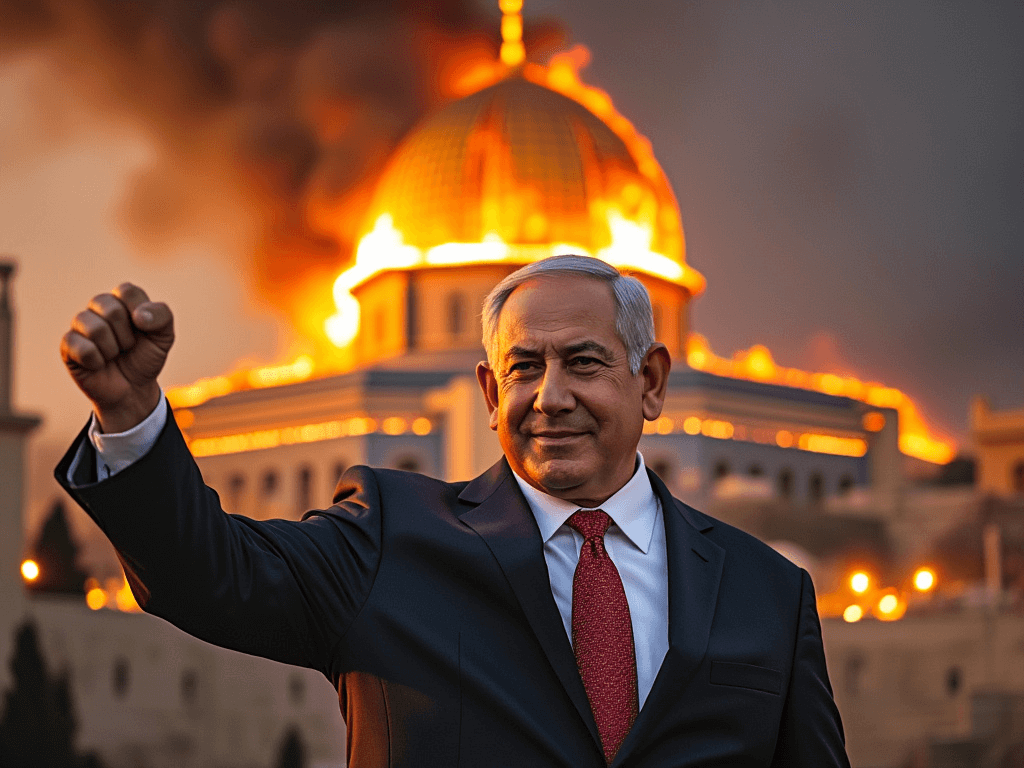

The Irish Data Protection Commission suspended X’s processing of EU users’ data after discovering personal posts scraped without consent to train its AI assistant Grok. Meanwhile, Elon Musk’s xAI rolled out Grok-2, an image generator on X lacking effective content filters, enabling users to produce violent, hateful and misleading deepfake images. [AI generated]
