The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

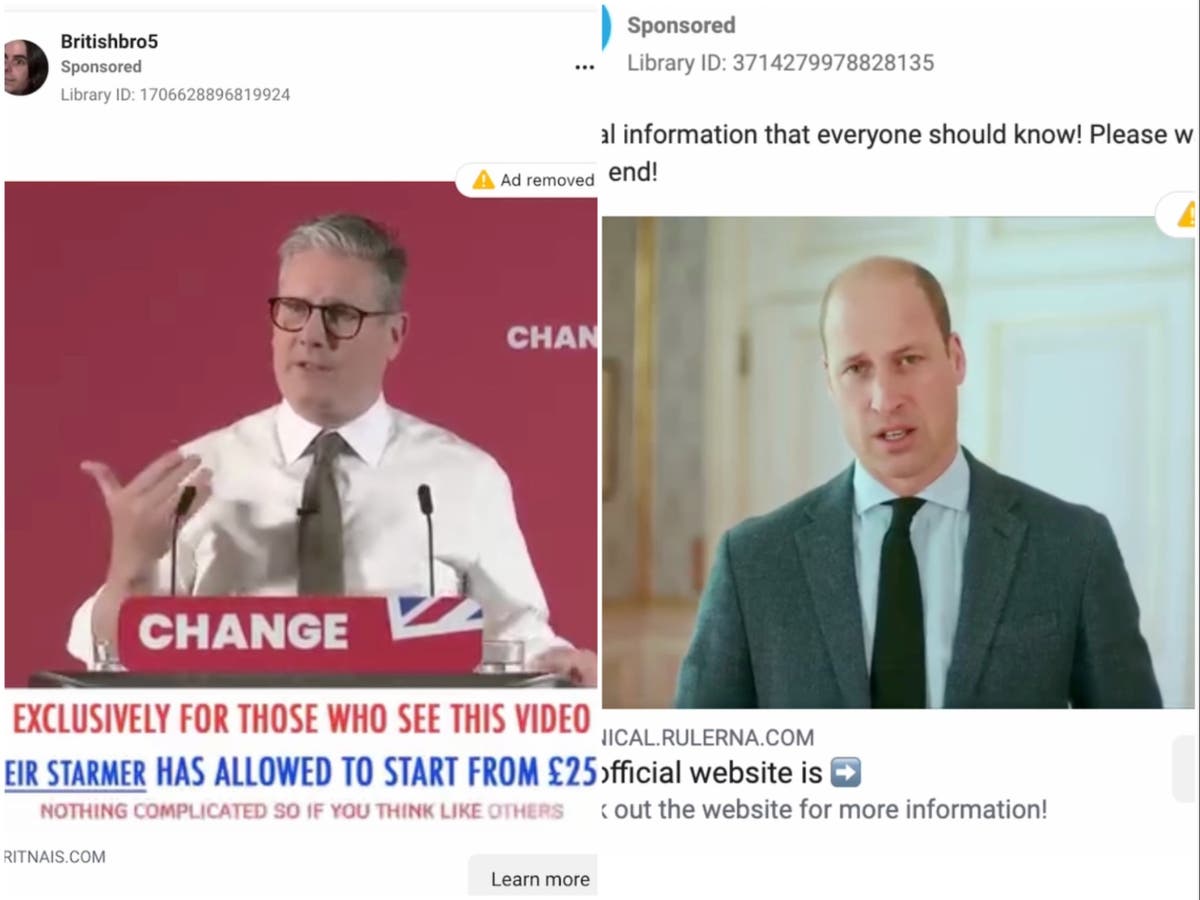

AI-generated deepfakes of UK Prime Minister Keir Starmer and Prince William are being used in fraudulent ads on Meta platforms to promote a scam cryptocurrency platform, "Immediate Edge." Researchers at Fenimore Harper identified over 250 such ads. By falsely presenting the two figures as endorsing the scheme, the ads mislead users and expose them to potential financial harm.[AI generated]