The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

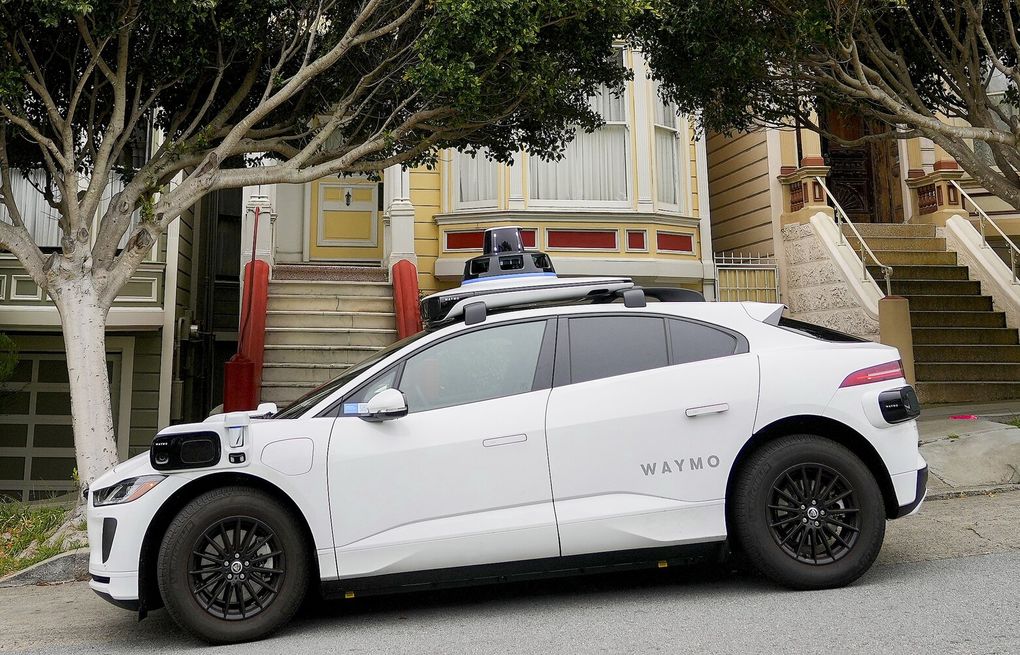

Waymo's self-driving cars in San Francisco have been honking at each other in a parking lot at night, disrupting residents' sleep. The honking persisted despite Waymo's attempts to fix it, and the incident drew global attention. The company has since promised a solution to stop the honking. [AI generated]
