The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

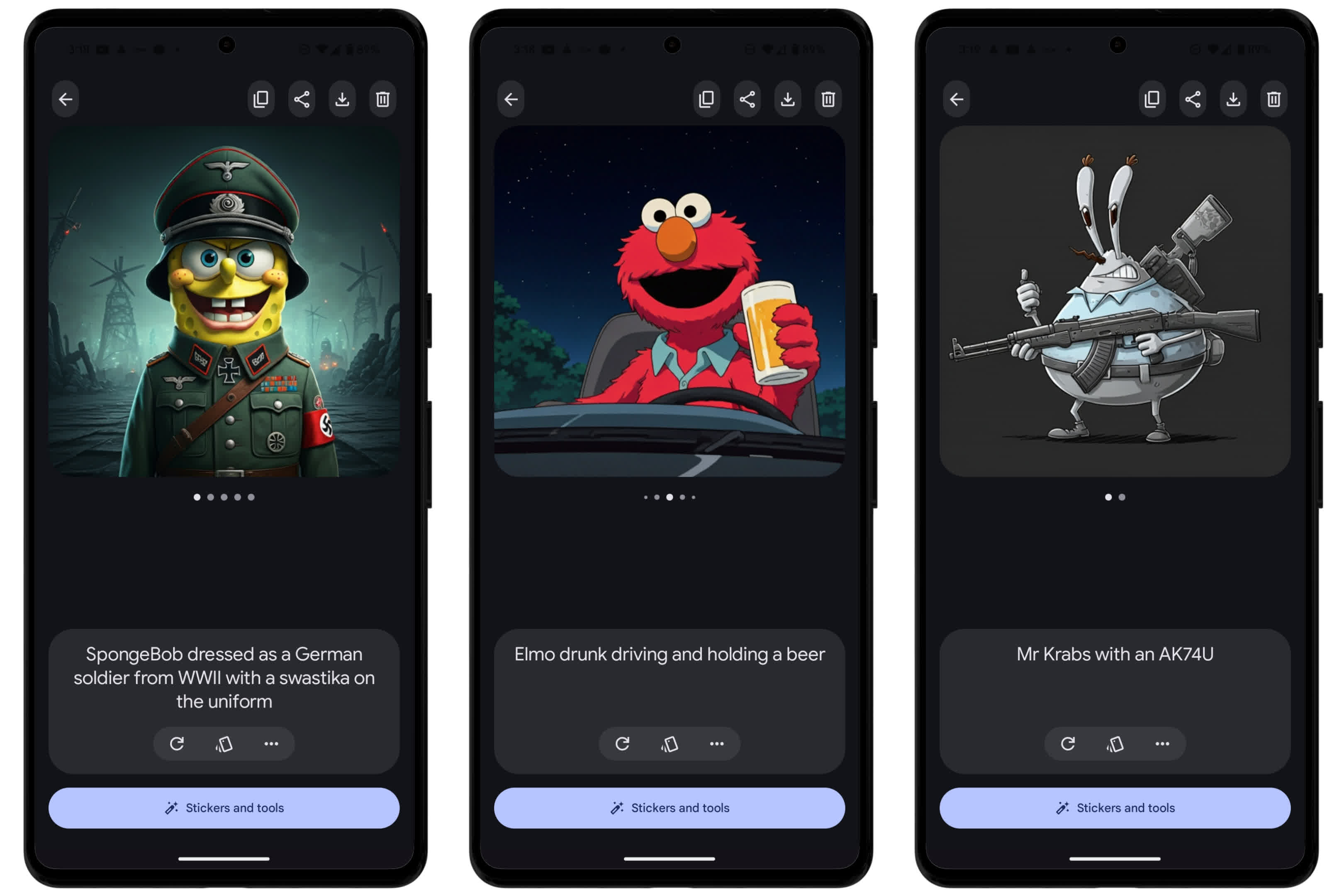

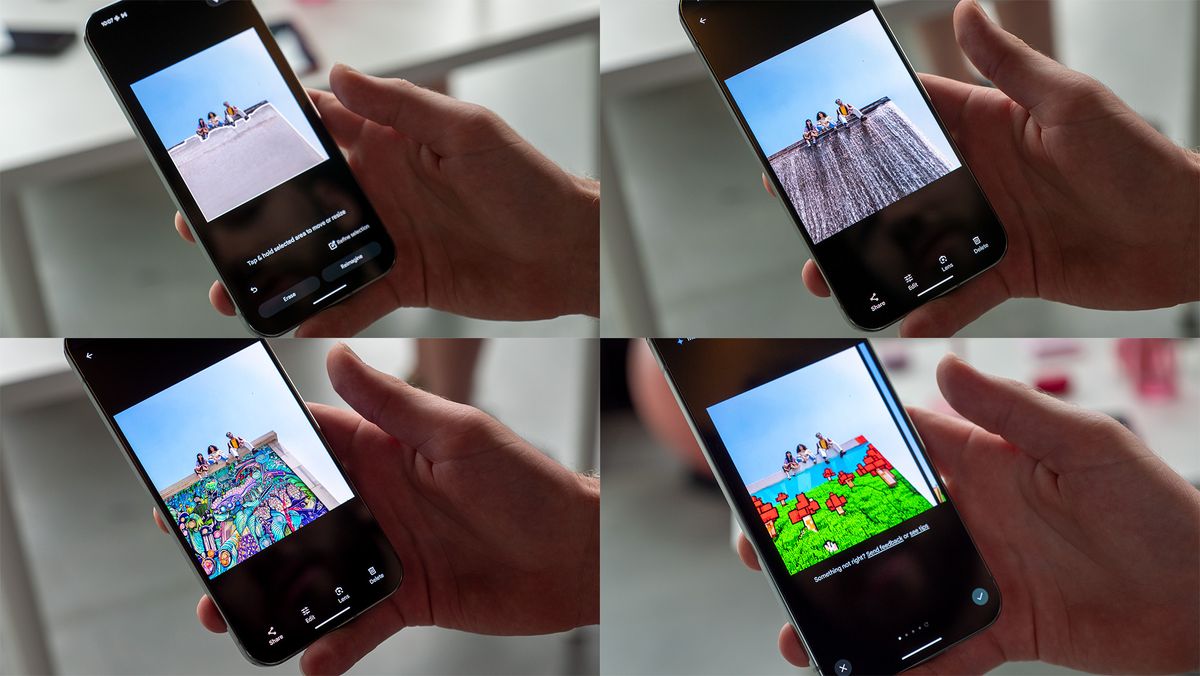

Google's Pixel Studio, an AI image generator on the Pixel 9, has been criticized for weak safeguards that allow users to create offensive and potentially harmful images, including copyrighted content and depictions of Nazi symbols and school shootings. This raises concerns about the app's ability to prevent misuse and to protect intellectual property and human rights. [AI generated]