The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

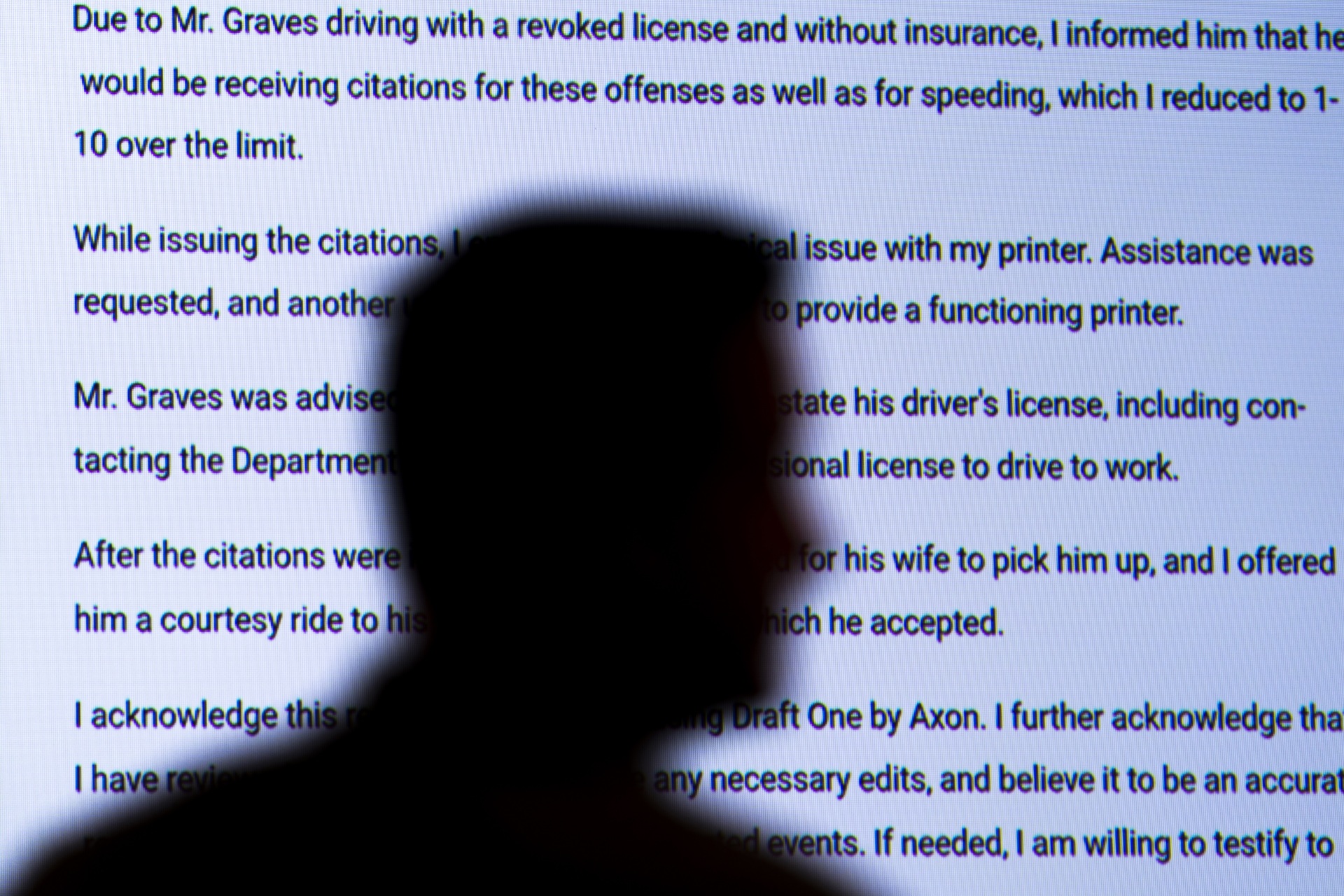

US police departments are considering Axon's AI tool, "Draft One," which uses GPT-4 to generate police reports from body camera audio. Experts warn that the tendency of AI to "hallucinate" or otherwise produce errors could lead to wrongful imprisonment, raising concerns about human rights violations and legal obligations. [AI generated]