The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

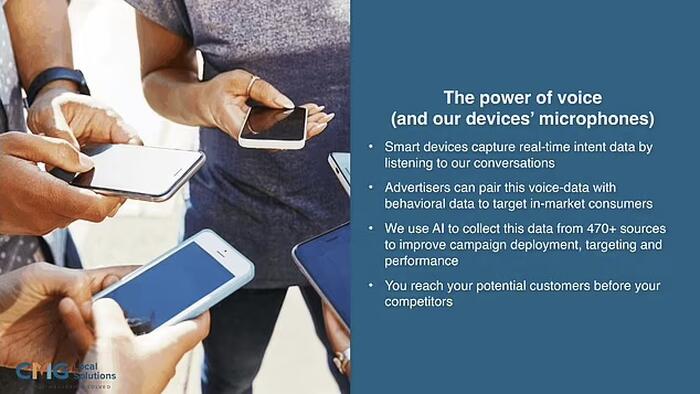

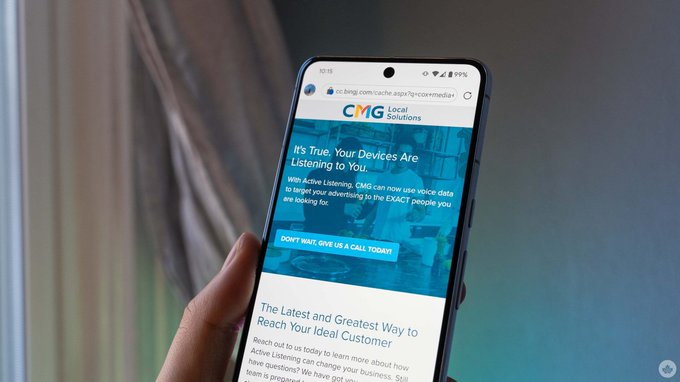

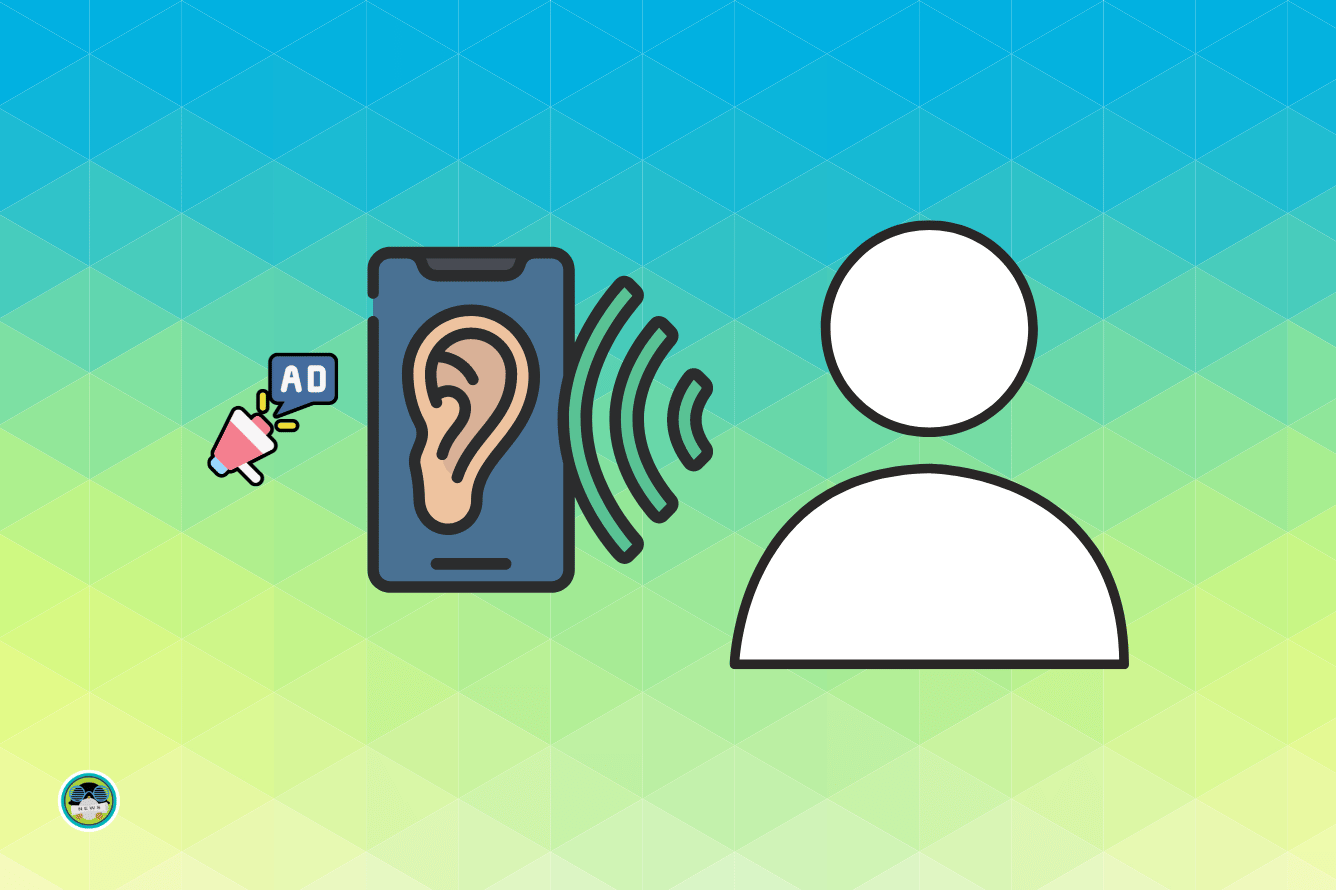

Cox Media Group admitted using AI-powered 'Active Listening' software to eavesdrop on smartphone conversations, targeting ads based on captured data. This practice, involving major clients like Meta, Google, and Amazon, raises significant privacy concerns due to unauthorized surveillance and data collection without user consent. [AI generated]