The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

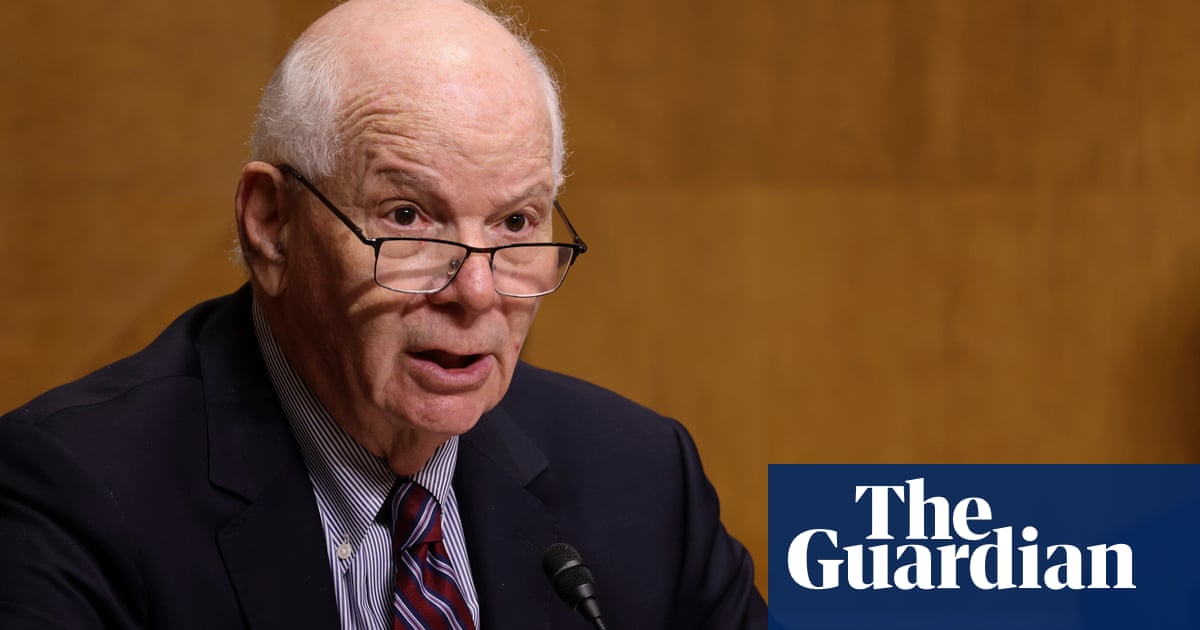

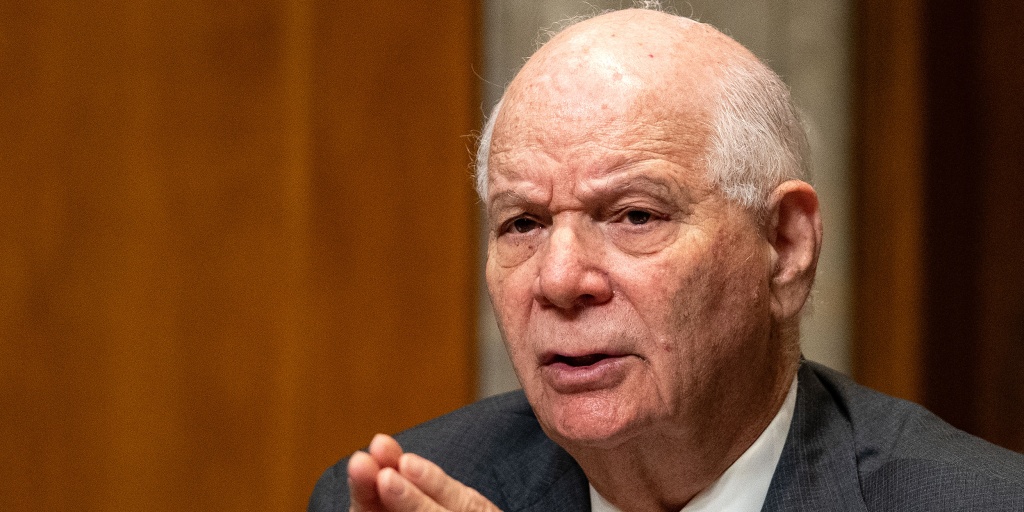

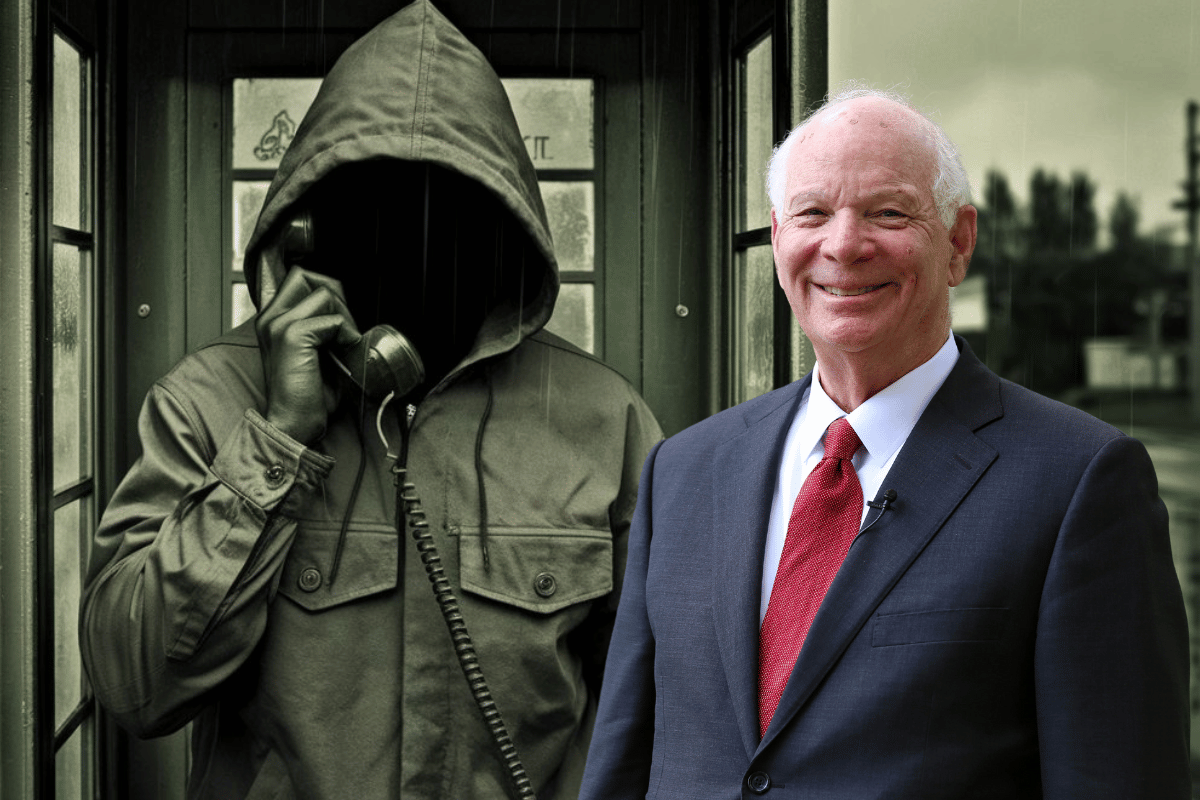

Senator Ben Cardin was targeted by a deepfake scammer posing as former Ukrainian Foreign Minister Dmytro Kuleba during a Zoom call. The deepfake technology convincingly mimicked Kuleba's appearance and voice, raising security concerns. Cardin became suspicious when the impersonator asked uncharacteristic questions, prompting Senate security to issue warnings.[AI generated]