The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

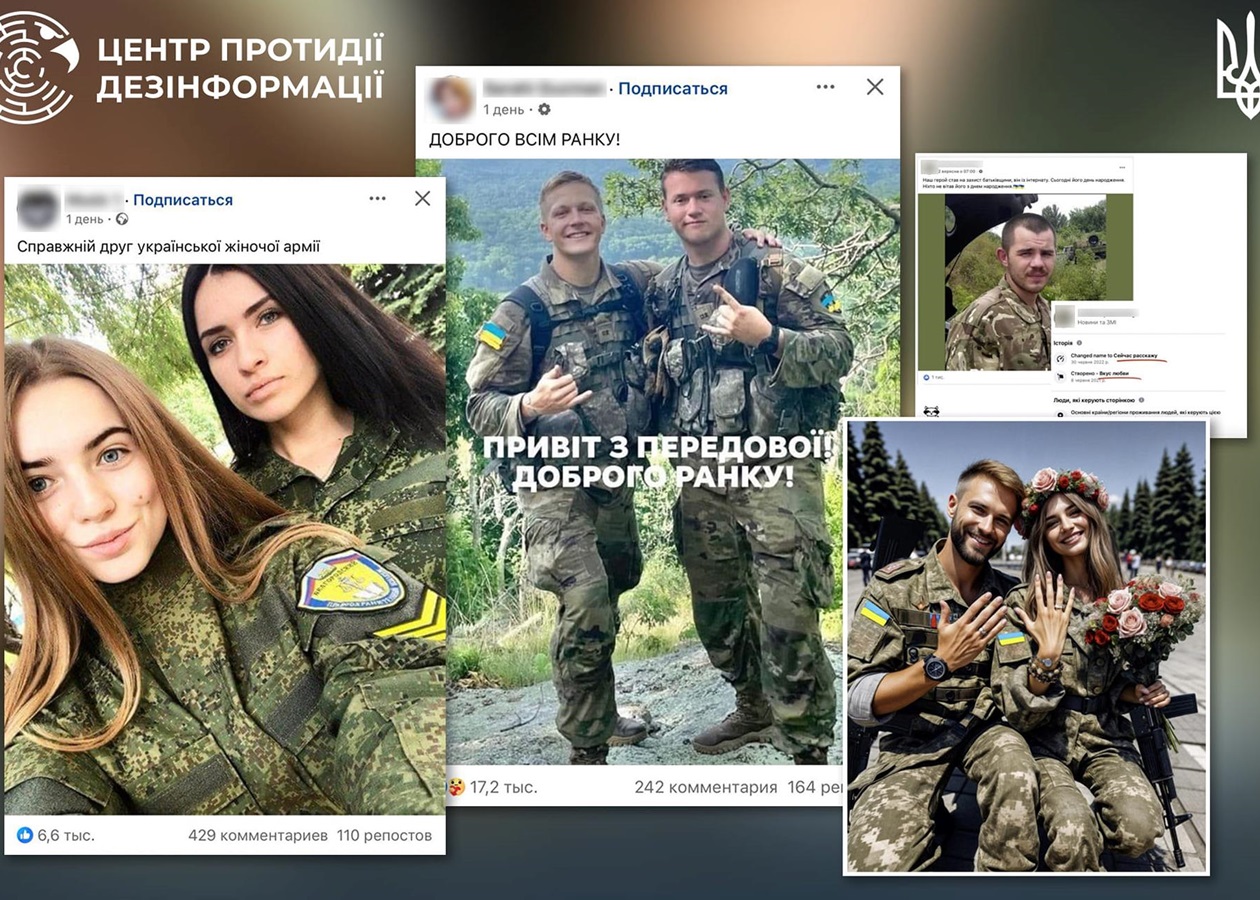

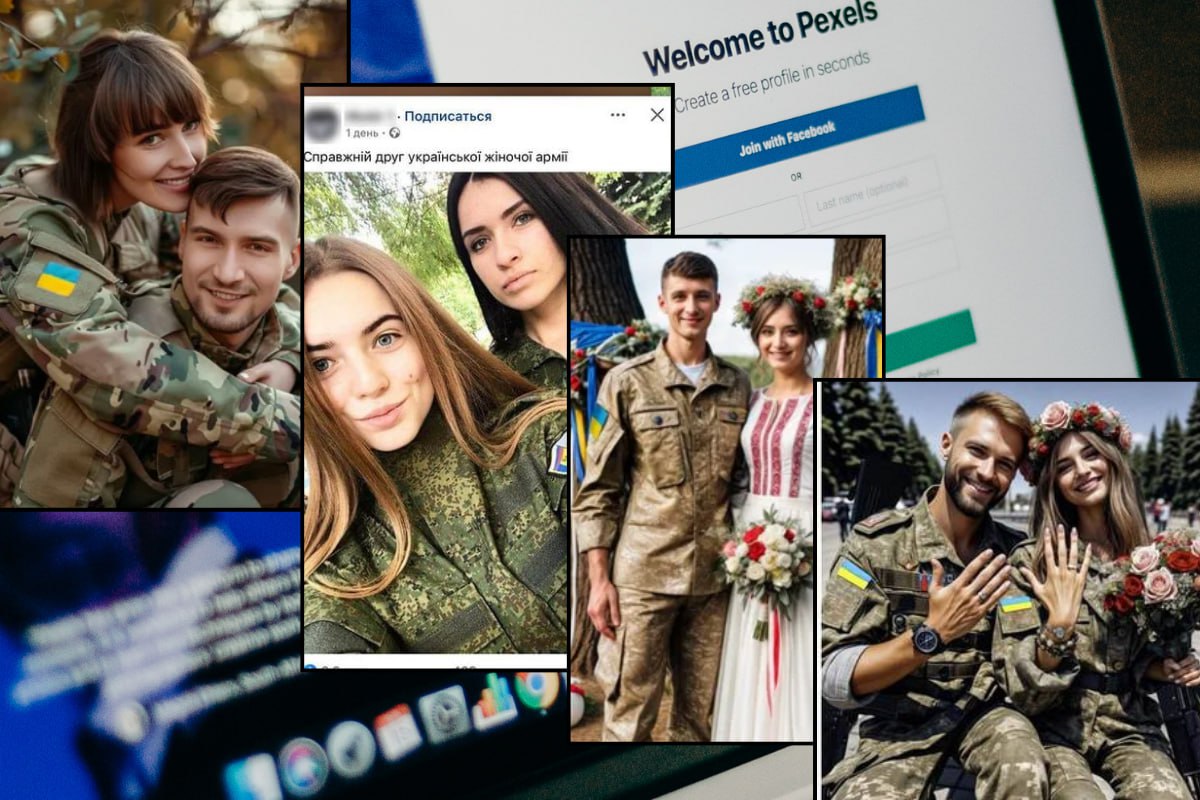

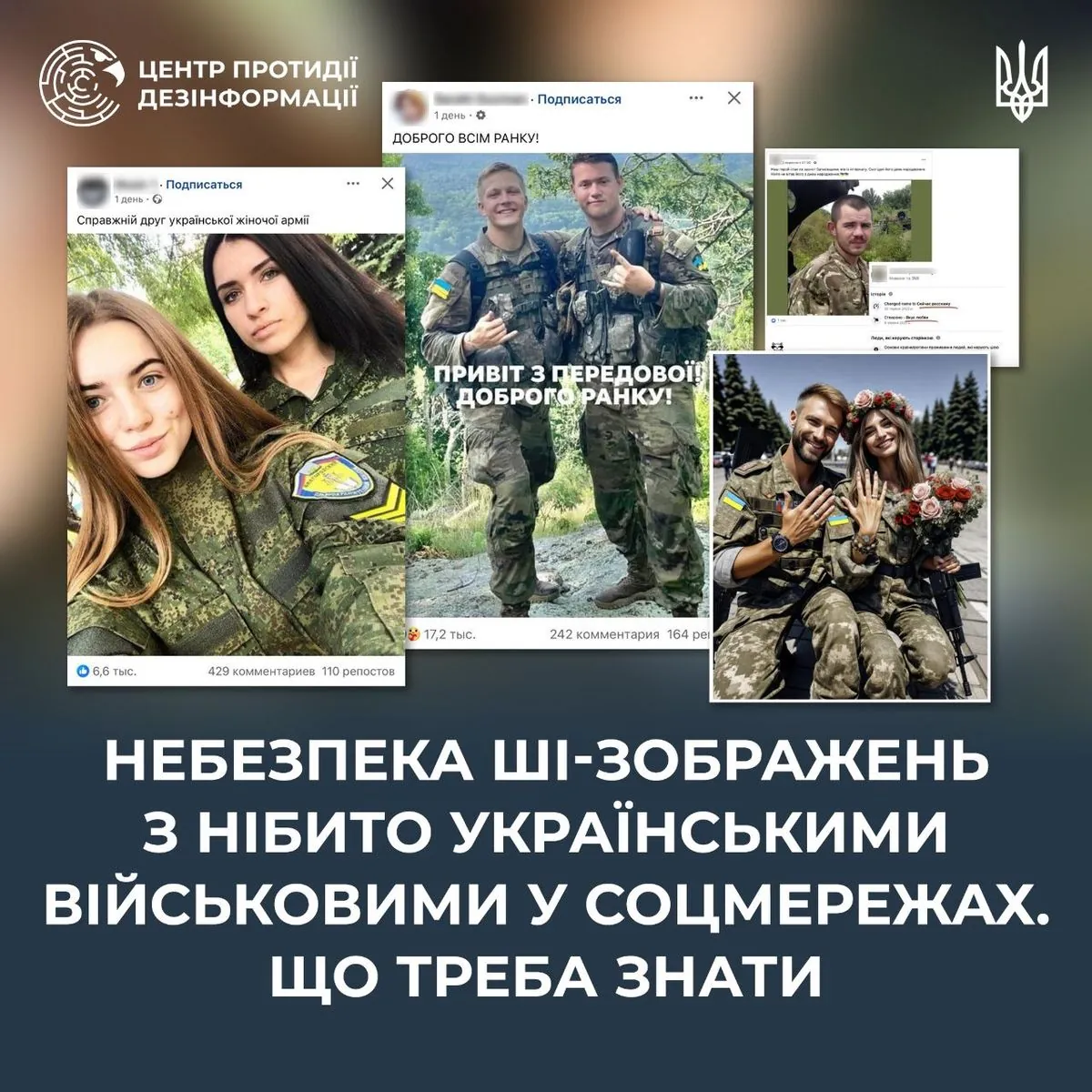

AI-generated images of supposed Ukrainian soldiers and civilians are being spread on social media by bots to manipulate emotions, boost engagement, and facilitate Russian disinformation and fraud. Ukrainian authorities warn these posts are part of an information warfare campaign, exploiting public trust and enabling harmful narratives and scams. [AI generated]