The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

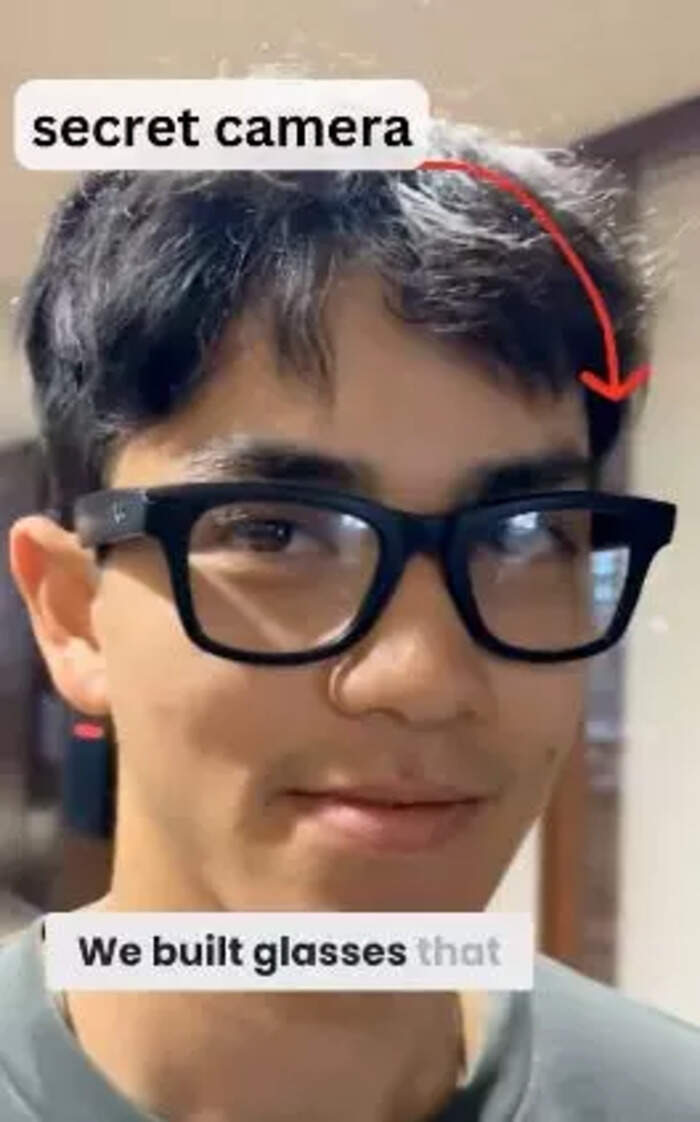

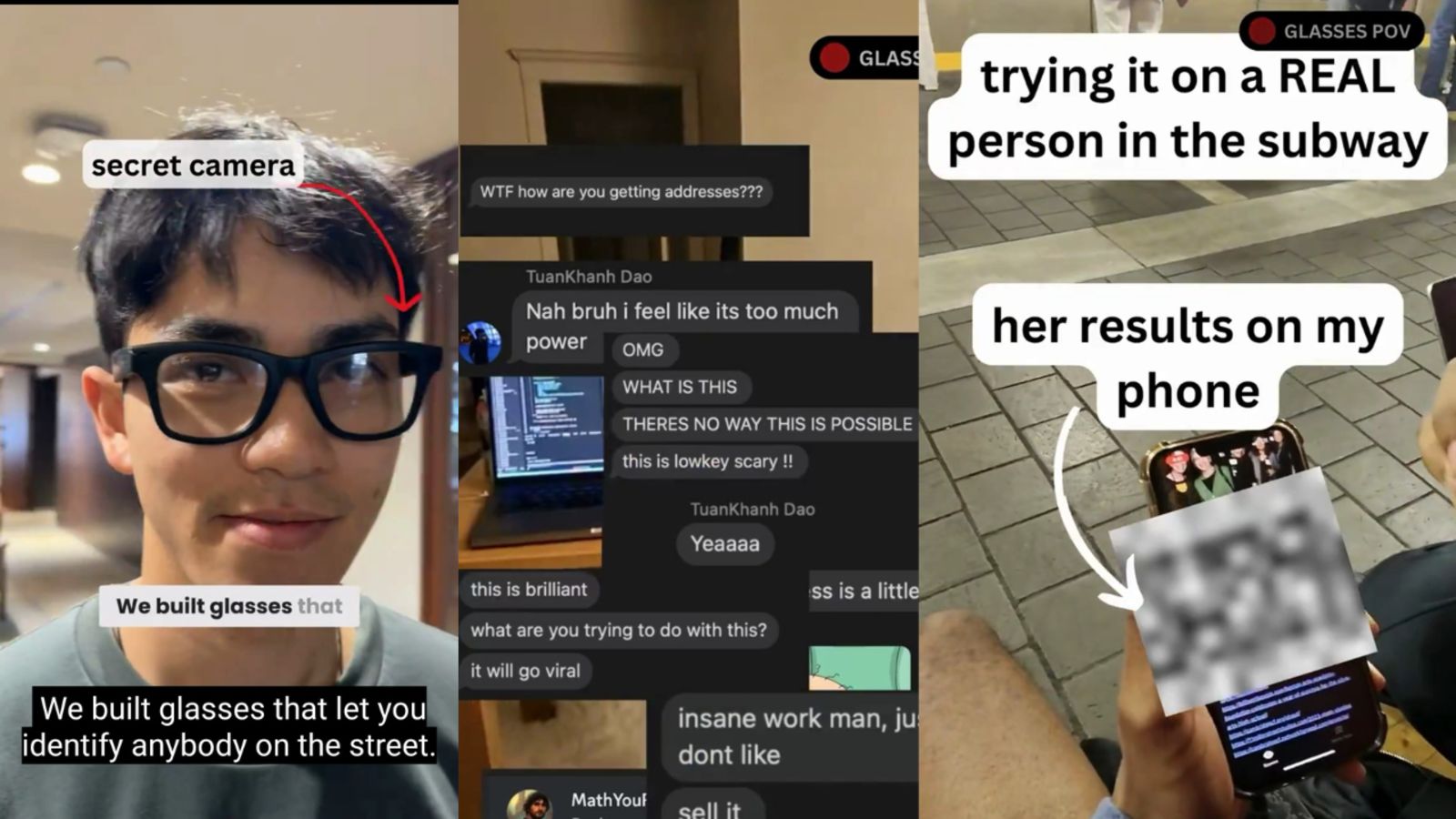

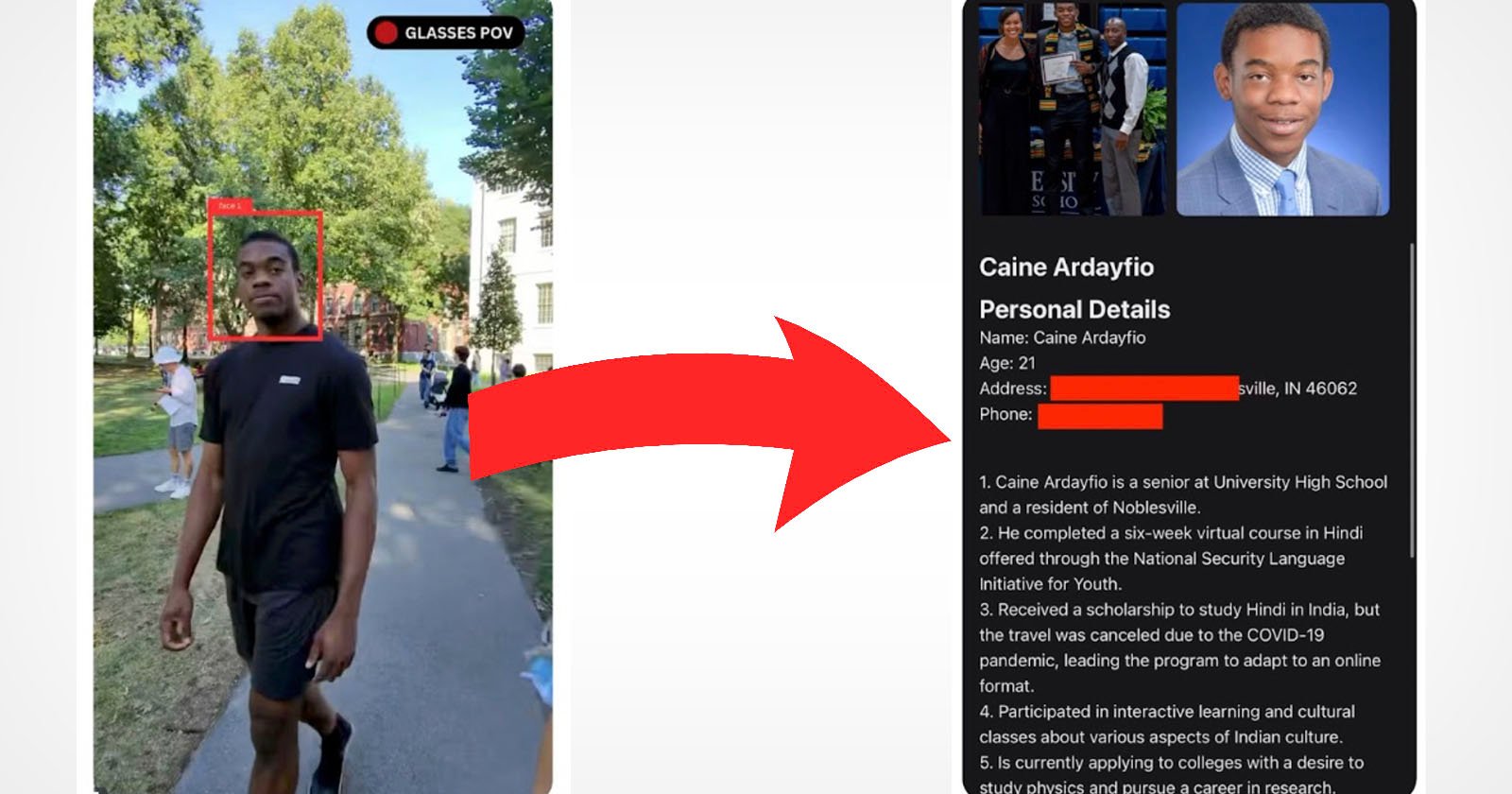

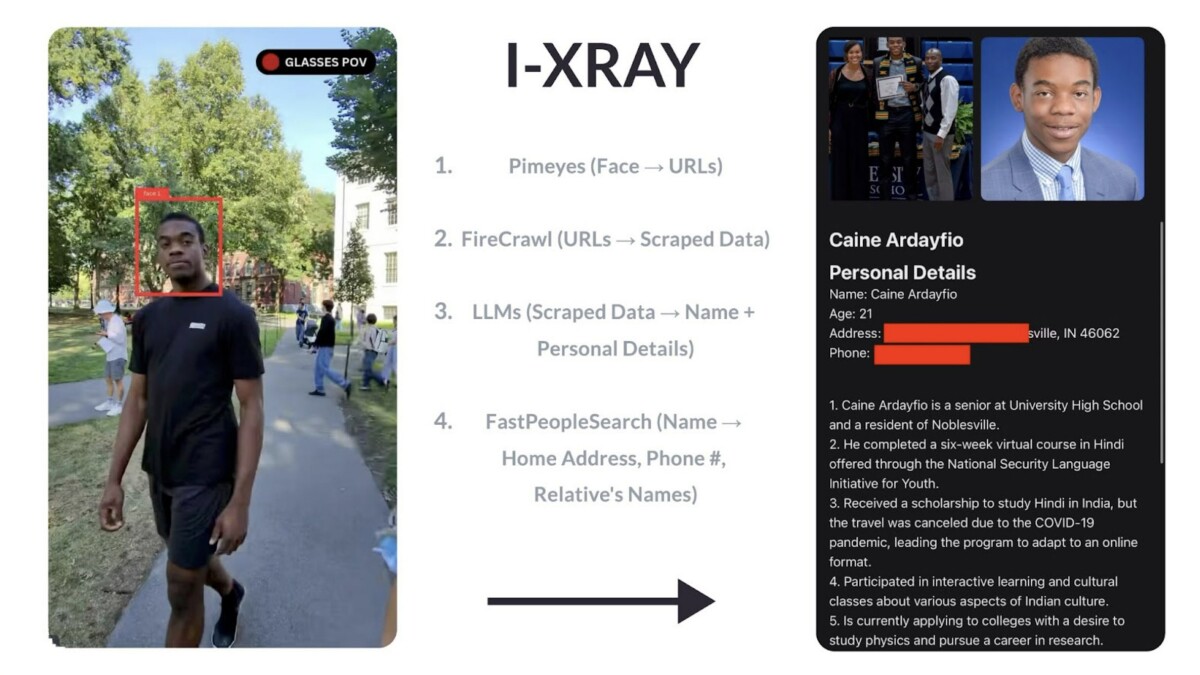

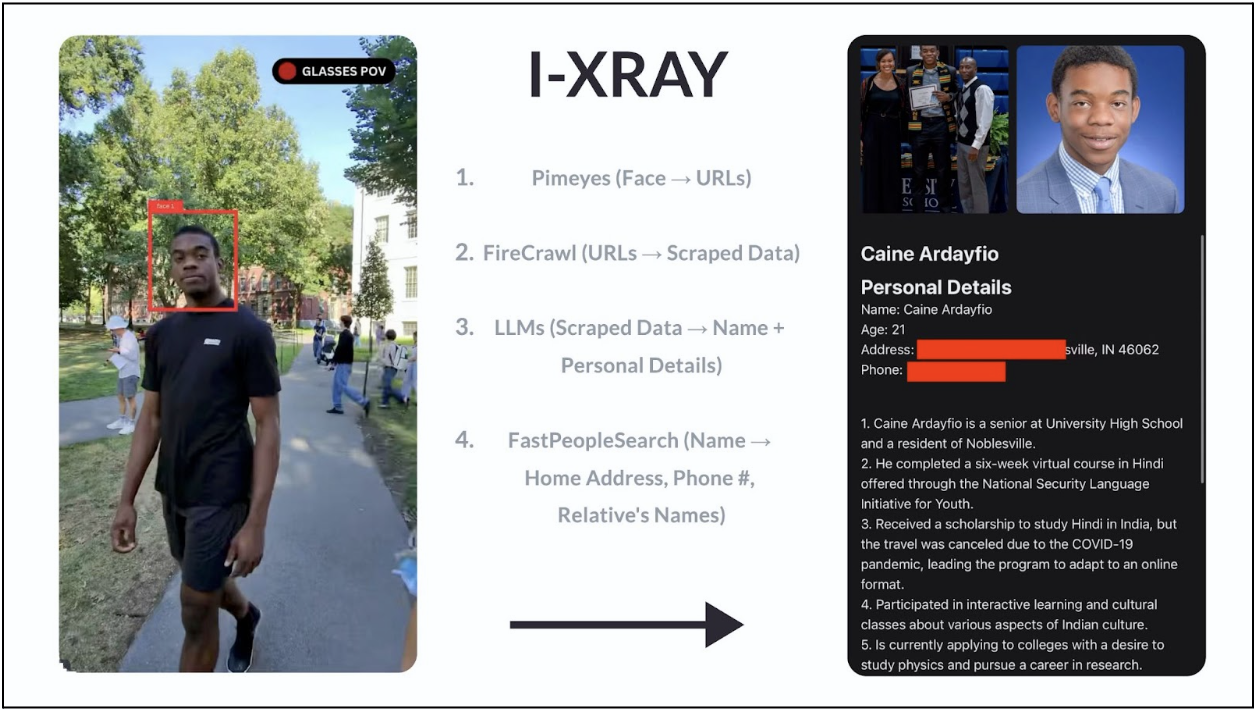

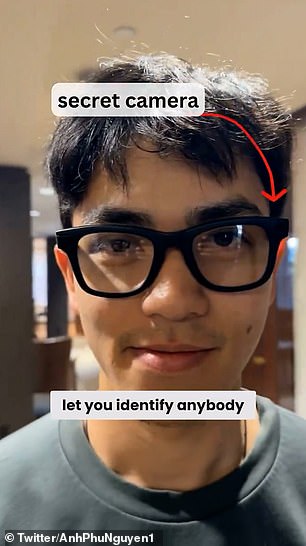

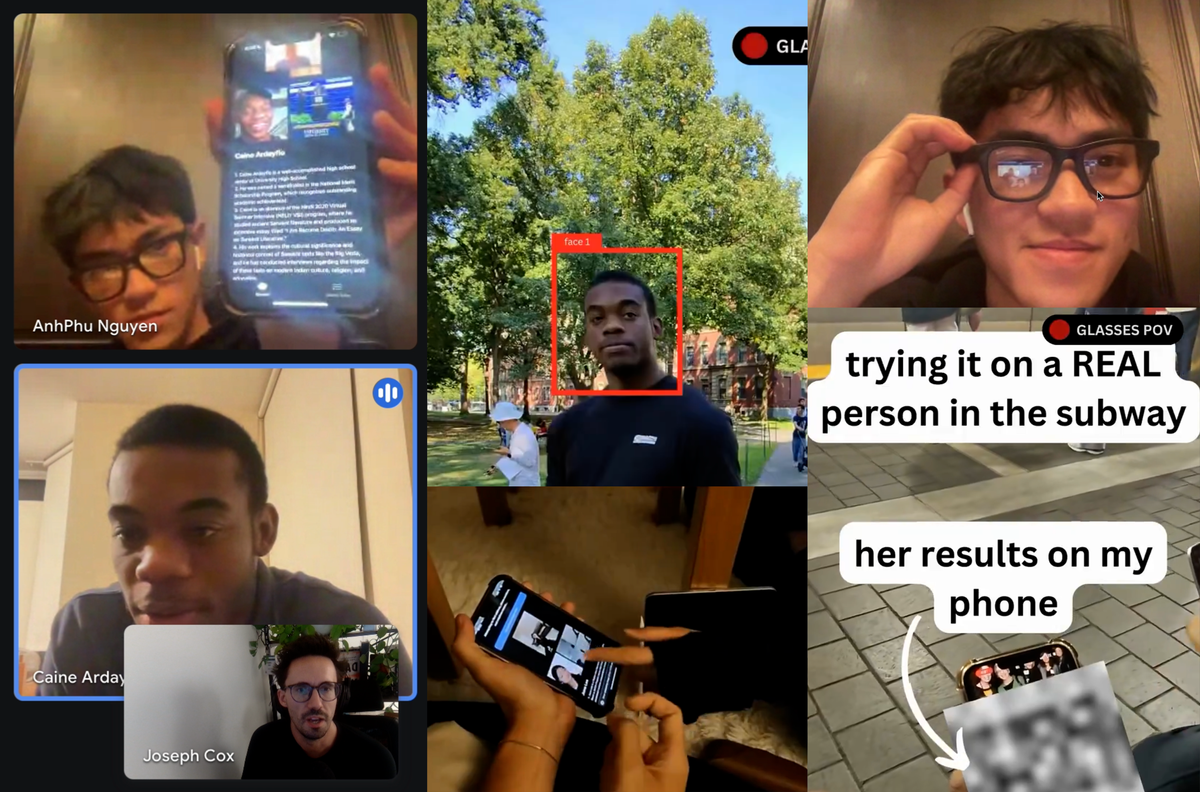

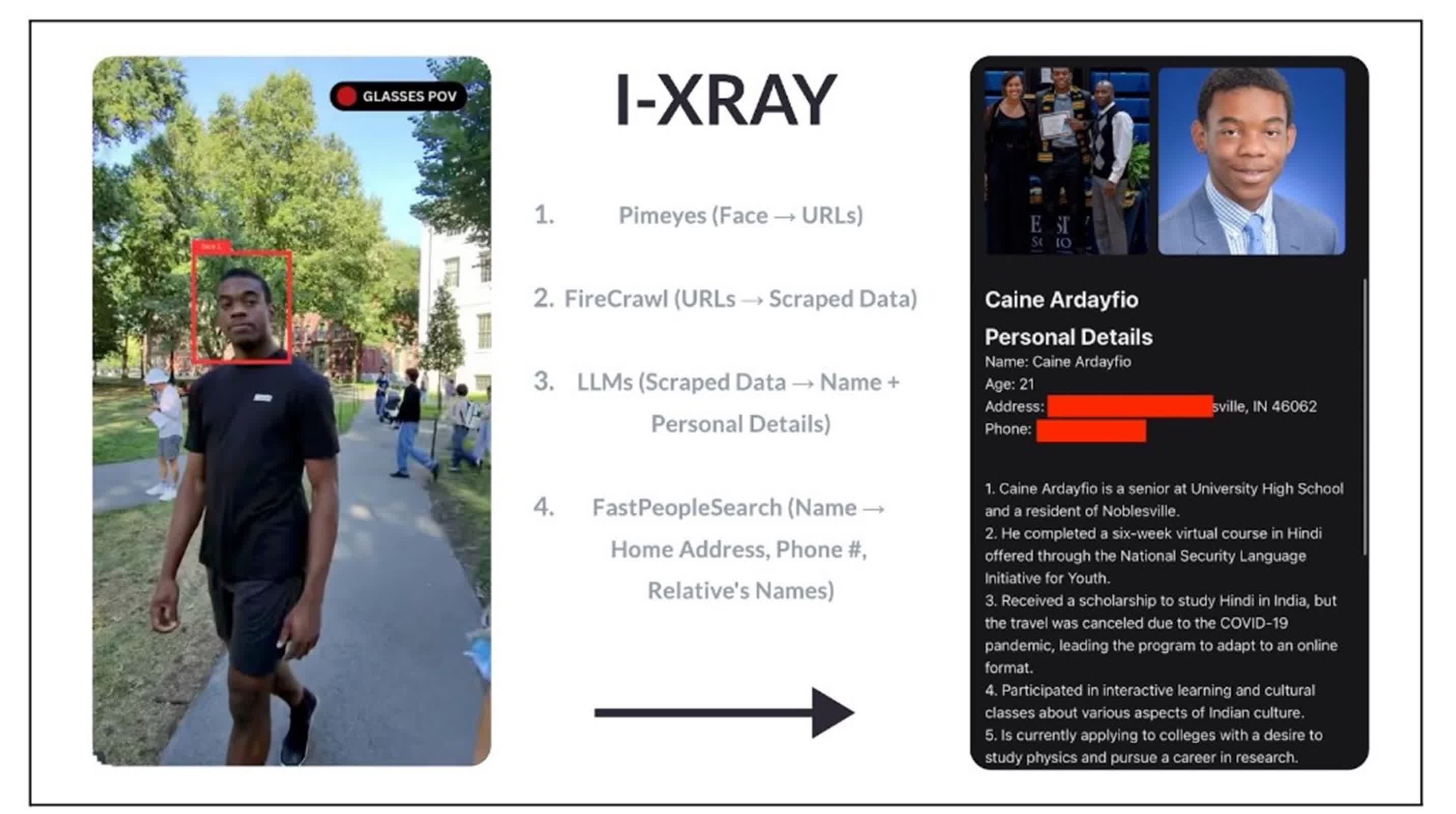

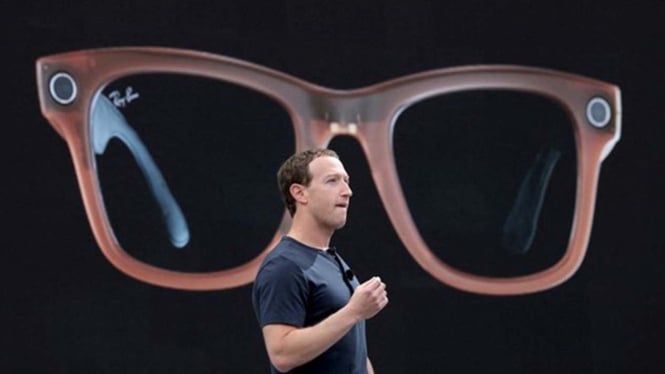

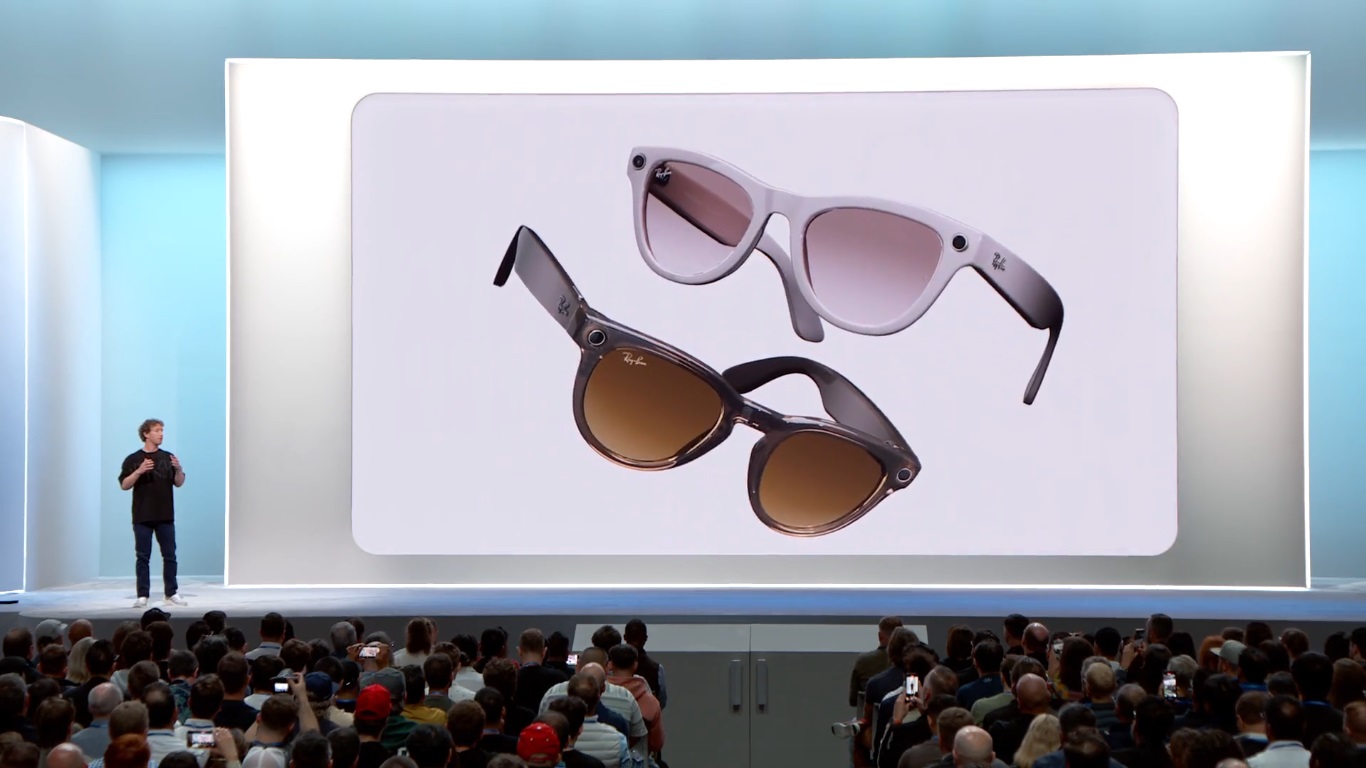

Two Harvard students, AnhPhu Nguyen and Caine Ardayfio, developed a system that uses Meta's smart glasses and AI facial recognition to identify strangers and access their personal information without their consent. The project, named I-XRAY, highlights significant privacy concerns and potential human rights violations associated with consumer technology. [AI generated]